Simulation of personalized english learning path recommendation system based on knowledge graph and deep reinforcement learning

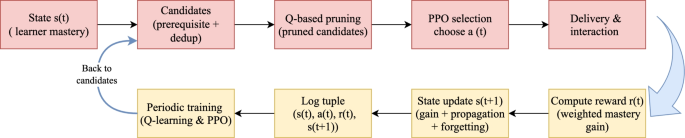

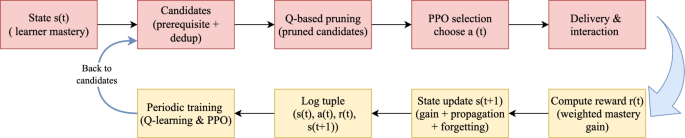

This study proposes an online personalized English learning path recommendation method that integrates knowledge graphs with deep reinforcement learning, aiming to achieve precise modeling of individual learner differences and dynamic optimization of learning trajectories. The method utilizes a knowledge graph to represent various knowledge points and their semantic relationships within the English learning domain, thereby capturing the hierarchical structure and dependency relations of the learning content through a graph-based model. This KG-aware modeling has been widely adopted in recommender systems28. Concurrently, a reinforcement learning framework is incorporated to formulate the recommendation task as a dynamic decision-making process based on states, actions, and rewards. This enables the system to iteratively refine its recommendation strategy according to the learner’s current knowledge state and behavioral feedback. The overall system architecture consists of two tightly coupled core modules: the Knowledge Graph and Learner Knowledge State Modeling Module, and the Reinforcement Learning-based Recommendation Strategy Module. The former provides a structured representation of the learner’s knowledge state, while the latter continuously optimizes the learning path based on interactive feedback, thereby facilitating a dynamic and personalized recommendation of learning paths. Operationally, Fig. 1 summarizes the algorithmic backbone executed at each step: starting from s(t), the system forms prerequisite-feasible and deduplicated candidates, prunes them by Q-values, performs PPO selection to obtain a(t), delivers the item, computes r(t), updates \(s(t1)\) via gain/propagation/forgetting, logs \((s(t), a(t), r(t), s(t1))\), and periodically trains the Q-learning and PPO components before looping back to candidate generation.

Two-row Z-shaped loop for one recommendation step. The top row goes from state s(t) through candidates (prerequisite + dedup), Q-based pruning, PPO selection of a(t), and delivery. The bottom row computes reward r(t), updates the state to \(s(t1)\) via gain/propagation/ forgetting, logs \((s(t), a(t), r(t), s(t1))\), performs periodic training (Q-learning & PPO), and loops back to candidates.

This study was conducted in accordance with the guidelines of the Declaration of Helsinki and was approved by the Ethics Committee of Yancheng Institute of Technology. The studies were carried out in accordance with the local legislation and institutional requirements. Written informed consent for participation in this study was obtained from the participants’ legal guardians or next of kin.

Knowledge graph construction and learning resource structuring

To model the relationships among English knowledge points, we construct a domain-specific knowledge graph based on actual learning data. In this graph, each node represents a distinct knowledge point, such as grammatical concepts or vocabulary topics, and edges denote the dependencies or associations between knowledge points. These edges fall into two main categories: prerequisite relations and semantic relations. The prerequisite relation captures the hierarchical dependency in learning sequences, while the semantic relation reflects content-level similarities or resource-based co-occurrence between concepts.

Let \(K = \\) denote the set of all knowledge points, where N is the total number of nodes. Each knowledge point is assigned a unique identifier and initialized using one-hot encoding. The edge set of the graph is defined as \(E = E_ \cup E_{\text }\), combining both directed prerequisite edges and undirected semantic edges.

Prerequisite relations are established using a hybrid strategy that combines expert knowledge with data-driven inference. Initially, domain experts annotate the fundamental logical dependencies between core concepts based on curriculum design, forming an initial edge set \(E_{\text {prereq}}^{(0)}\). To extend this structure, we incorporate learner behavior data. Let \(s = \{k_{s_1}, k_{s_2}, \dots , k_{s_T}\}\) represent a sequence of knowledge points mastered by a learner. If a statistically significant number of learners demonstrate that \(k_i\) is consistently acquired before \(k_j\), and the probability of mastering \(k_j\) increases after \(k_i\) has been learned, a directed edge \(k_i \rightarrow k_j\) is added to \(E_{\text {prereq}}\).

Semantic relations are constructed based on co-occurrence patterns in shared learning resources. For each knowledge point \(k_i\), we collect its associated resource set \(R(k_i)\). For any two knowledge points \((k_i, k_j)\), we compute the semantic similarity \(\tilde{w}_{ij}\) using the Jaccard index:

$$\begin{aligned} \tilde{w}_{ij} = \frac{|R(k_i) \cap R(k_j)|}{|R(k_i) \cup R(k_j)|} \end{aligned}$$

(1)

If \(\tilde{w}_{ij} \ge \theta\), where \(\theta\) is an empirically selected threshold (set to 0.3 in this study), an undirected edge is added between \(k_i\) and \(k_j\), and \(\tilde{w}_{ij}\) is used as the edge weight.

To represent the overall graph structure, we define an adjacency matrix \({W} = [w_{ij}] \in \mathbb {R}^{N \times N}\) with elements defined as:

$$\begin{aligned} w_{ij} = {\left\{ \begin{array}{ll} 1, & \text {if } k_i \rightarrow k_j \text { is a prerequisite relation} \\ \tilde{w}_{ij}, & \text {if } k_i \leftrightarrow k_j \text { is a semantic relation} \\ 0, & \text {otherwise} \end{array}\right. } \end{aligned}$$

(2)

This formulation ensures that W is asymmetric for prerequisite edges (\(w_{ij} \ne w_{ji}\)) and symmetric for semantic edges (\(w_{ij} = w_{ji}\)), thereby preserving both hierarchical and associative structural information within the graph.

To incorporate learning resources into the graph structure, we define a resource set \(R = \{r_1, r_2, \dots , r_M\}\), where M is the total number of learning resources. We then construct a binary resource-knowledge mapping matrix \({M} = [m_{rk}] \in \mathbb {R}^{M \times N}\), with each element defined as:

$$\begin{aligned} m_{rk} = {\left\{ \begin{array}{ll} 1, & \text {if resource } r \text { is annotated with knowledge point } k \\ 0, & \text {otherwise} \end{array}\right. } \end{aligned}$$

(3)

Through matrix M, each resource is structurally mapped to one or more knowledge points. This mapping integrates the content-level structure of learning resources into the graph topology, enabling downstream models to jointly leverage graph-based knowledge structure and resource semantics for personalized path recommendation.

Learner knowledge state modeling method

Following the construction of the knowledge graph and the structuring of learning resources, it is essential for the system to dynamically perceive each learner’s mastery of individual knowledge points in order to recommend personalized and effective learning paths. To this end, we propose a graph-based knowledge state modeling method that integrates structural dependencies and feedback-driven updates, enabling real-time tracking of learners’ cognitive progress during the English learning process.

Let the knowledge graph contain N knowledge points. At any given time t, a learner’s knowledge state is represented as a vector:

$$\begin{aligned} s(t) = \left[ s_1(t), s_2(t), \dots , s_N(t)\right] ^T \end{aligned}$$

(4)

where \(s_i(t) \in [0,1]\) indicates the learner’s mastery level of the i-th knowledge point at time t. The initial state s(0) is computed based on a diagnostic entrance test administered at registration. This test covers all knowledge points defined in the graph. The system evaluates each knowledge point based on question accuracy and normalizes the scores to the interval [0, 1], yielding the initial mastery values.

During actual learning, every interaction between the learner and the system triggers an update of the knowledge state vector. At time t, for a given learning interaction, the system first identifies the involved knowledge point set C(t) based on the corresponding learning resource. This set is obtained using the resource-to-knowledge mapping matrix described in section* 3.1. For each \(k_i \in C(t)\), we define an importance weight \(\eta _i(t) \in [0,1]\) that reflects the relevance of the knowledge point within the current resource.

Specifically, if \(k_i\) is annotated as the primary knowledge point of the resource, then \(\eta _i(t) = 1\). For secondary knowledge points, the weight is determined based on their pedagogical significance: if \(k_i\) serves as a core supporting concept, then \(\eta _i(t) = 0.6\); if it appears as a peripheral or supplementary item, then \(\eta _i(t) = 0.4\). These weights are pre-defined by domain experts during the resource tagging process and can be adjusted as needed during system deployment.

When a learner provides feedback (e.g., answers a quiz question), the system adjusts the mastery score of the affected knowledge point accordingly. If the response is correct, the score increases; if incorrect, it decreases. The direct change in mastery is defined as:

$$\begin{aligned} \Delta s_i^{\text {(direct)}}(t) = {\left\{ \begin{array}{ll} \alpha ^+ (1 – s_i(t)) \cdot \eta _i(t), & \text {if correct} \\ -\alpha ^- s_i(t) \cdot \eta _i(t), & \text {if incorrect} \end{array}\right. } \end{aligned}$$

(5)

where, \(\alpha ^+\) and \(\alpha ^-\) denote the positive and negative update rates, set to 0.35 and 0.25 respectively. This asymmetric update strategy ensures high responsiveness to unmastered content and stability for already mastered items. Since a learning interaction may involve multiple knowledge points, the above update rule is applied to each relevant node in parallel.

To further capture structural dependencies between knowledge points, we introduce a graph-based propagation mechanism29,30. Based on the edge weights \(w_{kj}\) in the knowledge graph, the change in mastery is propagated from the directly affected nodes to their neighbors. For any node j, the propagated change is given by:

$$\begin{aligned} \Delta s_j^{\text {(prop)}}(t) = \sum _{k \in C(t)} w_{kj} \cdot \Delta s_k^{\text {(direct)}}(t) \end{aligned}$$

(6)

This propagation reflects the interdependence of concept mastery across the graph. On one hand, correct mastery of prerequisite concepts promotes downstream learning (“scaffolding effect”); on the other hand, repeated errors in fundamental knowledge can inhibit progress in related nodes (“learning bottleneck”).

The knowledge state is finally updated using the following formulation:

$$\begin{aligned} s_j(t+1) = \sigma \left( s_j(t) + \Delta s_j^{\text {(direct)}}(t) + \Delta s_j^{\text {(prop)}}(t)\right) \end{aligned}$$

(7)

The compression function \(\sigma (\cdot )\) adopts the sigmoid form to ensure that all updated scores remain within the valid interval [0, 1]. For knowledge points that are not directly involved in the current interaction, we introduce a forgetting mechanism to simulate cognitive decay over time. Specifically, if knowledge point \(k_j\) has not been revisited for a period \(\Delta t \ge 3\) days, its mastery decays exponentially:

$$\begin{aligned} s_j(t+1) = s_j(t) \cdot \exp (-\mu \cdot \Delta t) \end{aligned}$$

(8)

The forgetting rate \(\mu\) is set to 0.08 based on statistical analysis of real user behavior data, reflecting empirically observed temporal decay patterns during the learning process.

Reinforcement learning-based modeling for personalized learning path recommendation

After modeling the learner’s knowledge state, we formulate the personalized learning path recommendation task as a Markov Decision Process (MDP) to enable dynamic optimization. The MDP is defined by a five-tuple \((S, A, P, R, \gamma )\), where each component is specified as follows.

The state space S represents the learner’s knowledge state s(t) at time t, consisting of mastery levels over all knowledge points in the graph, i.e.,

$$\begin{aligned} s(t) = [s_1(t), s_2(t), \dots , s_N(t)]^T. \end{aligned}$$

(9)

The action space A denotes the set of learning resources that the system can recommend. Each action \(a \in A\) maps to one or more knowledge points and is subject to prerequisite constraints defined by the current knowledge state. The mapping from actions to knowledge points is provided by the resource-to-knowledge matrix defined in section* 3.1.

The state transition function \(P(s’|s, a)\) models the evolution of knowledge states. After the learner engages with resource a under state s, the system updates the learner’s knowledge state to \(s’\) using the knowledge update mechanism described in section* 3.2.

The reward function R(s, a) quantifies the immediate benefit of recommending action a in state s. To capture learning gains, we define the reward based on the observed improvement in knowledge mastery. Specifically, the mastery change vector is defined as \(\Delta s(t) = s(t+1) – s(t)\), and the reward signal is computed as the weighted sum of all affected knowledge points:

$$\begin{aligned} r_t = \sum _{i \in C(t)} \eta _i(t) \cdot \Delta s_i(t), \end{aligned}$$

(10)

where C(t) denotes the set of knowledge points associated with the selected resource a, \(\eta _i(t)\) is the importance weight of knowledge point i (see section* 3.2), and \(\Delta s_i(t)\) is the mastery gain for that point. In our implementation, we set \(\eta _i(t) = 1.0\) for primary concepts, \(\eta _i(t) = 0.6\) for secondary (supporting) concepts, and \(\eta _i(t) = 0.4\) for peripheral concepts based on expert annotation. This reward formulation enables direct evaluation of each resource’s contribution to learning progress.

Under this framework, we introduce a parameterized policy \(\pi _\theta (a|s)\) that learns to select optimal actions given a learner’s current state. To train this policy, we collect transition samples \((s, a, r, s’)\) from logged learner-system interactions. The training process is based on Proximal Policy Optimization (PPO), which aims to maximize the expected cumulative reward:

$$\begin{aligned} \mathbb {E}_{\pi _\theta } \left[ \sum _{t=0}^{T} \gamma ^t r_t\right] , \end{aligned}$$

(11)

where \(\gamma\) is the discount factor that balances short-term and long-term learning objectives. We set \(\gamma = 0.99\) in our experiments to promote long-term learning benefits.

During each training iteration, the system samples interaction trajectories from historical logs and updates the policy network using the ratio between the current and previous policies. The clipped surrogate loss function is defined as:

$$\begin{aligned} L(\theta ) = \mathbb {E}_t\left[ \min \left( r_t(\theta ) \cdot \hat{A}_t,\; \text {clip}\left( r_t(\theta ), 1 – \epsilon , 1 + \epsilon \right) \cdot \hat{A}_t\right) \right] , \end{aligned}$$

(12)

where \(r_t(\theta )\) denotes the probability ratio of the current policy to the old policy at action \(a_t\), \(\hat{A}_t\) is the estimated advantage at time t, and \(\epsilon\) is a hyperparameter that constrains the update range to ensure training stability. We set \(\epsilon = 0.2\). This is the clipped surrogate of Proximal Policy Optimization (PPO), which stabilizes on-policy updates via ratio clipping (and optionally KL-based early stopping).31

At each step t, the system first forms a prerequisite-feasible and de-duplicated candidate set \(\mathscr {A}_t\) from the knowledge graph. The Q-network then computes Q(s(t), a) for \(a \in \mathscr {A}_t\) and prunes the set to \(\widetilde{\mathscr {A}}_t\) by discarding actions below a data-driven threshold (e.g., Top-M or a quantile \(\tau\)). Given the pruned set \(\widetilde{\mathscr {A}}_t\), the PPO policy \(\pi _\theta (a \mid s(t))\) selects the final action a(t). After delivery, the reward r(t) is computed and the state is updated to \(s(t{+}1)\) via the gain–propagation–forgetting mechanism. We log \((s(t), a(t), r(t), s(t{+}1))\) and periodically update both the value and policy components using the same replay buffer, as detailed below.

Reinforcement learning strategy training and recommendation generation

To complement the policy-gradient-based framework introduced in section* 1.3, this section* adopts a value-based reinforcement learning approach grounded in Q-learning. While the previous section* directly modeled a stochastic policy \(\pi _\theta (a|s)\) for action sampling, here we approximate a deterministic state–action value function \(Q(s(t), a; \theta )\) to estimate the long-term utility of each candidate action. This formulation enables greedy action selection and supports efficient value-driven learning.

Specifically, we extract transition tuples \((s(t), a(t), r(t), s(t+1))\) from historical records, where s(t) denotes the learner’s knowledge state at time t, a(t) is the recommended learning resource, r(t) is the feedback-based reward, and \(s(t+1)\) is the updated knowledge state. These samples are stored in a replay buffer \(\mathscr {D}\) to support batch-based learning.

The Q-network takes the current knowledge state s(t) as input and outputs the estimated state–action values \(Q(s(t), a; \theta )\) for all possible actions \(a \in A\), where \(\theta\) denotes the current network parameters. The training objective is to minimize the temporal difference (TD) loss:

$$\begin{aligned} L(\theta ) = \mathbb {E}_{(s(t), a(t), r(t), s(t{+}1)) \sim \mathscr {D}} \Big [ \big ( r(t) + \gamma \max _{a’} Q(s(t{+}1), a’; \theta ^{-}) – Q(s(t), a(t); \theta ) \big )^2 \Big ], \end{aligned}$$

(13)

where \(\theta ^{-}\) represents the parameters of the target network used for stabilizing training, and \(\gamma\) is the discount factor (set to \(\gamma = 0.95\) in our implementation). We optimize this objective on mini-batches drawn from a replay buffer and update the target network periodically.This objective follows standard Q-learning with tabular convergence guarantees32. In the deep setting, we adopt the DQN-style training with experience replay and a target network33.

Once training is complete, the system enters the recommendation phase. At each time step t, the system proceeds as follows:

-

(i)

it forms a prerequisite-feasible and de-duplicated candidate set \(A_t \subseteq A\) from the knowledge graph;

-

(ii)

the Q-network computes Q(s(t), a) for \(a \in A_t\) and produces a pruned candidate set \(\widetilde{A}_t \subseteq A_t\) by discarding items using a data-driven rule (e.g., Top-M or a quantile threshold); and

-

(iii)

the PPO policy selects the final action on the pruned set:

$$\begin{aligned} a(t) = \arg \max _{a \in \widetilde{A}_t} \,\pi _\theta \big (a \mid s(t)\big ). \end{aligned}$$

(14)

After selecting action a(t), the learner engages with the corresponding learning resource, and the system updates the knowledge state to \(s(t{+}1)\) using the mechanism defined in Section 3.2. This state–action–state transition process continues iteratively until a stopping condition is met, such as reaching a target mastery level or a maximum path length. The resulting sequence of actions forms the personalized learning path \(P = [a(1), a(2), \dots , a(T)]\).

This prune–then–select rule ensures both structural validity and efficiency: prerequisite-violating or already-recommended items are filtered when building \(A_t\), low-value items are removed by Q-based pruning to obtain \(\widetilde{A}_t\), and PPO optimizes the final choice within this reduced, pedagogically coherent action space.

link