Real-time driver drowsiness detection using transformer architectures: a novel deep learning approach

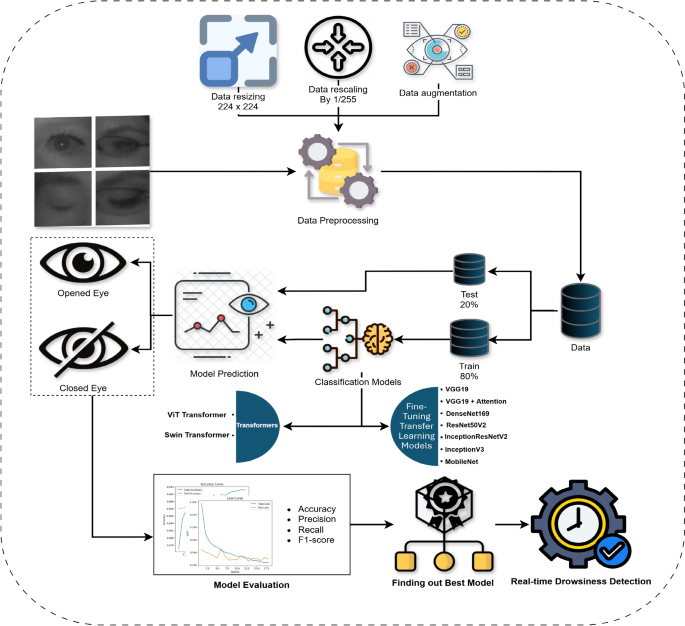

This paper introduces a systematic methodology for real-time driver drowsiness detection that uses state-of-the-art deep learning techniques to classify eye states as “Open-Eyes” or “Close-Eyes.” The proposed framework addresses limitations of existing approaches by integrating data preprocessing, transformer-based architectures, and transfer learning models. The methodology begins with data preparation, including image resizing, normalization, and augmentation, to improve the models' ability to generalize. The MRL, NTHU-DDD, and CEW datasets are used, each with an 80-20 split for training and testing, to support a robust evaluation of the models. A diverse set of deep learning architectures, including Vision Transformer (ViT), Swin Transformer, and fine-tuned transfer learning models such as VGG19, DenseNet169, ResNet50V2, InceptionResNetV2, InceptionV3, and MobileNet, are trained and evaluated on accuracy, precision, recall, and F1-score. The best-performing model is then deployed in a real-time system that uses Haar Cascade classifiers for face and eye detection, combined with a drowsiness scoring mechanism that triggers an alarm when prolonged eye closure is detected. This end-to-end approach not only achieves high accuracy but also provides a practical and scalable solution for improving road safety. Figure 1 summarizes how the driver drowsiness detection model works and outlines the sequence of steps followed in this research.

The workflow architecture.

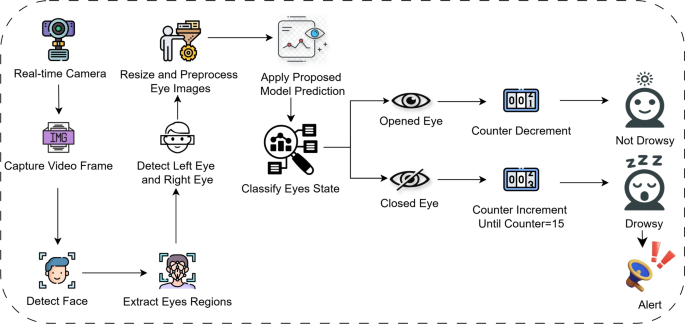

To evaluate drowsiness in real time, video frames are processed and classified as they arrive: Haar Cascade classifiers first detect the driver's face and then the left and right eyes within it. The extracted eye regions are preprocessed to match the model's input requirements, including resizing and normalization. The model with the optimal parameters obtained from the training workflow then classifies each eye as open or closed. The drowsiness score starts at zero and increases by one for every frame in which closed eyes are detected; when the predictions indicate that the eyes are open, the score decreases by one. Once the score reaches a threshold of 15, which corresponds to eye closure sustained over roughly 15 frames, an alarm is triggered to alert the driver. The real-time detection process is shown in Fig. 2.

Real-time detection architecture.
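The scoring rule can be sketched as a small per-frame state machine. This is a minimal sketch, assuming one boolean "eyes closed" prediction per frame and an alarm whenever the score reaches 15; the clamp at zero and the names `update_score` and `alarm_frames` are illustrative assumptions, not the authors' code.

```python
ALARM_THRESHOLD = 15  # score at which the alarm fires (value from the text)


def update_score(score: int, eyes_closed: bool) -> int:
    """Apply the +1 (closed) / -1 (open) drowsiness-score rule for one frame."""
    if eyes_closed:
        return score + 1
    # Assumption: the score never drops below its starting value of zero.
    return max(score - 1, 0)


def alarm_frames(predictions: list[bool], threshold: int = ALARM_THRESHOLD) -> list[int]:
    """Return the indices of the frames at which the alarm would be triggered."""
    score = 0
    triggered = []
    for frame_idx, eyes_closed in enumerate(predictions):
        score = update_score(score, eyes_closed)
        if score >= threshold:
            triggered.append(frame_idx)
    return triggered


if __name__ == "__main__":
    # 10 closed frames, 3 open frames, then 8 closed frames.
    frames = [True] * 10 + [False] * 3 + [True] * 8
    print(alarm_frames(frames))  # [20]
```

Because open-eye frames only decrement the score, brief blinks are absorbed, while sustained closure accumulates until the threshold is crossed.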

Dataset description

Selecting appropriate datasets is crucial in driver drowsiness detection, because the data determine how robustly a model can be calibrated and how well it generalizes. Every dataset comes with its own challenges that affect the system's detection accuracy and performance. These include variations in lighting, facial occlusions from glasses or other objects, head movements, and subtle but informative behaviors such as slow blinking, yawning, and other signs of sleepiness. Understanding these dataset-specific issues is essential for model development, preprocessing strategies, and evaluation. The key challenges associated with the NTHU-DDD, CEW, and MRL datasets used in this study are summarized in Table 3.

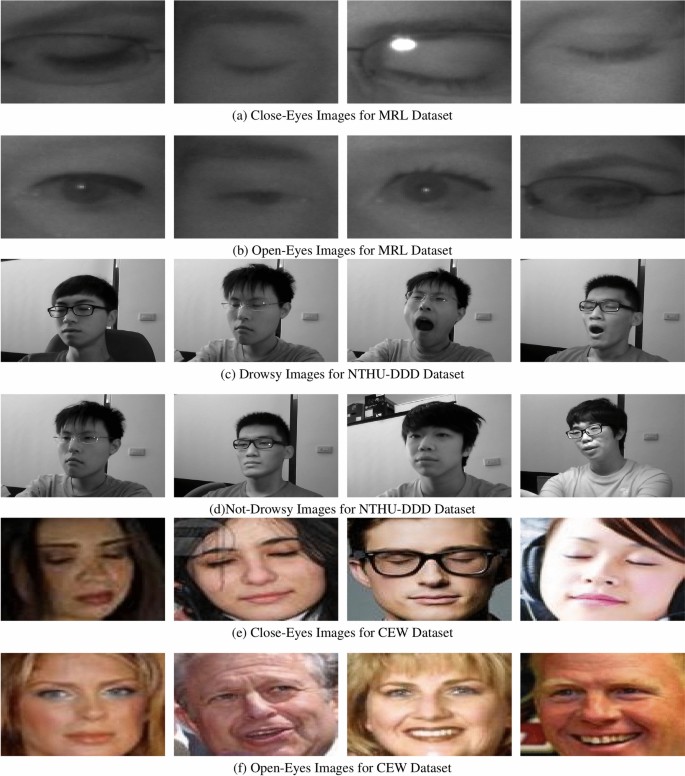

The MRL Eye Dataset28 is used for the experiments. This dataset has been widely used in other research, notably for drowsy driver detection tasks that focus on eye-state recognition. It contains 84,898 samples divided into two classes, Open-Eyes and Close-Eyes: 42,952 Open-Eyes images and 41,946 Close-Eyes images, so the two classes are almost equally balanced. The images span different resolutions, lighting conditions, and eye orientations, which makes the dataset challenging for building effective classification models. These factors suit our research: the dataset's size and diversity allow deep learning models to learn to detect drowsiness in real-life conditions.

The NTHU-DDD dataset is a comprehensive and diverse collection of driver videos recorded in real vehicles in daytime and nighttime conditions. It includes annotated behaviors such as yawning, slow blinking, and nodding, as well as combinations of these sleepy states, recorded at various facial angles and with head movements. This dataset allowed us to test a complete range of sleepy behaviors and to assess performance under operating conditions representative of real-world driving. The dataset contains 66,521 grayscale images at 640 \(\times\) 480 resolution divided into two classes, drowsy and not-drowsy: 36,030 drowsy images and 30,491 not-drowsy images.

The Closed Eyes in the Wild (CEW) dataset contains facial images with closed and open eyes collected in unconstrained scenarios. It shows large variation in facial orientation, expression, and occlusions such as glasses and hair. In our research, CEW served as a cross-validation reference to measure how well the models generalize to in-the-wild data, simulating real-world deployment scenarios in which changing visual features must be handled correctly. The CEW dataset comprises 27,200 images of closed and open eyes. Unlike the MRL dataset, CEW consists of full-face images, which pose additional challenges in the form of occlusions, varied lighting, head pose variation, and motion blur. These conditions make the dataset highly representative of real-world driving, where environmental conditions change unpredictably.

Figures 3 and 4 show the data distribution and sample images from the datasets. Each dataset is divided into two subsets, one for training and one for testing: 80% of the data is allocated for training and the remaining 20% is reserved for testing.

Dataset distribution across MRL, NTHU-DDD, and CEW.
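A minimal sketch of the 80/20 split, assuming a simple seeded random shuffle; the text does not state whether the split was stratified by class, and the function name `split_80_20` and the seed are illustrative.

```python
import random


def split_80_20(samples, train_frac=0.8, seed=42):
    """Shuffle a copy of `samples` and cut it into train and test lists."""
    items = list(samples)
    random.Random(seed).shuffle(items)  # seeded so the split is reproducible
    cut = int(len(items) * train_frac)
    return items[:cut], items[cut:]


if __name__ == "__main__":
    # MRL class counts from the text: 42,952 open and 41,946 closed images.
    labels = ["open"] * 42_952 + ["closed"] * 41_946
    train, test = split_80_20(labels)
    print(len(train), len(test))  # 67918 16980
```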

Data preprocessing

This subsection explains the need for data preprocessing, a core step in both machine learning and deep learning that reduces the risk of overfitting and helps the model generalize. Data preprocessing refers to any operation that prepares the input data for further processing, and it makes applications such as machine learning, deep learning, data mining, and computer vision more effective. In this experiment, we apply resizing and normalization. All images must have the same size so that the model receives inputs of identical dimensions; in our experiments, all images were resized to \(224 \times 224\). Learning is further improved by keeping pixel values on a small, consistent scale: each pixel is normalized to the range [0, 1] by dividing its value by 255.
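The two preprocessing steps can be sketched in plain Python on a grayscale image stored as a list of rows. Nearest-neighbour resizing is an assumption made to keep the sketch self-contained; the text does not name the interpolation method, and practical pipelines usually call a library resize (for example in OpenCV or PIL).

```python
TARGET_SIZE = 224  # input size used for all models in the text


def resize_nearest(img, out_h=TARGET_SIZE, out_w=TARGET_SIZE):
    """Nearest-neighbour resize of a 2-D list of pixel values."""
    in_h, in_w = len(img), len(img[0])
    return [
        [img[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]


def normalize(img):
    """Map 8-bit pixel values in [0, 255] to floats in [0, 1]."""
    return [[px / 255.0 for px in row] for row in img]


if __name__ == "__main__":
    eye = [[0, 255], [128, 64]]  # toy 2 x 2 grayscale eye crop
    x = normalize(resize_nearest(eye))
    print(len(x), len(x[0]), x[0][0], x[0][-1])  # 224 224 0.0 1.0
```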

To prevent overfitting and make the model more generalizable, data augmentation is applied to the MRL dataset during preprocessing. Several transformations are applied to the training images that change their appearance while preserving their main characteristics. ShiftScaleRotate randomly shifts, scales, and rotates images, making the model robust to changes in eye position. HorizontalFlip mirrors images horizontally, preparing the model for different viewing angles. RandomBrightnessContrast varies image brightness and contrast, allowing the model to work under different lighting conditions. The rotation_range, width_shift_range, and height_shift_range settings slightly rotate images and shift them horizontally and vertically to simulate real-life movement of the head and eyes. These augmentations made the model more robust at distinguishing Open-Eyes from Close-Eyes states, improving real-time drowsiness detection.
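The transforms named above come from augmentation libraries (ShiftScaleRotate, HorizontalFlip, and RandomBrightnessContrast are Albumentations transforms; the *_range settings are Keras-style). As a dependency-free illustration, the sketch below reimplements three of the operations on a normalized grayscale image; the probabilities and ranges in `random_augment` are hypothetical, and rotation and scaling are omitted for brevity.

```python
import random


def hflip(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def shift(img, dx, dy, fill=0.0):
    """Translate by (dx, dy) pixels, filling uncovered pixels with `fill`."""
    h, w = len(img), len(img[0])
    return [
        [img[r - dy][c - dx] if 0 <= r - dy < h and 0 <= c - dx < w else fill
         for c in range(w)]
        for r in range(h)
    ]


def brightness_contrast(img, alpha, beta):
    """Scale contrast by alpha, add brightness beta, and clip to [0, 1]."""
    return [[min(1.0, max(0.0, alpha * px + beta)) for px in row] for row in img]


def random_augment(img, rng):
    """Apply each transform with probability 0.5 (hypothetical settings)."""
    if rng.random() < 0.5:
        img = hflip(img)
    if rng.random() < 0.5:
        img = shift(img, rng.randint(-2, 2), rng.randint(-2, 2))
    if rng.random() < 0.5:
        img = brightness_contrast(img, rng.uniform(0.8, 1.2), rng.uniform(-0.2, 0.2))
    return img
```

Augmentation is applied on the fly to training images only, so each epoch sees slightly different versions of the same eyes.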

Region of interest selection

This study uses Haar cascade classifiers to detect, locate, and extract the face and eye regions as a preprocessing step for drowsiness detection. The Haar cascade is a machine-learning technique in which simple rectangular (Haar-like) features are computed efficiently from an integral image and then selected and combined into a classifier using AdaBoost34. The classifier slides a detection window over the image at multiple scales and evaluates these predefined features at each position. For faces and eyes, it looks for characteristic intensity patterns: the bridge of the nose is lighter than the eyes, the eyes are darker than the cheeks, and the forehead is lighter than the eyes. The face is extracted first, and the eye regions are then extracted from within the detected face box. Restricting the eye search to the face region reduces both the computational cost and the likelihood of false positives.
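The integral image is what makes Haar-like features cheap: any rectangle sum costs four lookups. The sketch below shows that arithmetic and one two-rectangle feature (bright region above a dark one). It illustrates the building block only; trained cascades such as those loaded with OpenCV's `cv2.CascadeClassifier` evaluate many such features in stages, and the function names here are illustrative.

```python
def integral_image(img):
    """Summed-area table with a zero row and column prepended.

    ii[r][c] holds the sum of img[0..r-1][0..c-1], so any rectangle sum
    needs only four lookups regardless of its size.
    """
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for r in range(h):
        row_sum = 0
        for c in range(w):
            row_sum += img[r][c]
            ii[r + 1][c + 1] = ii[r][c + 1] + row_sum
    return ii


def rect_sum(ii, top, left, height, width):
    """Sum of the pixels inside a rectangle, in constant time."""
    bottom, right = top + height, left + width
    return ii[bottom][right] - ii[top][right] - ii[bottom][left] + ii[top][left]


def two_rect_feature(ii, top, left, height, width):
    """Upper half minus lower half of a window.

    Large positive values mean a bright region above a dark one, such as
    a forehead above the eyes.
    """
    half = height // 2
    upper = rect_sum(ii, top, left, half, width)
    lower = rect_sum(ii, top + half, left, half, width)
    return upper - lower


if __name__ == "__main__":
    patch = [[200, 200], [20, 20]]  # bright row above a dark row
    print(two_rect_feature(integral_image(patch), 0, 0, 2, 2))  # 360
```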

The shape of a human eye can be described by a set of points whose relative positions define how open or closed the eye is. To measure eye openness, it is therefore essential to know which 2D landmarks around the eye to use35. These are the six 2D facial landmarks \(P_1\) to \(P_6\) of the eye shown in Fig. 5. The eye state, open or closed, is determined by calculating the Eye Aspect Ratio (EAR) in Eq. (1): the two vertical distances between the upper and lower eyelid landmarks are summed in the numerator, and the denominator is twice the horizontal distance between the eye corners. A lower EAR indicates a closed eye and a higher EAR an open eye; an eye with an EAR below 0.25 is classified as closed, and one with an EAR above it as open.

$$\begin{aligned} EAR = \frac{\Vert P_2 - P_6 \Vert + \Vert P_3 - P_5 \Vert }{2 \times \Vert P_1 - P_4 \Vert } \end{aligned} \tag{1}$$
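Eq. (1) translates directly into code. This sketch assumes the landmarks are already available as 2D points ordered as in Fig. 5 (\(P_1\) and \(P_4\) at the eye corners, \(P_2\) and \(P_3\) on the upper lid, \(P_5\) and \(P_6\) on the lower lid); the landmark detector itself is not shown, and the coordinates in the example are made up.

```python
import math

EAR_CLOSED_THRESHOLD = 0.25  # threshold given in the text


def eye_aspect_ratio(p1, p2, p3, p4, p5, p6):
    """Eq. (1): sum of the two vertical distances over twice the horizontal one."""
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def is_closed(ear, threshold=EAR_CLOSED_THRESHOLD):
    """An EAR below the threshold is treated as a closed eye."""
    return ear < threshold


if __name__ == "__main__":
    open_eye = [(0, 0), (2, 1), (4, 1), (6, 0), (4, -1), (2, -1)]
    ear = eye_aspect_ratio(*open_eye)  # (2 + 2) / (2 * 6) = 1/3
    print(round(ear, 3), is_closed(ear))  # 0.333 False
```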

After the face is detected, a Haar cascade classifier trained for eyes detects the eye regions, and a deep learning classifier then classifies the extracted eye images. The model predicts whether each eye is open or closed, and each prediction updates the overall drowsiness score. If the score accumulated from both eyes exceeds the threshold, indicating that the eyes have remained closed for a set time, an alarm is sounded. Combining the Haar cascade classifier for Region of Interest (ROI) selection with deep learning for classification proved effective and accurate for real-time drowsiness detection.

Ethical statement

The human face visible in Fig. 27 is that of one of the authors. The author has provided informed consent for the use of their image in this manuscript. All methods and procedures involving human subjects were carried out in accordance with relevant guidelines and regulations. No institutional or licensing committee approval was required for the use of the author’s image, as it was voluntarily provided for research purposes.

Transformer architectures

Transformers have driven major advances in deep learning, particularly in natural language processing and image processing. They were introduced in the paper ‘Attention Is All You Need’36, whose architecture replaced recurrent and convolutional structures with self-attention, allowing a model to relate every element of a sequence (and, in later vision models, every part of an image) to every other element directly.

These properties make transformers well suited to image processing, because they can model dependencies between spatially distant image regions. Unlike CNNs, whose convolutions operate on local neighborhoods, vision transformers apply self-attention across the whole image, which has allowed them to outperform other network models on many tasks such as image classification and object localization.
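The core operation is scaled dot-product attention, \(\mathrm{softmax}(QK^\top/\sqrt{d_k})V\), from ‘Attention Is All You Need’. The sketch below is a minimal single-head version in plain Python; real models use batched tensor libraries, learned query/key/value projections, and multiple heads, all of which are omitted here.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(Q, K, V):
    """Single-head attention: softmax(Q K^T / sqrt(d_k)) V.

    Q: list of query vectors; K and V: lists of key and value vectors of
    equal count. Every query attends to every key, which is what lets a
    transformer relate distant image patches directly.
    """
    d_k = len(K[0])
    outputs = []
    for q in Q:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        outputs.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return outputs
```

With a zero query every key scores equally, so the output is the plain average of the value vectors; a query aligned with one key concentrates almost all weight on that key's value.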

The Swin Transformer extends ViT with a shifted-window approach and a hierarchical, multi-stage design that speeds up processing and makes it more efficient on large images. It addresses a limitation of ViT, whose global self-attention becomes expensive as the number of patches grows, by computing self-attention within local windows that are shifted between successive layers; this reduces computational cost while maintaining strong performance on vision tasks.

ViT transformer

ViT was among the first transformer-based models used for image classification37. Whereas CNNs use convolutional layers to analyze image features hierarchically, ViT divides an image into fixed-size patches, embeds each patch linearly, and processes the resulting sequence with a Transformer encoder38.
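Patch extraction can be sketched as follows for a single-channel \(224 \times 224\) image and the 16-pixel patches of google/vit-base-patch16-224. The learned linear projection and positional embeddings that follow are not shown; for an RGB input each flattened patch would hold \(16 \times 16 \times 3 = 768\) values instead of 256.

```python
PATCH = 16  # patch size of google/vit-base-patch16-224


def patchify(img, patch=PATCH):
    """Split an H x W single-channel image into flattened, non-overlapping patches.

    Patches are returned in row-major order, the sequence order the
    Transformer encoder sees once each patch is linearly embedded.
    """
    h, w = len(img), len(img[0])
    assert h % patch == 0 and w % patch == 0, "image size must be a multiple of the patch size"
    patches = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            patches.append([img[r][c] for r in range(top, top + patch)
                            for c in range(left, left + patch)])
    return patches


if __name__ == "__main__":
    img = [[r * 224 + c for c in range(224)] for r in range(224)]
    seq = patchify(img)
    # 14 x 14 = 196 patch tokens; ViT prepends one [CLS] token, giving 197.
    print(len(seq), len(seq[0]), len(seq) + 1)  # 196 256 197
```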

ViT has several main components. Input images are split into patches, typically 16×16 pixels, which are linearly embedded, and positional embeddings are added to retain spatial information. The Transformer encoder, composed of stacked multi-head self-attention layers and feed-forward networks, processes these patch embeddings so that spatial information from the whole image is used. Finally, the classification head, a fully connected layer applied to the learned representation, assigns the image to the Open-Eyes or Close-Eyes class. ViT has proven effective on large-scale image classification tasks12. In this work, ViT is adapted so that, instead of directly determining whether a driver is drowsy, it classifies whether the driver's eyes are open or closed, which serves as the indicator of drowsiness.

Fine-tuning on the MRL dataset starts by resizing images to 224\(\times\)224 pixels, and random horizontal flipping is applied to improve training. Training starts from the pretrained google/vit-base-patch16-224 weights, with the classification head adapted to the two eye states. The model is optimized with AdamW at a learning rate of 5e-5 using a CrossEntropyLoss, and early stopping with a patience of five epochs is applied. Because the classes are balanced and the images cover multiple orientations, no class-bias correction or masking is used. A batch size of 32 is used over a maximum of 30 epochs, with separate training and test splits. Loss and accuracy were monitored on both the training and test sets within a single training session, and training stops as soon as the early-stopping criterion is met, which limits overfitting and keeps test performance high.
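The early-stopping rule with a patience of five epochs can be sketched as follows. Monitoring validation loss and the `min_delta` parameter are assumptions; the text states only the patience, the 30-epoch budget, and the batch size. The class and function names are illustrative.

```python
class EarlyStopping:
    """Stop training after `patience` epochs without improvement in validation loss."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def stopping_epoch(val_losses, max_epochs=30, patience=5):
    """Return the 0-based epoch at which training stops (or the last epoch run)."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(val_losses[:max_epochs]):
        if stopper.step(loss):
            return epoch
    return min(len(val_losses), max_epochs) - 1
```

In the first test sequence below, the loss stops improving after epoch 2, so training halts at epoch 7 and never sees the later improvement; patience trades that risk against wasted epochs.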

The proposed ViT model for drowsiness detection splits input images into smaller patches, projects each patch linearly, and adds positional embeddings, which preserve spatial information. The transformed patches then pass through several transformer encoder layers, where multi-head self-attention captures global dependencies and normalization layers improve the model's robustness. An MLP head then classifies the image as Open-Eyes or Close-Eyes. In addition, Class Activation Mapping (CAM) is used to make the model's decisions easier to interpret by highlighting the image regions that contributed most to each prediction. The block diagram of this structure is presented in Fig. 6, which depicts the stages of feature extraction and classification.

The ViT transformer architecture.

Swin transformer

The Swin Transformer architecture involves several components. First, the image is split into non-overlapping square patches, and a Patch Embedding Layer maps these patches into higher-dimensional vectors. The Swin Transformer is hierarchical and captures both local and global dependencies through shifted-window self-attention39. Self-attention is restricted to each window, and the window partition is shifted between successive layers so that the model can learn cross-window interactions. Patch merging layers downsample the feature map, allowing the model to learn features at deeper, coarser scales. Finally, a classifier head makes the final decision, classifying the grayscale image as Open-Eyes or Close-Eyes. The Swin Transformer performs well on image classification problems involving high-resolution images40, and in this work it is tuned to detect whether a person's eyes are open or closed.
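Window partitioning and the cyclic shift can be sketched on a grid of token indices. The sizes match swin-tiny-patch4-window7-224 (a 56×56 token grid at the first stage and 7×7 windows). The attention mask Swin applies so that shifted windows do not attend across wrapped-around borders is omitted, and the function names are illustrative.

```python
WINDOW = 7  # window size of swin-tiny-patch4-window7-224
GRID = 56   # 224 / 4 tokens per side at the first stage


def window_partition(grid, window=WINDOW):
    """Split a square token grid into non-overlapping window x window groups."""
    n = len(grid)
    assert n % window == 0
    return [
        [grid[r][c] for r in range(top, top + window) for c in range(left, left + window)]
        for top in range(0, n, window)
        for left in range(0, n, window)
    ]


def cyclic_shift(grid, shift):
    """Roll the grid up and left by `shift` tokens (like torch.roll with negative shifts)."""
    n = len(grid)
    return [[grid[(r + shift) % n][(c + shift) % n] for c in range(n)] for r in range(n)]


def attention_pairs_global(tokens):
    """Query-key pairs when every token attends to every token (ViT-style)."""
    return tokens * tokens


def attention_pairs_windowed(tokens, window=WINDOW):
    """Query-key pairs when attention is restricted to window x window groups."""
    per_window = window * window
    return (tokens // per_window) * per_window ** 2
```

At the first stage there are 3,136 tokens: global attention would score 9,834,496 query-key pairs, whereas 64 windows of 49 tokens need 153,664, a 64-fold reduction that grows with image size.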

Each image, labeled Open-Eyes or Close-Eyes, is passed through a model initialized from the Hugging Face SwinForImageClassification swin-tiny-patch4-window7-224 checkpoint and further adapted for binary classification. The classification head is replaced with a custom head consisting of a dropout layer, to reduce overfitting, followed by a linear projection layer. The training images are augmented with random flips and changes in brightness and contrast, exposing the model to a wider variety of inputs. The model is trained with the AdamW optimizer and a cross-entropy loss function, which streamlines fine-tuning. Early stopping on the validation loss ensures that the model trains efficiently without overfitting, and Stochastic Weight Averaging (SWA) is applied to improve generalization and overall performance. After fine-tuning, the model is evaluated using accuracy and loss metrics to confirm that it distinguishes Open-Eyes from Close-Eyes efficiently.
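Stochastic Weight Averaging keeps an equal-weight running mean of weight snapshots taken during training. The sketch treats the weights as a flat list of floats; which epochs are averaged is not stated in the text, and `swa_update` is an illustrative name (in PyTorch the corresponding utility is `torch.optim.swa_utils.AveragedModel`).

```python
def swa_update(avg_weights, new_weights, n_averaged):
    """Fold one more weight snapshot into an equal-weight running average.

    avg_weights: current average (ignored when n_averaged == 0)
    new_weights: parameters of the latest snapshot
    n_averaged:  number of snapshots already in the average
    """
    if n_averaged == 0:
        return list(new_weights)
    return [a + (w - a) / (n_averaged + 1) for a, w in zip(avg_weights, new_weights)]


if __name__ == "__main__":
    snapshots = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
    avg = None
    for n, snap in enumerate(snapshots):
        avg = swa_update(avg, snap, n)
    print(avg)  # [3.0, 4.0]
```

The incremental form avoids storing every snapshot: after \(n+1\) updates the result equals the arithmetic mean of all snapshots seen so far.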

During fine-tuning, a set of hyperparameters is chosen for the Swin Transformer to maximize its effectiveness. A batch size of 32 is used for image processing during training and evaluation. Different learning rates are applied to the backbone and the classifier: 1e-5 for the backbone and 1e-3 for the classifier, so the final classification layers learn faster than the pretrained backbone. Training runs for up to 30 epochs, with an early-stopping mechanism that halts training after 5 epochs without improvement in validation loss, mitigating the risk of overfitting. The AdamW optimizer is used with a weight decay of 1e-4. The loss function is Label Smoothing Cross Entropy with a smoothing factor of 0.1, which reduces overfitting and improves generalization. The block diagram of the Swin Transformer is presented in Fig. 7, which depicts the stages of feature extraction and classification.

The swin transformer architecture.
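The label-smoothing cross-entropy with a smoothing factor of 0.1 can be sketched for a single example. It assumes the common convention (also used by the `label_smoothing` argument of PyTorch's `torch.nn.CrossEntropyLoss`) in which the target distribution is \((1-\varepsilon)\) times the one-hot label plus \(\varepsilon/K\) on every class; with two classes the true class receives 0.95 and the other 0.05.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_z for z in logits]


def label_smoothing_ce(logits, target, smoothing=0.1):
    """Cross-entropy against (1 - smoothing) * one_hot + smoothing / K."""
    k = len(logits)
    logp = log_softmax(logits)
    soft = [(1.0 - smoothing if i == target else 0.0) + smoothing / k for i in range(k)]
    return -sum(q * lp for q, lp in zip(soft, logp))
```

For logits [2, 0] with target 0 the smoothed loss is the ordinary loss plus 0.1: the 0.05 mass on the wrong class penalizes the two-unit margin, which discourages over-confident logits.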

CNN model architectures (fine-tuned transfer learning models)

VGG19

The Visual Geometry Group at Oxford University introduced the VGG architecture, which was extended into what is now known as VGG1941. Developed in 2014, VGG19 consists of 19 weight layers, 16 convolutional and 3 fully connected, together with strategically placed max-pooling layers that reduce the spatial dimensions while retaining important features. Like VGG16, VGG19 uses 2 \(\times\) 2 max-pooling kernels and 3 \(\times\) 3 convolutional filters throughout, which keeps the design simple and efficient. With its additional layers, VGG19 has a greater capacity for deep feature extraction, allowing it to perform complex image classification with high accuracy.
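The layer counts and the rationale for small filters can be checked with a little arithmetic. The configuration list below is the standard VGG19 layout (numbers are output channels of 3×3 convolutions, "M" is a 2×2 max-pool); the helper names are illustrative, and biases are ignored in the weight counts.

```python
VGG19_CFG = [64, 64, "M", 128, 128, "M", 256, 256, 256, 256, "M",
             512, 512, 512, 512, "M", 512, 512, 512, 512, "M"]


def layer_counts(cfg=VGG19_CFG, n_fc=3):
    """Return (convolutional layers, total weight layers)."""
    n_conv = sum(1 for v in cfg if v != "M")
    return n_conv, n_conv + n_fc


def output_size(cfg=VGG19_CFG, input_size=224):
    """Spatial size after the convolutional stack (padded 3x3 convs keep the size)."""
    size = input_size
    for v in cfg:
        if v == "M":
            size //= 2  # each 2x2, stride-2 max-pool halves height and width
    return size


def receptive_field_3x3(n_layers):
    """Each stride-1 3x3 conv widens the receptive field by 2 pixels."""
    return 1 + 2 * n_layers


def conv_weights(kernel, channels, n_layers=1):
    """Weights of n_layers k x k convolutions mapping C -> C channels."""
    return n_layers * kernel * kernel * channels * channels
```

Three stacked 3×3 layers see the same 7×7 region as one 7×7 layer but need \(27C^2\) rather than \(49C^2\) weights, and they insert two extra nonlinearities along the way.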

Because VGG19 is hierarchical, it can recognize intricate patterns42. Shallow layers capture simple features such as edges and textures, whereas deeper layers capture higher-level features such as facial or jaw movements. This fine granularity is useful for driver drowsiness detection, where eyelid closure and prolonged blinking are informative cues. Although VGG19 is more computationally expensive than VGG16, it is more accurate and robust in detecting fatigued drivers.

VGG19 is well suited to distinguishing facial features associated with drowsiness, especially when trained on domain-specific datasets such as MRL. Fine-tuning on such data further improves its ability to tell open eyes from closed eyes, which makes it suitable for systems that monitor a driver's state in real time. Because it captures fatigue-related patterns effectively and with high accuracy, the model is well suited to a wide range of driving situations.

VGG19 + attention

In this subsection, we describe how a pre-trained VGG19 model is fine-tuned with an attention mechanism to classify open-eye and closed-eye states in the MRL dataset. The aim is to exploit VGG19's feature extraction while using an attention block to focus on the most relevant image features.

The fine-tuned model consists of the pre-trained VGG19 network without its top fully connected layers, loaded with ImageNet weights. The first 15 layers are frozen to preserve the pre-trained feature extraction, and the remaining deeper layers are trained on the dataset. A multi-layer perceptron attention mechanism refines the feature maps by applying global average pooling, dense layers, and a sigmoid activation to them. Global average pooling discards spatial detail while retaining each channel's overall response. Fully connected layers of 512 and 256 neurons with ReLU activation and dropout for regularization are then added. The final layer is a softmax classifier that predicts two output classes: Open-Eyes and Close-Eyes.

Next, consider the channel attention mechanism, which reweights the feature maps produced by VGG19. It first applies global average pooling, which reduces each channel's feature map to a single value, producing one descriptor per channel. This vector is then processed by fully connected layers that model the relationships between channels. Finally, a sigmoid function generates attention weights, which are multiplied channel-wise with the feature maps so that important features are emphasized43.
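The squeeze, excite, and scale steps can be sketched in plain Python. This follows the squeeze-and-excitation pattern; the ReLU in the hidden dense layer and the bottleneck size are assumptions, and in the trained model the dense weights are learned rather than set by hand as in the tests.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dense(vec, weights, bias):
    """Fully connected layer; `weights` holds one row per output unit."""
    return [sum(w * v for w, v in zip(row, vec)) + b for row, b in zip(weights, bias)]


def channel_attention(fmaps, w1, b1, w2, b2):
    """Reweight C feature maps (each H x W) with one learned weight per channel.

    1. Squeeze: global average pooling turns each H x W map into one number.
    2. Excite: two dense layers (ReLU, then sigmoid) produce channel weights.
    3. Scale: every value in channel c is multiplied by that channel's weight.
    """
    squeezed = [sum(sum(row) for row in fm) / (len(fm) * len(fm[0])) for fm in fmaps]
    hidden = [max(0.0, z) for z in dense(squeezed, w1, b1)]  # ReLU is an assumption
    weights = [sigmoid(z) for z in dense(hidden, w2, b2)]
    scaled = [[[x * a for x in row] for row in fm] for fm, a in zip(fmaps, weights)]
    return scaled, weights
```

Because each weight lies in (0, 1), the block can only attenuate channels; uninformative channels are pushed toward zero while informative ones pass through almost unchanged.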

To improve how well the model performs on test data, data augmentation is applied to the training images, including random rotations, width and height shifts, and horizontal flipping. Both the training and test data are loaded from directories and resized to 224 \(\times\) 224. Although the task has only two classes, the categorical class mode (one-hot labels) is used to match the two-unit softmax output layer.

Several measures are taken to improve performance. Training is stopped after a predetermined number of epochs without improvement in validation loss, to avoid overfitting. A model checkpoint saves the model with the best validation accuracy so that the best weights can be restored later. In addition, the learning rate is lowered when the validation loss stops improving, which helps the model converge.
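The learning-rate reduction rule can be sketched as a small scheduler that mirrors the core logic of Keras's `ReduceLROnPlateau` callback (its `factor`, `patience`, and `min_lr` settings). The values used here are hypothetical because the text does not report them, and Keras options such as `cooldown` and `min_delta` are left out.

```python
class ReduceLROnPlateau:
    """Multiply the learning rate by `factor` after `patience` epochs without improvement."""

    def __init__(self, lr, factor=0.5, patience=2, min_lr=1e-7):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_lr = min_lr
        self.best = float("inf")
        self.wait = 0

    def step(self, val_loss):
        """Record one epoch's validation loss and return the learning rate to use next."""
        if val_loss < self.best:
            self.best = val_loss
            self.wait = 0
        else:
            self.wait += 1
            if self.wait >= self.patience:
                self.lr = max(self.lr * self.factor, self.min_lr)
                self.wait = 0  # restart the count after each reduction
        return self.lr
```

Reducing the step size on a plateau lets the optimizer settle into a minimum that a larger learning rate keeps overshooting.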

After training, the model is evaluated on the test dataset, and key metrics such as accuracy and loss are recorded. The model with the lowest loss is saved and used in future applications to classify drivers' eye states in real time and assess their drowsiness.

DenseNet169

DenseNet169 is a deep convolutional network with 169 layers, developed to alleviate the vanishing gradient problem44 while making feature learning more effective and using fewer parameters. It reuses previously learned features and, in doing so, facilitates the propagation of gradients. Each layer receives the feature maps of all preceding layers as input, which encourages feature reuse and reduces parameter redundancy.
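Dense connectivity makes the channel count grow linearly inside each block. The sketch below uses the standard DenseNet-169 (DenseNet-BC) configuration: growth rate 32, dense blocks of 6, 12, 32, and 32 layers, a 64-channel stem, 1×1 plus 3×3 convolutions per dense layer, and transition layers that halve the channels. The helper names are illustrative.

```python
GROWTH_RATE = 32
BLOCKS = (6, 12, 32, 32)  # dense layers per block in DenseNet-169
INIT_CHANNELS = 64        # channels after the stem convolution
COMPRESSION = 0.5         # transition layers halve the channel count


def densenet_channels(blocks=BLOCKS, k=GROWTH_RATE, c0=INIT_CHANNELS, theta=COMPRESSION):
    """Channel count at the end of each dense block.

    Each layer adds k new feature maps that are concatenated onto the input
    of every later layer in the block, so a block of n layers adds n * k.
    """
    c = c0
    out = []
    for i, n_layers in enumerate(blocks):
        c = c + n_layers * k
        out.append(c)
        if i < len(blocks) - 1:  # no transition after the last block
            c = int(c * theta)
    return out


def densenet_depth(blocks=BLOCKS):
    """Weighted layers: 2 convs per dense layer + stem conv + transitions + classifier."""
    return 2 * sum(blocks) + 1 + (len(blocks) - 1) + 1
```

The final block outputs 1,664 channels, and counting two convolutions per dense layer plus the stem, three transitions, and the classifier gives the 169 layers in the name.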

DenseNet169 captures the intricate multi-level features needed to recognize driver drowsiness. Because it is sensitive to slight eye-movement indicators such as eye closure and slow blinking, it is useful for driver fatigue detection in general45.

Fine-tuning DenseNet169 on the MRL dataset improves its ability to distinguish open from closed eyes and to classify them accurately. The dense connectivity also supports generalization, helping the model perform well in real-life driver monitoring situations.

ResNet50V2

ResNet50V2 is a deep convolutional neural network with residual learning, developed at Microsoft Research as an updated version of ResNet5046. The network has 50 layers organized into identity and convolutional residual blocks, whose shortcut connections improve gradient propagation and mitigate the vanishing gradient problem. This architecture allows deeper networks to remain stable during training, making it effective for complex image classification tasks.
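Why identity shortcuts help gradient propagation can be seen in a toy model: a stack of scalar linear layers, with and without a shortcut around each layer. This is an illustration under deliberately simplified assumptions (no nonlinearities, normalization, or real weights), not ResNet50V2 itself; the function name is illustrative.

```python
def gradient_through_stack(n_layers, w, residual):
    """d(output)/d(input) for a stack of scalar linear layers.

    Plain layer:    y = w * x      -> local derivative w
    Residual layer: y = x + w * x  -> local derivative 1 + w
    The chain rule multiplies the local derivatives of all layers.
    """
    local = (1.0 + w) if residual else w
    grad = 1.0
    for _ in range(n_layers):
        grad *= local
    return grad


if __name__ == "__main__":
    print(gradient_through_stack(50, 0.01, residual=False))  # about 1e-100: vanished
    print(gradient_through_stack(50, 0.01, residual=True))   # about 1.64: preserved
```

With small layer weights the plain stack's gradient shrinks geometrically with depth, while the identity path contributes a term of 1 to each local derivative, so the gradient reaching early layers stays usable.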

ResNet50V2's deep hierarchical feature extraction is a major asset for driver drowsiness detection47. The initial layers capture basic image properties, while the higher layers focus on eye closure and subtle facial expressions. Its residual connections also keep optimization stable, which makes fine-tuning on the MRL dataset more efficient and less prone to overfitting.

These properties allow the fine-tuned ResNet50V2 to discriminate effectively between open and closed eyes in the MRL dataset and thus to classify drowsiness states. The model showed no notable generalization issues, suggesting that it is viable for real-world deployment in real-time driver supervision, with accurate detection across different lighting conditions and surroundings.

InceptionResNetV2

InceptionResNetV2 combines the Inception and ResNet designs in a single framework, adding residual connections to Inception modules to improve accuracy and training efficiency48. The residual connections ease the difficulties of training very deep networks while maintaining a high degree of accuracy.

These characteristics make the model appropriate for driver drowsiness detection. Its residual Inception blocks can identify complex and abstract facial features relevant to drowsiness, such as eye closure and other signs of fatigue30.

Fine-tuning InceptionResNetV2 on the MRL dataset improves the model's ability to distinguish between closed and open eyes. Because InceptionResNetV2 extracts relevant features and learns efficiently, it enables a real-time system that monitors drivers for drowsiness and helps prevent road accidents caused by fatigue.

InceptionV3

InceptionV3 is a deep convolutional neural network developed by Google. It is built from Inception modules that process the input through parallel convolutional branches and is designed to use computational resources efficiently and reduce cost. Two of its other components are auxiliary classifiers and factorized convolutions49.
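The savings from factorized convolutions follow from simple counting. This sketch assumes equal channel counts in every layer, so the channel factors cancel and only kernel areas need comparing; the helper names are illustrative.

```python
def kernel_weights(kh, kw):
    """Weights per input/output channel pair for a kh x kw kernel."""
    return kh * kw


def stacked_3x3_vs_5x5():
    """Two stacked 3x3 convs cover the same 5x5 receptive field as one 5x5 conv."""
    return 2 * kernel_weights(3, 3), kernel_weights(5, 5)


def asymmetric_vs_square(n):
    """A 1 x n conv followed by an n x 1 conv covers an n x n receptive field."""
    return kernel_weights(1, n) + kernel_weights(n, 1), kernel_weights(n, n)


def saving(factorized, original):
    """Fraction of weights removed by the factorization."""
    return 1 - factorized / original
```

Replacing a 5×5 kernel with two 3×3 kernels uses 18 weights instead of 25 (a 28% saving), and splitting a 7×7 kernel into 1×7 and 7×1 uses 14 instead of 49.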

InceptionV3 is also suited to monitoring drowsy drivers because it captures both fine and coarse details of facial features. Its Inception modules make it easier to identify eye closure and other signs of fatigue precisely, providing the cues the monitoring process needs to detect driver drowsiness.

Fine-tuning InceptionV3 on the MRL dataset substantially improves its reliability in detecting driver drowsiness, and the model remains efficient enough for real-time applications.

MobileNet

MobileNetV2 is a lightweight convolutional neural network designed for mobile and embedded systems. Developed by Google, it employs two techniques: depthwise separable convolutions and inverted residual blocks50. These techniques reduce computational complexity without losing much accuracy. Owing to its efficient architecture, MobileNetV2 is well suited to resource-constrained environments that require real-time inference.
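The cost advantage of depthwise separable convolutions can be quantified by counting multiply-adds for a stride-1 convolution that preserves spatial size. The layer sizes in the tests are illustrative, not taken from the paper; MobileNetV2 uses this factorization inside its inverted residual blocks.

```python
def standard_conv_mults(h, w, k, c_in, c_out):
    """Multiply-adds of a k x k convolution producing an h x w x c_out output."""
    return h * w * k * k * c_in * c_out


def depthwise_separable_mults(h, w, k, c_in, c_out):
    """Depthwise k x k conv (one filter per input channel) plus a 1 x 1 pointwise conv."""
    depthwise = h * w * k * k * c_in
    pointwise = h * w * c_in * c_out
    return depthwise + pointwise


def cost_ratio(k, c_out):
    """Separable / standard cost, which simplifies to 1/c_out + 1/k^2."""
    return 1 / c_out + 1 / (k * k)
```

For a 3×3 layer with 256 input and output channels on a 14×14 map, the separable version needs about 13.3 million multiply-adds instead of about 115.6 million, roughly 8.7 times fewer.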

MobileNetV2's ability to extract features hierarchically makes it appropriate for driver drowsiness detection. The model can identify facial indicators such as eye closure and prolonged blinking at low computational cost, which is particularly valuable in embedded real-time applications where every millisecond counts.

Fine-tuned on the MRL dataset, MobileNetV2 quickly classifies eyes as open or closed, so drowsiness is detected with low latency. The architecture is also easy to deploy on in-vehicle driver monitoring systems, ensuring that timely fatigue warnings are issued. The main drawback of this model is its relatively low accuracy.
