Prototype based contrastive graph clustering network for reducing false negatives

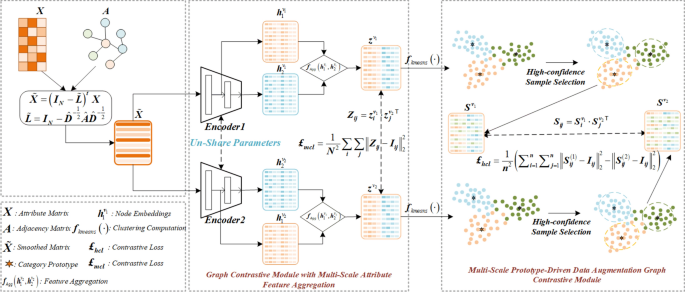

This section introduces the proposed prototype-driven contrastive graph clustering network, as shown in Fig. 1. The network consists of two primary components: the Multi-Scale Attribute Feature Aggregation Graph Contrastive Module (MSAFA) and the Multi-Scale Prototype-Driven Data Augmentation Graph Contrastive Module (MSPDA). In MSAFA, two dual-layer Multi-Layer Perceptron (MLP) graph encoders are constructed, each with unshared parameters, one of which incorporates randomly added noise. As a result, even with the same input, the two encoders produce different feature representations, generating dual-view outputs. In this module, aggregation calculations are performed to fuse low-level and high-level sample features, enhancing feature richness and distinguishability, and generating latent representations \({z^{{v_1}}}\) and \({z^{{v_2}}}\). A cross-view decoupled contrastive learning mechanism is introduced, using a mean squared error contrastive loss function that considers only positive samples to constrain the similarity between embeddings from different views. This enables the model to learn more robust and consistent representations across views. In MSPDA, the multi-scale views \(h_1^{{v_1}}\), \(h_2^{{v_1}}\) from Encoder 1 and those \(h_1^{{v_2}}\), \(h_2^{{v_2}}\) from Encoder 2 are processed through k-means clustering to generate prototypes (cluster centers) for each category. According to the clustering concept, samples clustered around prototypes are assumed to have high similarity. Thus, high-confidence sample sets are collected to generate augmented views. To mitigate the influence of false negative samples, a cross-view decoupled contrastive learning mechanism is used to align embeddings from different augmented views. This enables the model to learn features better suited for clustering tasks.

Detailed definitions of these modules are provided in the following sections.

Notations and problem definition

Given an undirected graph \(G = \left\{ {X,E,A} \right\}\), \(V = \left\{ {{v_1},{v_2},…,{v_N}} \right\}\) represents the set of N nodes in the graph G, E represents the set of edges in the graph G, and these nodes are partitioned into K categories. Here, \(X \in {\mathbb {R}^{N \times D}}\) and \(A = \left\{ {{a_{ij}}} \right\} \in {\mathbb {R}^{N \times N}}\) represents the attribute matrix and the adjacency matrix of the graph G respectively. The degree matrix is defined as \(D = diag\left( {{d_1},{d_2},…,{d_N}} \right) \in {\mathbb {R}^{N \times N}}\), where \({d_i} = \sum \nolimits _{\mathrm{{i}} = {v_i},j = {v_j}} {{a_{ij}}}\), \(\left( {{v_i},{v_j}} \right) \in E.\) Therefore, the Laplacian matrix is defined as \(L = D – A\). \(\hat{A} = A + I\) is the adjacency matrix of the graph G with self-loops added, and I is the \(N \times N\) identity matrix. The corresponding symmetrically normalized graph Laplacian matrix is denoted as \(\tilde{L} = I – {\hat{D}^{ – \frac{1}{2}}}\hat{A}{\hat{D}^{ – \frac{1}{2}}}\), and \(\hat{D}\) is the degree matrix corresponding to \(\hat{A}.\)

Graph contrastive module with multi-scale attribute feature aggregation (MSAFA)

Graph contrastive learning methods typically learn node or graph representations by contrasting positive and negative sample pairs. For instance, CCGC11 and CONVERT20 use high-confidence pseudo-labels to guide the creation of positive and negative sample pairs, followed by designing contrastive loss functions to optimize the model. However, pairs created with inaccurate pseudo-labels are prone to the false negative problem. To address this issue, a decoupled contrastive learning mechanism is designed, treating all data-augmented samples as positive, eliminating the need for negative samples. Embedding representations from different data-augmented views are aligned, resulting in more robust and consistent embeddings. Based on this concept, it is essential to improve sample quality by eliminating noise and enhancing feature richness. Therefore, the MSAFA module incorporates noise processing, twin encoders for specific views, and a decoupled contrastive loss function.

Cui et al.34 argue that the entanglement between filters and weight matrices does not improve performance in unsupervised graph representation learning and can harm training efficiency. They propose a carefully designed Laplacian smoothing filter to mitigate this issue and more effectively eliminate high-frequency noise in node features. Inspired by this approach, a Laplacian smoothing filter is also adopted to remove high-frequency noise and obtain the smoothed attribute matrix \(\tilde{X}\), as shown in Eq. 1:

$$\begin{aligned} \tilde{X} = {\left( {I – \tilde{L}} \right) ^t}X, \end{aligned}$$

(1)

where \(\tilde{L}\) represents the symmetric normalized graph Laplacian matrix, t is the number of layers of Laplacian filtering, I is the identity matrix, and X is the attribute matrix. The \(\tilde{X}\) matrix is then fed into the MSAFA module for further computation.

The MSAFA module consists of two MLP encoders with identical structures but non-shared parameters, also known as twin encoders. Both encoders are configured with two layers, each containing the same number of neurons. The difference is that random noise \(\varepsilon\) is added to the parameters of one encoder. After passing \(\tilde{X}\) through the twin encoders, the sample-specific view embeddings are obtained, as shown in Eqs. 2 and 3 :

$$\begin{aligned} & h_1^{{v_1}},h_2^{{v_1}} = ML{P_1}\left( {\tilde{X},{\theta _1}} \right) , \end{aligned}$$

(2)

$$\begin{aligned} & h_1^{{v_2}},h_2^{{v_2}} = ML{P_2}\left( {\tilde{X},{\theta _2} + \varepsilon } \right) , \end{aligned}$$

(3)

where \(h_1^{{v_1}}\) and \(h_2^{{v_1}}\) refer to the low-level and high-level embedding views from Encoder 1, while \(h_1^{{v_2}}\) and \(h_2^{{v_2}}\) refer to the low-level and high-level embedding views from Encoder 2. \({\theta _1}\) and \({\theta _2}\) are the parameters of the encoders. Next, L2 normalization is applied to \(h_1^{{v_1}}\), \(h_2^{{v_1}}\), \(h_1^{{v_2}}\), and \(h_2^{{v_2}}\), as shown in Eqs. 4 and 5:

$$\begin{aligned} & h_1^{{v_1}} = \frac{{h_1^{{v_1}}}}{{\left\| {h_1^{{v_1}}} \right\| }}, h_2^{{v_1}} = \frac{{h_2^{{v_1}}}}{{\left\| {h_2^{{v_1}}} \right\| }}, \end{aligned}$$

(4)

$$\begin{aligned} & h_1^{{v_2}} = \frac{{h_1^{{v_2}}}}{{\left\| {h_1^{{v_2}}} \right\| }}, h_2^{{v_2}} = \frac{{h_2^{{v_2}}}}{{\left\| {h_2^{{v_2}}} \right\| }}. \end{aligned}$$

(5)

To enrich sample features and enhance their distinguishability, an aggregation function is used to fuse low-level and high-level features, resulting in the aggregated views \({z^{{v_1}}}\) and \({z^{{v_2}}}\), as shown below:

$$\begin{aligned} & {z^{{v_1}}} = {f_{agg}}\left( {h_1^{{v_1}},h_2^{{v_1}}} \right) , \end{aligned}$$

(6)

$$\begin{aligned} & {z^{{v_2}}} = {f_{agg}}\left( {h_1^{{v_2}},h_2^{{v_2}}} \right) . \end{aligned}$$

(7)

Where the \({f_{agg}}\left( \cdot \right)\) function represents the aggregation calculation, and in this paper, the aggregation function used is the concatenation operation.

In contrastive learning, representations of the same instance from different views should be more similar, while representations of different instances should be more distinct. Therefore, the similarity between cross-view representations \({z^{{v_1}}}\) and \({z^{{v_2}}}\) is calculated using Eq. 8, as shown below:

$$\begin{aligned} {Z_{ij}} = z_i^{{v_1}} \cdot { {z_j^{{v_2}}} ^\top }, \end{aligned}$$

(8)

where \({Z_{ij}}\) refers to the cosine similarity between node i in view \({z^{{v_1}}}\) and node j in view \({z^{{v_2}}}\) .

In summary, a decoupled contrastive learning mechanism is designed, treating all data-augmented samples as positive samples. Using the target loss function, cross-view positive samples with similar semantic content are consistently aligned, constraining the similarity between embeddings from different views. This enables the model to learn more robust and consistent representations across views. With this design, the following decoupled mean squared error contrastive loss function \({\pounds _{mcl}}\) is proposed, calculated using Eq. 9:

$$\begin{aligned} {\pounds _{mcl}} = \frac{1}{{{N^2}}}\sum \limits _{i = 1}^N {\sum \limits _{j = 1}^N {\left\| {{Z_{ij}} – {I_{ij}}} \right\| _2^2} }, \end{aligned}$$

(9)

when i equals j, \({I_{ij}}\) it equals 1; otherwise, \({I_{ij}}\) it is equal to 0.

Typically, existing contrastive learning methods rely on constructing positive and negative sample pairs, which, as noted in the introduction, can lead to false negative samples that hinder model optimization. The proposed decoupled contrastive learning mechanism effectively mitigates the impact of false negative samples.

Multi-scale prototype-driven data augmentation graph contrastive module (MSPDA)

Relying solely on the MSAFA module for feature learning may neglect the relationship between feature learning and clustering. Therefore, the clustering results from the MSPDA module are used to iteratively guide feature learning, gradually improving clustering performance. A multi-scale prototype-driven data augmentation method is designed based on the output of the MSAFA module. In the PCL method23, prototypes are defined as “representative embeddings of a set of semantically similar instances.” The CPLAE method14 defines prototypes as the average of instance embeddings within each category. The ProPos and DPN methods35,36 use cluster centers obtained via k-means as prototypes. Thus, samples clustered around a prototype are likely to belong to the same category. Inspired by this, the k-means algorithm is used to obtain the prototype \(C_j^u\) for each category. The distance between samples and prototypes is calculated, and samples with distances below the threshold \(\tau\) are collected, while boundary samples with greater distances are discarded. The remaining samples, referred to as high-confidence samples, form the high-confidence sample set \(n < N\). The calculation formulas for \(C_j^u\) and \({S^{{v_1}}},{S^{{v_2}}}\) are as follows:

$$\begin{aligned} & C_j^u = {f_{k – means}}(h), \end{aligned}$$

(10)

$$\begin{aligned} & s_i^u = \left\{ {\begin{array}{*{20}{c}} {h_{i,j}^u}& {d\left( {h_{i,j}^u,C_j^u} \right) < \tau }\\ 0& {else} \end{array}} \right. , \end{aligned}$$

(11)

where \(u \in \left\{ {{v_1},{v_2}} \right\}\), \(h \in \left\{ {h_1^u,h_2^u} \right\}\) and \(j = \left[ {1,2, \ldots ,K} \right]\). \(C_j^u\) represents the cluster center of the category j obtained by applying k-means on view u. The function \(d\left( \cdot \right)\) represents the Euclidean distance calculation and \(\tau\) is the threshold distance. \(h_{i,j}^u\) represents the embedding of samples belonging to the category j in view u. Thus, the high-confidence sample set is defined as \({S^u} = \left\{ {s_1^u,s_2^u, \ldots ,s_n^u} \right\}\).

Inspired by ProPos36, the k-means algorithm is used to obtain each cluster center as the prototype for each category. However, unlike ProPos, which uses prototypes as pseudo-labels to construct positive and negative sample pairs, this approach can lead to false negatives due to inaccurate pseudo-labels. To avoid generating false negatives, decoupled contrastive learning is adopted, considering all high-confidence samples as positive and aligning their embeddings across different views. This approach enables the model to learn more suitable features for clustering tasks. Thus, a decoupled mean squared error contrastive loss function is proposed for high-confidence sample sets, as shown below:

$$\begin{aligned} & {S_{ij}} = S_i^{{v_1}} \cdot { {S_j^{{v_2}}} ^\top }, \end{aligned}$$

(12)

$$\begin{aligned} & {\pounds _{hcl}} = \frac{1}{{{n^2}}}\left( {\sum \limits _{i = 1}^n {\sum \limits _{j = 1}^n {\left\| {S_{ij}^{\left( 1 \right) } – {I_{ij}}} \right\| _2^2 + \left\| {S_{ij}^{\left( 2 \right) } – {I_{ij}}} \right\| _2^2} } } \right) , \end{aligned}$$

(13)

where \(S_{ij}^{\left( 1 \right) }\) and \(S_{ij}^{\left( 2 \right) }\) represent the similarity matrices of high-confidence sample embeddings from the first and second layers of Encoder 1 and Encoder 2, respectively.

Therefore, the loss of the entire framework can be represented as:

$$\begin{aligned} \pounds = \alpha {\pounds _{mcl}} + \left( {1 – \alpha } \right) {\pounds _{hcl}}, \end{aligned}$$

(14)

where \(\alpha\) is the balancing parameter.

The complete algorithm is summarized in Algorithm 1. Discriminative embeddings and excellent clustering results are obtained through the joint optimization of the MSAFA and MSPDA modules.

The training procedure of the proposed method

link