Machine learning methods on BioVid heat pain database for pain intensity estimation

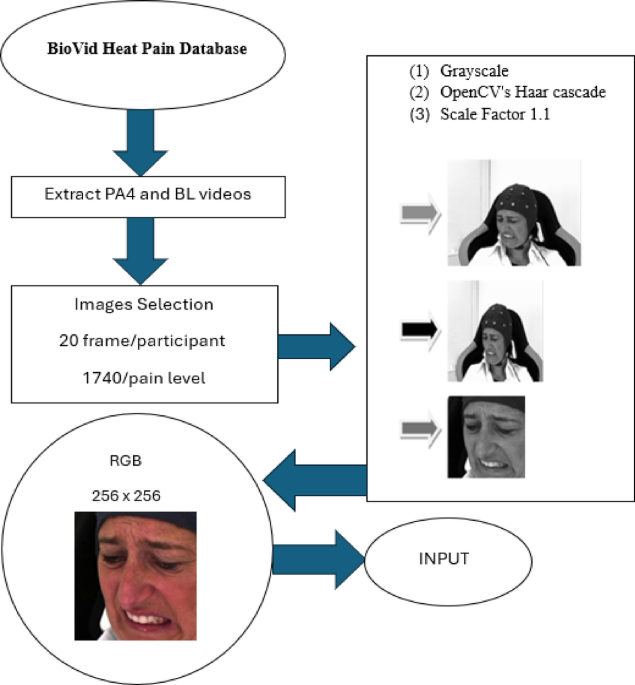

In this study, we developed and evaluated a set of deep learning models and transformers—CNN, CvT, MobileViT, and VGG16—to estimate pain intensity based on facial expressions from the BioVid Heat Pain Database. Our models aimed to differentiate between high pain (PA4) and no pain (BL1) images by using architectures designed to extract and process key visual features (see Table 1). The results demonstrated that the CNN and CvT models performed better than previously reported studies in the literature, achieving higher accuracy and improved generalization. This performance is particularly noteworthy, as we employed different participants for training and testing phases, a methodological improvement over earlier studies that often used the same participants in both stages, which can lead to inflated accuracy metrics due to lack of true generalization.
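The participant-independent protocol described above can be illustrated with a minimal sketch. It assumes each frame is tagged with a participant identifier; the function name `split_by_participant`, the `test_fraction` value, and the toy sample names are hypothetical and are not taken from the study's own pipeline.

```python
import random

def split_by_participant(samples, test_fraction=0.2, seed=0):
    """Split (participant_id, frame, label) tuples so that no participant
    contributes frames to both the training and the test set."""
    participants = sorted({pid for pid, _, _ in samples})
    rng = random.Random(seed)
    rng.shuffle(participants)
    # Hold out a fraction of participants (at least one), not a fraction of frames.
    n_test = max(1, round(len(participants) * test_fraction))
    held_out = set(participants[:n_test])
    train = [s for s in samples if s[0] not in held_out]
    test = [s for s in samples if s[0] in held_out]
    return train, test

# Toy data: 5 hypothetical participants, one BL1 (0) and one PA4 (1) frame each.
samples = [(f"P{i:02d}", f"frame_{i}_{label}.png", label)
           for i in range(5) for label in (0, 1)]
train, test = split_by_participant(samples, test_fraction=0.2)
train_ids = {s[0] for s in train}
test_ids = {s[0] for s in test}
```

Splitting at the participant level rather than the frame level is what prevents the same face from appearing on both sides of the evaluation.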

Our CNN model achieved an accuracy of 71%, which is significantly higher than other studies that applied convolutional architectures for pain intensity detection. For instance, Zheng et al.6 reported an accuracy of only 52% when classifying pain and no-pain conditions using a 5-layer CNN architecture. This improvement in accuracy demonstrates the effectiveness of our optimized CNN model, especially in generalizing to unseen participants, a critical factor in real-world applications. Moreover, the CvT model, which integrates convolutional operations into a transformer framework, achieved an accuracy of 69%. This result underscores the potential of hybrid architectures that combine the local feature extraction strengths of CNNs with the global context modelling capabilities of transformers. The improved performance of our CvT model highlights the importance of spatial and temporal features when estimating pain intensity from facial expressions. In comparison, the VGG16 architecture, a more traditional CNN model, demonstrated consistent but moderate accuracy in our study. While its accuracy was lower than that of the CNN and CvT models, VGG16 provided reliable results across various datasets, achieving consistent performance even when tested on different sets of participants. This consistency suggests that, while VGG16 may not be the most powerful model for pain detection, it offers a stable baseline for comparison and is still useful in scenarios where model simplicity and reliability are prioritized.

A key challenge in this study was balancing model complexity with computational efficiency. The CNN and CvT models, although highly accurate, require substantial computational resources. The CvT, for example, is computationally demanding due to its hybrid architecture, which integrates convolutions with transformer layers. This added complexity improves accuracy but increases the model’s training time and resource consumption, making it less suitable for real-time applications, particularly in environments with limited computational power, such as mobile or embedded systems. On the other hand, the MobileViT model was specifically designed for resource efficiency, utilizing fewer parameters and a streamlined architecture to perform pain classification. While MobileViT achieved a lower accuracy (60%) compared to CNN and CvT, it excelled in scenarios where computational resources are constrained, such as real-time applications on mobile devices. This trade-off between performance and efficiency illustrates the adaptability of MobileViT in situations where lightweight models are needed, even if they sacrifice some accuracy.

Finally, the findings of this study align with recent advancements in video-based pain intensity estimation, as demonstrated by the VideoMAE model in the study by Pittara et al.25. While our image-based approach successfully captures static pain features, achieving comparable accuracies (71% for CNN and 69% for CvT), video-based models such as VideoMAE leverage temporal dynamics to enhance pain detection. By integrating spatiotemporal cues, VideoMAE achieved an accuracy of 71% in distinguishing pain from no pain. This highlights the potential of video-based approaches in capturing pain expressions that evolve over time, which static images may miss.

Several prior studies in automated pain recognition have gone beyond applying standard deep learning models and have proposed tailored pipelines or multimodal approaches. Our contribution is distinct in several ways. First, rather than focusing on a single architecture, we systematically evaluate a range of both conventional CNNs and transformer-based architectures (CvT, MobileViT) on the same dataset, providing a comparative benchmark that is currently lacking in the literature. Second, we emphasize performance metrics beyond accuracy (including precision, recall, F1-score, and log loss), which gives a more holistic picture of model reliability—particularly relevant for clinical applications where false negatives and positives carry different risks. Third, our focus on lightweight and mobile-class models (e.g., MobileViT) directly addresses practical deployment scenarios, such as bedside or remote healthcare monitoring, which many earlier studies have not prioritized.
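To make the metrics named above concrete, the following is a minimal sketch of how accuracy, precision, recall, F1-score, and log loss can be computed for a binary PA4 vs. BL1 classifier. The function name `binary_metrics`, the 0.5 decision threshold, and the toy labels and probabilities are illustrative assumptions, not values from the study.

```python
import math

def binary_metrics(y_true, y_prob, threshold=0.5, eps=1e-15):
    """Compute common binary classification metrics.

    y_true: list of 0/1 labels (here 1 = PA4, 0 = BL1, by assumption).
    y_prob: predicted probability of class 1 for each sample.
    """
    y_pred = [1 if p >= threshold else 0 for p in y_prob]
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)

    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)

    # Clip probabilities so that log(0) never occurs.
    clipped = [min(max(p, eps), 1 - eps) for p in y_prob]
    log_loss = -sum(t * math.log(p) + (1 - t) * math.log(1 - p)
                    for t, p in zip(y_true, clipped)) / len(y_true)

    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1, "log_loss": log_loss}

# Toy example with made-up predictions.
y_true = [1, 1, 1, 0, 0, 0]
y_prob = [0.9, 0.6, 0.4, 0.2, 0.7, 0.1]
metrics = binary_metrics(y_true, y_prob)
```

Recall counts missed pain frames (false negatives) and precision counts false alarms, which is why the two are reported separately when their clinical costs differ. Log loss additionally penalizes confident wrong probabilities, which accuracy ignores.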

A number of previous works on the BioVid database have added biosignals in addition to facial video, and these consistently report higher performance than image-only systems. Werner et al.7 combined facial features with electrocardiography (ECG), electromyography (EMG), and electrodermal activity (EDA), achieving about 70% accuracy across all pain levels. However, that study does not report the accuracy obtained without biosignals. Werner et al.8 extended this line of work, again incorporating video with ECG, EMG, and EDA signals, and reported roughly 72% accuracy. More recently, Zheng and Lin6 also connected facial expressions with physiological signals (ECG and EDA), obtaining around 52% accuracy for pain vs. no-pain and 51% for all levels. That study likewise does not report the accuracy obtained without biosignals. In contrast, image-only approaches yield lower accuracies and highlight the difficulty of the task without biosignals. Gkikas et al.11 used a leave-one-subject-out (LOSO) cross-validation protocol on BioVid facial video and obtained 73.3% for pain vs. no-pain, but only 31.5% for all levels. In their follow-up study, still under LOSO evaluation, performance improved modestly to 77.1% for pain vs. no-pain and 35.4% for all levels when they included biosignals. These comparisons make clear that biosignals such as ECG, EMG, and EDA substantially enhance recognition accuracy, but at the cost of requiring contact sensors, whereas facial video alone, although less accurate, remains attractive for practical, sensor-free deployment in real-world clinical environments.
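For readers unfamiliar with the LOSO (leave-one-subject-out) protocol referred to above, the fold construction can be sketched as follows. This is a generic illustration, not the code of any cited study; the generator name `loso_folds` and the toy participant identifiers are hypothetical.

```python
def loso_folds(participant_ids):
    """Yield (held_out, train_idx, test_idx) for leave-one-subject-out CV.

    participant_ids[i] is the participant that sample i belongs to.
    Each participant is held out exactly once as the test set.
    """
    for held_out in sorted(set(participant_ids)):
        train_idx = [i for i, pid in enumerate(participant_ids) if pid != held_out]
        test_idx = [i for i, pid in enumerate(participant_ids) if pid == held_out]
        yield held_out, train_idx, test_idx

# Toy example: three participants with two samples each.
participant_ids = ["A", "A", "B", "B", "C", "C"]
folds = list(loso_folds(participant_ids))
```

The reported LOSO accuracy is then typically the mean of the per-fold test accuracies, so every participant is evaluated as an unseen subject once.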

Overall, biosignals have repeatedly been shown to outperform facial videos for automatic pain recognition. In the BioVid database, EDA alone typically reaches 82–85% accuracy under LOSO10,7 while EMG and ECG are individually weaker but complementary. Fusion of these channels maintains accuracy in this range. Werner et al.8 further confirmed the advantage of multimodal biosignal integration. More recent works also report strong results: Farmani et al.26 achieved 87.5% accuracy on BioVid using a transformer-based fusion of EDA and ECG, and Gkikas et al.11 demonstrated that combining facial video with HR signals improves performance over video alone.

By contrast, facial video alone consistently performs lower, with multimodal studies reporting around 70–72% accuracy when video is included alongside biosignals10,7 and image-only LOSO evaluations yielding 73–77% binary accuracy and 31–35% for all levels11. Taken together, these findings highlight that biosignals such as EDA, EMG, ECG, or HR remain the most reliable channels for pain recognition, especially under LOSO evaluation. However, facial video retains clear clinical value because it is non-contact, unobtrusive, and scalable, offering a practical alternative where attaching sensors is infeasible. Our study thus provides a rigorous benchmark of the facial expression channel in isolation, positioning it as a complementary modality rather than a replacement for biosignals.

Limitations and challenges

Despite the promising results, this study faced several challenges that influenced the models’ overall efficacy and applicability. Overfitting was a prominent issue, particularly in the more complex models like CNN and CvT, which have large numbers of parameters. While these models performed very well on the training data, they struggled to generalize to unseen participants. This was especially evident when tested on participants with facial expressions that differed significantly from those in the training set. Techniques like dropout, batch normalization, and data augmentation were used to mitigate overfitting, but achieving the right balance between underfitting and overfitting remained a challenge. A unique feature of this study was the use of separate participants for training and testing, a practice that provided a more accurate reflection of how these models would perform in real-world applications. However, this approach also introduced challenges, as the models needed to generalize across individuals with varying pain tolerance and facial expressiveness. Pain is a subjective experience, and the same stimuli can produce very different responses across individuals. This variability made it difficult for the models to generalize effectively, particularly for the MobileViT model, which performed worse than CNN and CvT in handling participant diversity.

Another limitation was the imbalance in the dataset, as it primarily focused on the extremes of pain (PA4) and no pain (BL1). This imbalance skewed the models towards recognizing no-pain frames more effectively, while they sometimes underperformed in detecting high-pain moments. To address this, future work should explore data augmentation techniques like oversampling or synthetically generating intermediate pain levels to create a more balanced dataset. Additionally, transforming the task into a regression problem, where pain is predicted on a continuous scale, could provide finer-grained pain estimates. While facial expressions are a valuable indicator of pain, relying on them alone limits the model’s ability to capture the full complexity of the pain experience. Multimodal data, such as physiological signals (e.g., heart rate, electrodermal activity, electromyography), could significantly improve the robustness of pain detection systems. This is particularly important in cases where facial expressions are suppressed or absent, yet other physiological indicators may still signal pain.
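The oversampling idea mentioned above can be sketched in its simplest form, random oversampling with replacement. This is only one possible variant and not something the study implemented; the function name `random_oversample` and the toy frame names are hypothetical.

```python
import random
from collections import Counter

def random_oversample(samples, labels, seed=0):
    """Duplicate randomly chosen minority-class samples until every class
    has as many samples as the largest class."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_samples, out_labels = list(samples), list(labels)
    for cls, n in counts.items():
        pool = [s for s, y in zip(samples, labels) if y == cls]
        for _ in range(target - n):
            out_samples.append(rng.choice(pool))
            out_labels.append(cls)
    return out_samples, out_labels

# Toy example: four no-pain frames (0) and two high-pain frames (1).
frames = ["f0", "f1", "f2", "f3", "f4", "f5"]
labels = [0, 0, 0, 0, 1, 1]
bal_frames, bal_labels = random_oversample(frames, labels)
balanced_counts = Counter(bal_labels)
```

Oversampling should be applied to the training split only, after the participant-level split, so that duplicated frames never leak into the test set.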

While our study makes use of the BioVid Heat Pain Database10, which is one of the two most widely recognized benchmark datasets for experimental pain research, we acknowledge that our evaluation has not yet extended to other standard datasets. In particular, the UNBC-McMaster Shoulder Pain Expression Archive27 is considered a gold standard for pain recognition and has been extensively used in prior studies, particularly for validating algorithms on patient populations with clinical pain. This dataset contains over 200 video sequences from 25 patients with shoulder pain, annotated with Facial Action Coding System (FACS) codes, observer ratings, and self-reported pain scores. Future work will aim to expand our comparative analysis to include this dataset, thereby strengthening cross-dataset benchmarking and ensuring that our proposed models are robust to variations in experimental setup, participant demographics, and pain modalities.

Future directions

Future studies should incorporate multimodal data from the BioVid Heat Pain Database, including physiological signals like heart rate and EMG, to develop a more comprehensive understanding of pain. Combining facial expressions with biosignals could lead to more robust and accurate pain detection models, especially when dealing with individuals who may mask their facial expressions of pain. Moreover, to make pain estimation models more practical for clinical settings, future research should focus on developing lightweight, real-time models that can be deployed on mobile devices or wearable systems. Techniques like model pruning, quantization, and knowledge distillation could reduce the computational demands of CNN and CvT models while maintaining high accuracy.
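Of the compression techniques listed above, knowledge distillation is the least self-explanatory, so a minimal sketch of its loss is given here. It follows the commonly used temperature-softened formulation rather than anything implemented in this study. The function names, the temperature of 2.0, the weight `alpha`, and the example logits are all illustrative assumptions.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax with an optional temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, label,
                      temperature=2.0, alpha=0.5):
    """alpha * T^2 * KL(teacher_T || student_T) + (1 - alpha) * CE(student, label).

    The KL term pulls the small student toward the large teacher's softened
    output distribution; the cross-entropy term keeps it tied to the true label.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps)
             for pt, ps in zip(p_teacher, p_student) if pt > 0)
    ce = -math.log(softmax(student_logits)[label])
    return alpha * temperature ** 2 * kl + (1 - alpha) * ce

# Toy example: logits for [BL1, PA4] from a hypothetical teacher and student.
teacher_logits = [0.2, 2.5]
student_logits = [0.1, 1.0]
loss = distillation_loss(student_logits, teacher_logits, label=1)
# With identical logits and alpha = 1, only the KL term remains and it is zero.
zero_loss = distillation_loss(teacher_logits, teacher_logits, label=1, alpha=1.0)
```

In this setting the teacher would be a larger model such as the CNN or CvT, and the student a lighter model intended for mobile deployment.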

Furthermore, incorporating domain adaptation techniques and expanding the diversity of training datasets will be critical to improve the generalization of pain estimation models across different populations. Collecting data from a wider range of ethnicities, age groups, and genders will help ensure that these models are not biased toward specific demographics. In addition, the lack of interpretability in deep learning models remains a major challenge for clinical adoption. Future research should integrate explainable AI techniques to provide clinicians with insights into how the model arrives at its predictions. This transparency is crucial for gaining trust in medical settings, where understanding the decision-making process is vital for patient care. Finally, because our research is based on facial expression images from the BioVid Heat Pain Database and aimed only to differentiate between high pain (PA4) and no pain (BL1), future work could explore combining both static and dynamic modalities (pictures and videos from patients) to develop a more robust and comprehensive pain assessment system.
