Machine learning-based personalized training models for optimizing marathon performance through pyramidal and polarized training intensity distributions

Study design and ethics

All experimental procedures adhered to the Declaration of Helsinki standards and received approval from Hanyang University’s Institutional Review Board (Approval No. HYU-2025-001). Prior to participation, written informed consent was secured from all participants, including legal guardian consent for those under 18 years of age. The research protocol, data collection, processing, and analytical procedures followed established human subjects research guidelines. This study employed a machine learning-based methodological framework to conduct comparative analysis of marathon training models, specifically examining pyramidal and polarized training intensity distributions. The investigation focused on evaluating individualized training intensity distribution models by incorporating evidence-based training principles and personalized prescription methods to account for individual physiological characteristics, adaptation responses, and exercise intensity preferences [24].

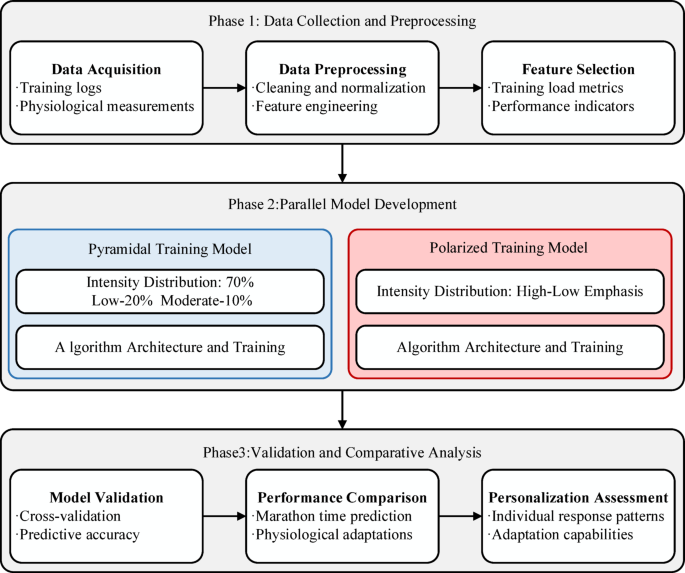

The comprehensive research framework illustrated in Fig. 1 encompasses three sequential phases: data collection and preprocessing, parallel model development, and experimental validation through comparative model analysis. A parallel-group, randomized controlled trial design was implemented, with participants allocated to either pyramidal (n = 60) or polarized (n = 60) training protocols throughout a 16-week preparation period preceding an autumn marathon event. Both experimental groups followed identical data collection protocols and physiological assessment procedures, with training intensity distribution serving as the sole differentiating factor between groups. This parallel development approach facilitated controlled comparative analysis while maintaining the distinct characteristics inherent to each training methodology. The integrated framework prioritized concurrent development and evaluation of both training models, with particular emphasis on critical methodological elements including model architecture, data management protocols, and evaluation metrics to ensure comprehensive comparative analysis of training effectiveness and individualization potential.

Fig. 1. Research framework for comparative analysis of machine learning-based marathon.

Participants

A total of 120 recreational and semi-elite marathon runners (68 male, 52 female) were recruited through local running clubs, online platforms, and university athletics programs. Participants represented diverse training backgrounds and performance levels, reflecting the heterogeneous nature of the modern marathon running population [2]. Baseline participant characteristics are presented in Table 1. The cohort was stratified by experience level: novice (< 2 years, n = 31), intermediate (2–5 years, n = 47), advanced (5–8 years, n = 28), and elite (> 8 years, n = 14), ensuring adequate representation across the performance spectrum to enable meaningful analysis of individual response patterns [18].

Inclusion criteria comprised: age 18–55 years; minimum 12 months of consistent running training (≥ 3 sessions/week); ability to complete half-marathon distance within 6 months prior to enrollment; commitment to complete the full 16-week training intervention; access to GPS-enabled running watch and heart rate monitor; and no planned major competitions during the intervention period beyond the target marathon. Exclusion criteria included: history of cardiovascular disease, metabolic disorders, or musculoskeletal injuries requiring medical intervention within 6 months; current use of performance-enhancing substances or medications affecting cardiovascular response; pregnancy or planned pregnancy during the study period; elite athletes with marathon personal best times faster than 2:45:00 (male) or 3:15:00 (female); inability to attend baseline and follow-up laboratory assessments; and previous participation in structured polarized or pyramidal training programs within 12 months.

Participants were randomly assigned to either pyramidal training (n = 60) or polarized training (n = 60) groups using computer-generated randomization stratified by gender, age group (18–30, 31–40, 41–55 years), and baseline marathon experience. Group allocation was concealed until completion of baseline assessments. As shown in Table 1, demographic and physiological characteristics demonstrated no significant differences between groups at baseline (all p > 0.05), confirming successful randomization as outlined in the study framework (Fig. 1). A total of 116 participants completed the study protocol, achieving a 96.7% retention rate through regular monitoring, flexible scheduling of assessments, and comprehensive support throughout the intervention period.
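The stratified allocation described above can be illustrated with a minimal sketch. The source does not describe the actual randomization software or block structure, so the function name `stratified_allocation`, the dictionary keys, the seed, and the alternating-arm scheme below are all assumptions made for illustration only.

```python
import random
from collections import defaultdict


def stratified_allocation(participants, seed=2025):
    """Allocate participants to two arms, balancing within each stratum.

    `participants` is a list of dicts with hypothetical keys:
    'id', 'gender', 'age_group', 'experience'.
    """
    rng = random.Random(seed)  # fixed seed so the allocation is reproducible
    strata = defaultdict(list)
    for p in participants:
        strata[(p["gender"], p["age_group"], p["experience"])].append(p["id"])

    arms = ("pyramidal", "polarized")
    allocation = {}
    carry = 0  # tracks which arm is "owed" the next slot after odd-sized strata
    for key in sorted(strata):
        ids = strata[key]
        rng.shuffle(ids)  # random order within the stratum
        for pos, pid in enumerate(ids):
            allocation[pid] = arms[(pos + carry) % 2]
        carry = (carry + len(ids)) % 2
    return allocation
```

With an even total sample, this scheme yields equal arm sizes overall while keeping each stratum within one participant of balance.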

Data collection

Data collection employed a systematic approach utilizing consumer-grade wearable technology to ensure practical feasibility and participant compliance throughout the 16-week marathon preparation period. The comprehensive monitoring framework was designed to capture essential training and physiological parameters while maintaining ecological validity for recreational marathon runners [24]. All data acquisition protocols prioritized non-invasive monitoring methods suitable for continuous use during normal training activities (Table 2).

The primary data collection system comprised GPS-enabled sports watches (Garmin Forerunner series) and chest-strap heart rate monitors, providing continuous monitoring of cardiovascular and movement parameters during all training sessions. This approach enabled systematic collection of approximately 50,000 data points per participant over the study duration, encompassing heart rate variability, training load metrics, and performance indicators without imposing unrealistic monitoring demands. The framework incorporated evidence-based principles for individualized training monitoring, ensuring data quality while maintaining practical implementation standards [25].

Data preprocessing followed a standardized pipeline to transform raw sensor outputs into meaningful training variables while preserving the temporal relationships essential for machine learning model development. The preprocessing framework addressed missing data through multiple imputation techniques, applied outlier detection using interquartile range methods, and implemented temporal alignment procedures to synchronize multi-sensor data streams with varying sampling frequencies. Quality assurance protocols included weekly device calibration against laboratory standards and systematic validation of data integrity throughout the collection period (Fig. 2).
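As an illustration of the interquartile range step, the sketch below flags values lying outside the usual Tukey fences. The multiplier `k = 1.5` and the function names are assumptions; the source does not report the fence width or how flagged values were subsequently treated.

```python
import statistics


def iqr_bounds(values, k=1.5):
    """Return (lower, upper) fences at Q1 - k*IQR and Q3 + k*IQR."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def flag_outliers(values, k=1.5):
    """Return a list of booleans, True where a value falls outside the fences."""
    lower, upper = iqr_bounds(values, k)
    return [not (lower <= v <= upper) for v in values]


# Hypothetical heart rate samples (bpm) with one implausible spike.
heart_rate = [142, 145, 147, 150, 151, 149, 146, 230, 148, 144]
flags = flag_outliers(heart_rate)
```

Flagged samples could then be set to missing and passed to the imputation step, though that ordering is also an assumption here.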

Fig. 2. Data preprocessing and feature engineering pipeline for marathon training analysis.

Feature engineering extracted time-domain and frequency-domain characteristics from physiological signals, emphasizing heart rate zone distributions and running dynamics parameters accessible through consumer wearable devices. The process generated 27 optimized features across five categories: cardiovascular measures, movement efficiency indicators, training load parameters, recovery metrics, and athlete profile characteristics. This feature set was specifically designed to capture the distinct physiological signatures associated with pyramidal and polarized training methodologies while maintaining compatibility with practical monitoring constraints. The resulting dataset provided robust input for machine learning model development and enabled comprehensive analysis of individual training responses across diverse athlete populations.
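A heart rate zone distribution feature can be sketched as the share of samples spent in each zone. The example assumes a three-zone model and uses the ordering convention common in the endurance literature (pyramidal: zone 1 > zone 2 > zone 3; polarized: zone 1 > zone 3 > zone 2); the source does not state the exact rule used, and the function names are hypothetical.

```python
from collections import Counter


def zone_fractions(zone_labels):
    """Return the fraction of samples in zones 1, 2, and 3 as a tuple."""
    counts = Counter(zone_labels)
    total = sum(counts[z] for z in (1, 2, 3))
    if total == 0:
        raise ValueError("no samples labelled with zones 1-3")
    return tuple(counts[z] / total for z in (1, 2, 3))


def distribution_shape(fractions):
    """Label a zone distribution by the ordering of its three fractions."""
    z1, z2, z3 = fractions
    if z1 > z2 > z3:
        return "pyramidal"
    if z1 > z3 > z2:
        return "polarized"
    return "other"


# Hypothetical week of zone-labelled samples.
week = [1] * 75 + [2] * 5 + [3] * 20
shape = distribution_shape(zone_fractions(week))
```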

Physiological assessments

Comprehensive physiological assessments employed established, non-invasive laboratory protocols to characterize individual athlete profiles and monitor training adaptations. All testing utilized validated methodologies appropriate for recreational marathon runners, with emphasis on measurements directly relevant to endurance performance and training intensity prescription (Table 3) [26].

Maximal oxygen uptake (VO₂max) was determined using incremental treadmill protocols with metabolic cart analysis, representing the primary measure of aerobic capacity. Lactate threshold assessment employed incremental exercise testing with capillary blood sampling, enabling determination of first lactate threshold (LT₁ at 2 mmol/L) and second lactate threshold (LT₂ at 4 mmol/L) as critical markers for training zone prescription. Running economy was evaluated through steady-state submaximal testing at standardized speeds relevant to marathon performance.

The testing schedule comprised baseline assessments, mid-intervention evaluations, and follow-up assessments at study completion. This timeline captured meaningful physiological adaptations while minimizing participant burden and testing-related interference with training protocols [27]. Heart rate responses were continuously monitored during all testing to establish individual heart rate zones corresponding to metabolic thresholds, enabling precise training intensity prescription for both pyramidal and polarized protocols.
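The mapping from measured thresholds to individual heart rate zones can be sketched as follows. A three-zone model anchored at the heart rates observed at LT₁ and LT₂ is assumed, as is the boundary handling (a sample exactly at a threshold is placed in zone 2); the source does not specify either detail.

```python
def hr_zone(hr_bpm, hr_at_lt1, hr_at_lt2):
    """Assign a heart rate sample to zone 1, 2, or 3.

    Zone 1: below the heart rate at LT1.
    Zone 2: between the heart rates at LT1 and LT2 (inclusive).
    Zone 3: above the heart rate at LT2.
    """
    if hr_at_lt1 >= hr_at_lt2:
        raise ValueError("heart rate at LT1 must be below heart rate at LT2")
    if hr_bpm < hr_at_lt1:
        return 1
    if hr_bpm <= hr_at_lt2:
        return 2
    return 3


# Hypothetical athlete: 150 bpm at LT1, 172 bpm at LT2.
session = [128, 135, 150, 161, 172, 180]
zones = [hr_zone(hr, 150, 172) for hr in session]
```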

All laboratory assessments were conducted under standardized environmental conditions with consistent quality control procedures, including daily equipment calibration and validation protocols. The comprehensive assessment battery provided robust physiological characterization for machine learning model input while maintaining compatibility with the individualized training prescription framework.

Machine learning model development

Machine learning model development employed a systematic approach to capture the distinct physiological signatures associated with pyramidal and polarized training methodologies. The development framework utilized established principles for sports performance modeling while addressing the specific requirements of individualized training prescription [15]. Two parallel models were developed to enable direct comparison of training approaches while maintaining the unique characteristics inherent to each intensity distribution paradigm.

The model architecture selection process considered the complex, high-dimensional nature of endurance training data and the substantial inter-individual variability in training responses documented in endurance athletes [18]. A hybrid approach combining gradient boosting regression for performance prediction and support vector machine classification for training zone assignment was implemented, aligning with recent advances in sports analytics [16].

Hyperparameter optimization employed Bayesian search methodology with five-fold cross-validation to ensure robust model performance across diverse athlete populations (Table 4). The optimization process systematically evaluated learning rates, regularization parameters, and network architecture configurations to maximize prediction accuracy while preventing overfitting. Distinct hyperparameter sets were established for pyramidal and polarized models to accommodate the fundamental differences in training intensity distributions.
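One simple way to build folds that preserve the distribution of a continuous target, such as marathon finish time, is to rank the targets and deal them into folds in rotation. This is a sketch under that assumption; the source does not say how stratification on a continuous outcome was implemented, and `stratified_folds` is a hypothetical helper.

```python
def stratified_folds(targets, n_folds=5):
    """Return a fold index (0..n_folds-1) for each target value.

    Targets are ranked, then assigned to folds in round-robin order so that
    every fold spans the full range of the outcome.
    """
    order = sorted(range(len(targets)), key=lambda i: targets[i])
    folds = [0] * len(targets)
    for rank, idx in enumerate(order):
        folds[idx] = rank % n_folds
    return folds


# Hypothetical finish times in minutes for 120 runners.
finish_times = [180 + (i * 37) % 120 for i in range(120)]
fold_ids = stratified_folds(finish_times)
```

Each fold then serves once as the validation set while the remaining four are used for fitting, which is the usual five-fold procedure.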

The fundamental mathematical framework for both models employed weighted training load calculations:

$$P(t)=f\left( \sum_{i=1}^{n} w_i \cdot TL_i(t) \cdot e^{-\lambda (t - t_i)} \right) \tag{5}$$

where P(t) represents predicted performance at time t, TLᵢ denotes the training load of session i, wᵢ represents the intensity-specific weighting factor applied to that session, tᵢ is the time at which session i occurred, and λ is the decay factor for the training stimulus over time [20].
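The weighted, exponentially decayed sum in Eq. (5) can be sketched numerically as below. The transfer function f is not defined in the source, so an identity placeholder is used; the zone-indexed weights, the decay value, and the choice to ignore sessions occurring after t are likewise illustrative assumptions.

```python
import math


def predicted_performance(t, sessions, weights, decay, f=lambda x: x):
    """Evaluate Eq. (5) for a list of sessions.

    sessions: iterable of (t_i, training_load, zone) tuples.
    weights:  dict mapping zone -> intensity-specific weight w_i.
    decay:    lambda, the decay factor per unit of time.
    f:        placeholder transfer function (identity by default).
    """
    total = 0.0
    for t_i, load, zone in sessions:
        if t_i > t:
            continue  # assumption: future sessions do not contribute
        total += weights[zone] * load * math.exp(-decay * (t - t_i))
    return f(total)


# Hypothetical example: two sessions, evaluated on day 7.
sessions = [(0, 100.0, 1), (7, 50.0, 3)]
weights = {1: 1.0, 2: 1.5, 3: 2.0}
score = predicted_performance(7, sessions, weights, decay=0.1)
```

In this example the day-0 session contributes 100·e^(−0.7) and the day-7 session contributes its full weighted load of 100, showing how older sessions are progressively discounted.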

The pyramidal model (P-ML) utilized progressive weighting factors reflecting the characteristic intensity distribution, while the polarized model (POL-ML) employed bimodal weighting emphasizing intensity extremes (Fig. 3). Model-specific loss functions incorporated intensity distribution constraints:

$$L_{polar}=\alpha \cdot L_{class}+\beta \cdot L_{perf}+\gamma \cdot L_{zone2} \tag{6}$$

where α and β balance classification accuracy and performance prediction, while γ weights the term that enforces training methodology-specific intensity distribution patterns.
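The structure of Eq. (6) can be sketched as a weighted sum of three terms. The source does not define the zone-2 term, so the squared hinge penalty on the zone-2 time share, the 10% cap, and the default weights below are purely hypothetical stand-ins chosen to show how such a constraint term might enter the loss.

```python
def zone2_penalty(zone2_fraction, cap=0.10):
    """Hypothetical constraint term: zero up to `cap`, quadratic above it."""
    return max(0.0, zone2_fraction - cap) ** 2


def polarized_loss(l_class, l_perf, zone2_fraction,
                   alpha=1.0, beta=1.0, gamma=1.0, cap=0.10):
    """Weighted sum in the form of Eq. (6).

    l_class and l_perf are precomputed classification and performance losses.
    """
    return alpha * l_class + beta * l_perf + gamma * zone2_penalty(zone2_fraction, cap)


# A plan within the cap incurs no constraint penalty; one above it does.
within_cap = polarized_loss(0.3, 0.5, zone2_fraction=0.05)
above_cap = polarized_loss(0.3, 0.5, zone2_fraction=0.30, gamma=2.0)
```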

Fig. 3. Comparison of pyramidal and polarized training intensity distributions.

Feature engineering generated 27 optimized variables across cardiovascular, biomechanical, training load, recovery, and athlete profile domains. Training load quantification employed both session rating of perceived exertion (sRPE × duration) and heart rate-based training impulse (TRIMP) calculations to capture training stress indicators. Model validation employed stratified cross-validation preserving performance distribution across all folds, following the systematic evaluation framework illustrated in Fig. 4. Performance evaluation incorporated multiple metrics including mean absolute error (MAE), root mean square error (RMSE), and coefficient of determination (R²), emphasizing generalization capability over training performance.
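The two training load measures named above can be sketched as follows. Session RPE load is the product of perceived exertion and duration, as stated in the text. For TRIMP, the source does not say which variant was used; the sketch assumes Banister's formulation with its commonly cited sex-specific coefficients, and the function names are hypothetical.

```python
import math


def session_rpe_load(rpe, duration_min):
    """Session RPE load: perceived exertion rating multiplied by minutes."""
    return rpe * duration_min


def banister_trimp(duration_min, hr_mean, hr_rest, hr_max, sex="male"):
    """Banister TRIMP (assumed variant) from mean session heart rate.

    Uses the fractional heart rate reserve and an exponential weighting with
    coefficients 0.64/1.92 (male) or 0.86/1.67 (female).
    """
    if hr_max <= hr_rest:
        raise ValueError("hr_max must exceed hr_rest")
    reserve = (hr_mean - hr_rest) / (hr_max - hr_rest)
    reserve = min(max(reserve, 0.0), 1.0)  # clamp to the valid range
    a, b = (0.64, 1.92) if sex == "male" else (0.86, 1.67)
    return duration_min * reserve * a * math.exp(b * reserve)


# Hypothetical 60-minute run at a mean heart rate of 150 bpm.
srpe = session_rpe_load(rpe=6, duration_min=50)
trimp = banister_trimp(60, hr_mean=150, hr_rest=50, hr_max=190)
```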

Fig. 4. Structure of marathon training effect evaluation.

Statistical analysis

All statistical analyses were conducted using R version 4.3.0 and Python 3.9.7, with statistical significance set at α = 0.05. Sample size determination followed established guidelines requiring 10–15 observations per predictor variable [10]. With 120 participants and 27 features, the study met the recommended ratio for robust machine learning model development. To address potential overfitting concerns given the feature-to-sample ratio, we employed several mitigation strategies: (1) feature selection using correlation analysis to reduce multicollinearity, (2) stratified 5-fold cross-validation with multiple iterations, (3) L2 regularization parameters optimized through Bayesian search, and (4) external validation procedures to assess generalization capability. Machine learning model validation employed stratified 5-fold cross-validation with performance evaluated using mean absolute error (MAE), root mean square error (RMSE), and coefficient of determination (R²). Feature importance was assessed using permutation-based methods to ensure ranking stability.
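The three evaluation metrics follow their standard definitions and can be computed as in the sketch below. The function name and the example finish times are illustrative and are not data from the study.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MAE, RMSE, and R² for paired observed and predicted values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)               # residual sum of squares
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    return {
        "mae": sum(abs(e) for e in errors) / n,
        "rmse": math.sqrt(ss_res / n),
        "r2": 1.0 - ss_res / ss_tot,
    }


# Hypothetical observed vs. predicted marathon times (minutes).
observed = [200, 210, 220, 230]
predicted = [202, 208, 223, 229]
metrics = regression_metrics(observed, predicted)
```

In a cross-validated setting these would be computed on each held-out fold and then averaged, so that the reported values reflect generalization rather than fit to the training data.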

Inter-individual variability in training responses was analyzed using mixed-effects models to partition variance components and quantify true individual differences beyond measurement error [18]. Between-group comparisons utilized independent t-tests for normally distributed data and Mann-Whitney U tests for non-normally distributed data. Repeated measures ANOVA assessed changes over time, with effect sizes calculated using Cohen’s d and partial eta squared. Individual response patterns were identified through k-means clustering with silhouette analysis for optimal cluster determination. Model interpretability employed SHAP values to quantify feature contributions to predictions. Hyperparameter optimization utilized Bayesian optimization with cross-validation to prevent overfitting. Missing data (< 3% of observations) were handled using multiple imputation with chained equations. All analyses followed intention-to-treat principles with sensitivity analyses conducted using per-protocol populations to assess result robustness.
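For the between-group effect size, Cohen's d with a pooled standard deviation can be sketched as below. The pooled-variance form is assumed, since the source does not state which variant of d was used, and the sample values are hypothetical.

```python
import math
import statistics


def cohens_d(group_a, group_b):
    """Cohen's d: mean difference divided by the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a = statistics.variance(group_a)  # sample variance (n - 1 denominator)
    var_b = statistics.variance(group_b)
    pooled_sd = math.sqrt(
        ((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)
    )
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd


# Hypothetical improvement scores for two small groups.
effect = cohens_d([2, 4, 6], [1, 3, 5])
```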
