Detecting command injection attacks in web applications based on novel deep learning methods

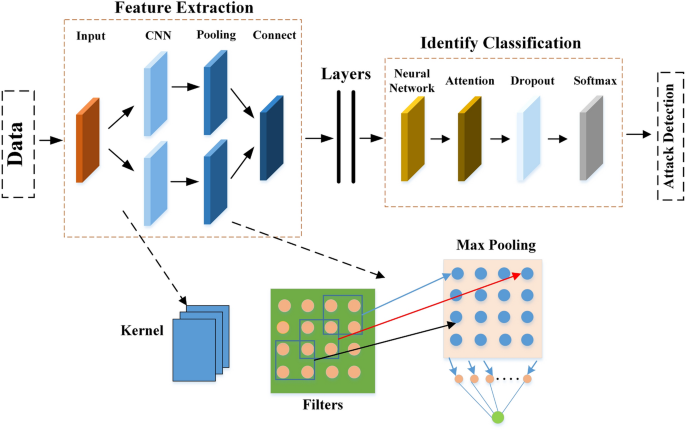

The overall workflow is divided into two primary phases: preprocessing and model recognition. During the preprocessing phase, the dataset is prepared and split into training and test sets. In the model recognition phase, the constructed model is trained and evaluated using these datasets. The overall structure of the model recognition process is illustrated in Fig. 3.

General structure diagram.

Data preprocessing

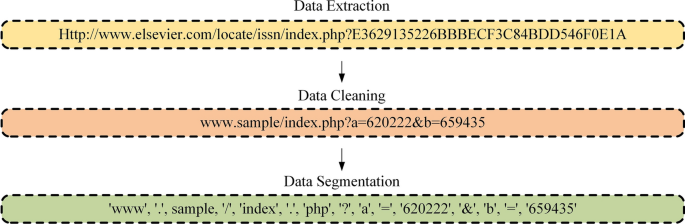

Preprocessing prepares raw data for subsequent analysis and modeling. Once the training dataset is determined, the data must be adapted to the input requirements of the model so that the model can learn useful patterns. This study preprocesses the collected command injection data in three steps: data extraction, data cleaning, and data segmentation, as depicted in Fig. 4.

Data extraction

Command injection data is extracted by analyzing the parameters within HTTP request packets. The detection process targets the parameter sections of each field in the HTTP data packets, which are subsequently reassembled into malicious traffic for further analysis. Python scripts are employed to extract and process the relevant information from the received data packets.
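The extraction step can be sketched roughly as follows. This is a minimal illustration, not the authors' script: the function name `extract_parameters` and the restriction to query strings and form-encoded POST bodies are assumptions made for the example.

```python
from urllib.parse import urlsplit, parse_qsl


def extract_parameters(raw_request: str) -> list[tuple[str, str]]:
    """Return (name, value) pairs from the query string and a form-encoded body.

    Hypothetical helper: it only handles a single, well-formed HTTP/1.x request.
    """
    head, _, body = raw_request.partition("\r\n\r\n")
    lines = head.split("\r\n")
    method, target, _version = lines[0].split(" ", 2)

    # parse_qsl percent-decodes each value once and turns '+' into a space.
    params = parse_qsl(urlsplit(target).query, keep_blank_values=True)

    headers = {}
    for line in lines[1:]:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()

    content_type = headers.get("content-type", "")
    if method == "POST" and content_type.startswith("application/x-www-form-urlencoded"):
        params += parse_qsl(body, keep_blank_values=True)
    return params


request = (
    "POST /ping?host=127.0.0.1%3Bls HTTP/1.1\r\n"
    "Host: example.com\r\n"
    "Content-Type: application/x-www-form-urlencoded\r\n"
    "\r\n"
    "cmd=cat+%2Fetc%2Fpasswd"
)
parameters = extract_parameters(request)
```

Each returned pair is one candidate parameter for the later cleaning and segmentation steps.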

Data cleaning

- Data decoding: Command injection attack code is often obfuscated using encoding techniques to evade detection. Before training, it is necessary to decode the input until it is in a form accepted by the target application, thereby deobfuscating the command injection code.

- Data stream processing: Communication processes may include elements such as image transmission, file uploads, and video streaming. The body of a POST request often contains redundant stream data, which is irrelevant for model training and unnecessarily increases data complexity. Therefore, we first identify the data streams and replace them with strings according to their types, as detailed in Table 4.

- Data normalization: To optimize model training, parameter names in the dataset are replaced with sequential alphabetic characters (e.g., a, b, c), as parameter names do not contribute to target identification. Additionally, the protocol component is removed, and the primary domain name is replaced with the placeholder string sample. These preprocessing steps ensure that the data is cleaned and streamlined, providing a more effective foundation for model training.
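The decoding and normalization steps above can be sketched as follows. This is a hedged illustration under stated assumptions: only percent-encoding is handled, the whole host is replaced by the placeholder, and the helper names `decode_fully` and `normalize` are hypothetical rather than taken from the paper.

```python
import string
from urllib.parse import parse_qsl, unquote, urlsplit


def decode_fully(text: str, max_rounds: int = 5) -> str:
    """Percent-decode repeatedly until the text stops changing."""
    for _ in range(max_rounds):
        decoded = unquote(text)
        if decoded == text:
            break
        text = decoded
    return text


def normalize(url: str) -> str:
    """Drop the protocol, replace the host with 'sample', rename parameters a, b, c, ..."""
    parts = urlsplit(url)
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    renamed = [
        # Assumption: at most 26 parameters matter; names wrap around after 'z'.
        f"{string.ascii_lowercase[i % 26]}={decode_fully(value)}"
        for i, (_name, value) in enumerate(pairs)
    ]
    query = "&".join(renamed)
    return f"sample{parts.path}" + (f"?{query}" if query else "")


cleaned = normalize(
    "http://shop.example.com/ping.php?host=127.0.0.1%253Bcat%2520%252Fetc%252Fpasswd&count=4"
)
```

In this example the double-encoded payload is decoded twice, so `cleaned` exposes the separator `;` and the command text that the encoding was hiding.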

Data segmentation

Given the critical role of special symbols in command injection attacks, a statistical analysis was conducted to identify the symbols most frequently associated with these attacks. This analysis identified 23 high-frequency special symbols: [’-’, ’_’, ’.’, ’=’, ’/’, ’?’, ’&’, ’:’, ’#’, ’+’, ’<’, ’>’, ’(’, ’)’, ’%’, ’=’, ””, ’|’, ’$’, ’’, ’’, ’;’, ’,’]. These symbols were selected as delimiters for the data segmentation process.

Utilizing these special symbols as segmentation criteria during data preprocessing enhances the accuracy and thoroughness of the segmentation process. This method enables the extraction of more refined data, which in turn supports the generation of comprehensive vector representations, thereby optimizing the effectiveness of model training and subsequent analysis.
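A minimal sketch of delimiter-based segmentation is given below. It uses only the delimiters that are legible in the symbol list, keeps each delimiter as its own token, and additionally splits on whitespace; the whitespace handling and the name `segment` are assumptions for illustration.

```python
import re

# Delimiters that are legible in the symbol list; unreadable entries are omitted.
DELIMITERS = "-_.=/?&:#+<>()%|$;,"

# The capturing group makes re.split keep each delimiter as a token;
# whitespace (outside the group) is split on and discarded.
_SPLITTER = re.compile("([" + re.escape(DELIMITERS) + r"])|\s+")


def segment(text: str) -> list[str]:
    """Split text into word tokens and single-symbol tokens."""
    return [token for token in _SPLITTER.split(text) if token]


tokens = segment("a=127.0.0.1;cat /etc/passwd")
```

Keeping the delimiters in the output, instead of discarding them, is what lets the later symbol channel see separators such as `;` and `|`.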

Model

Given the critical role of special symbols in command injection attacks, relying solely on Word2Vec embedding is insufficient to capture the essential information these symbols convey [22]. To address this limitation, we employ character embedding to preserve the effective information inherent in these special symbols.

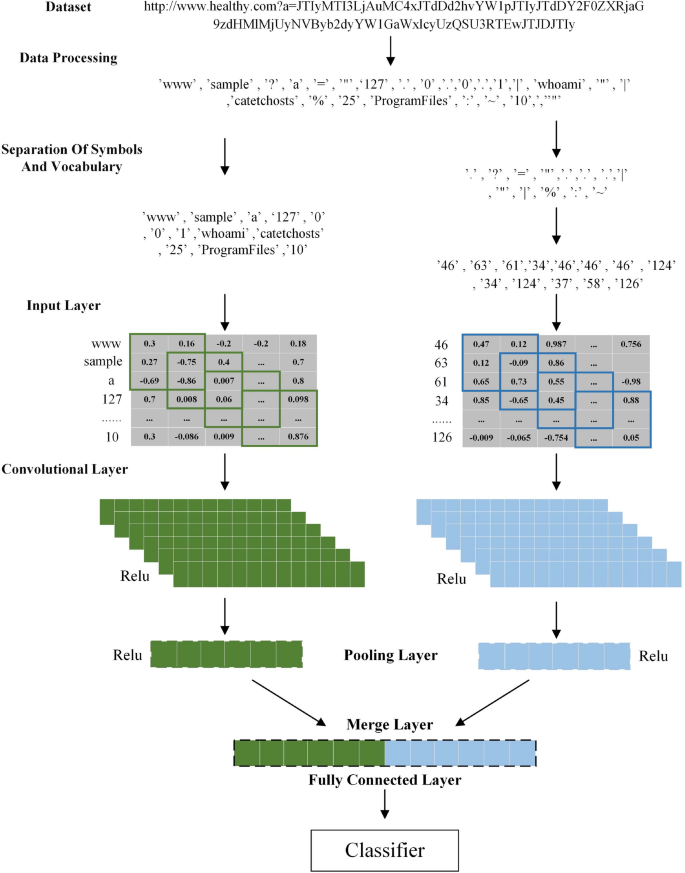

Following data preprocessing, the segmented data are divided into two categories: words and symbols. For the word component, we utilize the Word2Vec algorithm to train each word in the vocabulary. However, due to the unique characteristics of command injection attack traffic, special symbols are crucial features for identifying such attacks. Relying solely on Word2Vec embedding would inadequately retain the key information contained in these symbols. Therefore, we implement a character embedding-based feature representation method for the symbol component. In this approach, each symbol is first replaced with an integer value corresponding to its sequence and then mapped into feature vectors. These vectors are subsequently stacked to form a matrix, effectively preserving the critical information necessary for detecting command injection attacks.
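The symbol channel described above can be sketched as follows: tokens are split into words and symbols, each symbol is replaced by an integer index, and the indices select rows of an embedding table that are stacked into a matrix. The table here is randomly initialized for illustration; the dimension, the padding index 0, and all function names are assumptions.

```python
import random

# Symbols legible in the delimiter list; index 0 is reserved for padding (assumption).
SYMBOLS = list("-_.=/?&:#+<>()%|$;,")
SYMBOL_TO_ID = {symbol: index + 1 for index, symbol in enumerate(SYMBOLS)}


def split_channels(tokens):
    """Separate segmented tokens into the word set W and the symbol set C."""
    words = [t for t in tokens if t not in SYMBOL_TO_ID]
    symbols = [t for t in tokens if t in SYMBOL_TO_ID]
    return words, symbols


def build_embedding_table(vocab_size, dim, seed=0):
    """Random table standing in for embeddings that would be learned in training."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in range(vocab_size)]


def embed_symbols(symbols, table):
    """Map each symbol to its integer id, then stack the matching table rows."""
    return [table[SYMBOL_TO_ID[s]] for s in symbols]


words, symbols = split_channels(["cat", "/", "etc", ";", "ls"])
table = build_embedding_table(vocab_size=len(SYMBOLS) + 1, dim=8)
symbol_matrix = embed_symbols(symbols, table)  # shape: q symbols x 8 dimensions
```

The word list would go through a trained Word2Vec model instead, which is omitted here.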

Feature extraction design

The proposed model structure for extracting command injection attack features consists of two main components: the input layer and the convolutional layer. The input layer is responsible for text embedding, while the convolutional layer is dedicated to feature extraction.

- Input layer: We employ dual-channel embedding for text processing. The preprocessed input comprises n words and symbols, denoted as \(D=\{a_1, a_2, \dots, a_n\}\), where each \(a_i\) (\(i=1, 2, \dots, n\)) represents a segmented word or symbol. Initially, we separate the words and symbols within D, dividing it into a word set \(W=\{w_1, w_2, \dots, w_p\}\), containing p words, and a symbol set \(C=\{c_1, c_2, \dots, c_q\}\), containing q symbols. For embedding, we apply two distinct methods: the word vector model from Jang et al. [23] for the word set W, and a character mapping-based symbol vector for the symbol set C. These processes generate two embedding matrices: a word vector matrix and a symbol vector matrix. The resulting vector matrices are subsequently used as inputs to the convolutional layer.

- Convolutional layer: The word and symbol vector matrices generated by the input layer are processed through two distinct CNN channels for feature extraction. CNN channel 1, based on the word matrix: After the word set \(W=\{w_1, w_2, \dots, w_p\}\) in the input is converted into a feature matrix through the input layer, we use convolution kernels to extract features from W. The sizes of the convolution kernels are 3, 4, and 5, and the data they extract are the feature data of unigrams, bigrams, and trigrams in W. For the jth convolution kernel, \(j \in \{11, 12, 13\}\), we represent the weight matrix as \(U^j \in \mathbb{R}^{C_j \times m_j}\) and the bias vector as \(b^j \in \mathbb{R}^{C_j}\), where \(C_j\) represents the dimensionality of the output and \(m_j = h_j \times m\), where m represents the dimensionality of the word embedding and \(h_j\) represents the size of the combined word embedding feature. We apply each convolution kernel to \(W=\{w_1, w_2, \dots, w_p\}\) to generate the output vector \(S^j=[s^j_1, s^j_2, \dots, s^j_{C_j}]\), where \(S^j\) represents the feature vector extracted by the jth convolution kernel. The output vectors of the three convolution kernels (corresponding to \(j=11, 12, 13\)) are then concatenated, as expressed in Eq. 1:

$$\begin{aligned} s^1 &= [s^{11}, s^{12}, s^{13}], & s^1 &\in \mathbb{R}^{C_{11} + C_{12} + C_{13}} \end{aligned}$$

(1)

The generated \(s^1\) is used as the output vector of the extracted word matrix. CNN channel 2, based on the symbol matrix: After the symbol set \(C=\{c_1, c_2, \dots, c_q\}\) in the input is converted into a feature matrix through the input layer, we extract the feature vector with the same method as in CNN channel 1. Convolution kernels of the same form are applied to \(C=\{c_1, c_2, \dots, c_q\}\), generating the output vector \(S^j=[s^j_1, s^j_2, \dots, s^j_{C_j}]\). Finally, we concatenate the output vectors of the three convolution kernels (corresponding to \(j=21, 22, 23\)), as expressed in Eq. 2:

$$\begin{aligned} s^2 &= [s^{21}, s^{22}, s^{23}], & s^2 &\in \mathbb{R}^{C_{21} + C_{22} + C_{23}} \end{aligned}$$

(2)

Feature extraction structure

The complete architecture of our proposed dual CNN model is illustrated in Fig. 5. For preprocessed inputs, the output from the input layer is directed to the corresponding CNN channel. The output of the convolutional layer is computed using the formula \(p_i = g(x_i *W + b)\) where b represents the bias and W denotes the weight matrix. The resulting data from the convolutional layer is subsequently passed through the max-pooling layer, which selects the maximum feature from the extracted convolutional outputs. Following the convolutional and fully connected layers, a merging layer concatenates the extracted word and symbol features. The output of this merging layer is a concatenated sequence derived from both CNN channels, integrating the convolutional and pooling features. This concatenated sequence represents the global vector, concluding the feature extraction process.
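The convolution, max-pooling, and merging steps can be sketched in plain Python as below. This is a simplified illustration, not the trained model: weights are random, the activation g is assumed to be ReLU, short inputs are zero-padded, the fully connected layer is omitted, and the number of filters per kernel size (4) is an arbitrary choice.

```python
import random


def conv_max_pool(matrix, kernel_size, num_filters, rng):
    """Apply num_filters kernels spanning kernel_size rows, then max-pool each filter."""
    dim = len(matrix[0])
    # Zero-pad short inputs so that at least one window exists (assumption).
    rows = matrix + [[0.0] * dim] * max(0, kernel_size - len(matrix))
    # Each window flattens kernel_size consecutive embeddings: length h_j * m.
    windows = [
        [x for row in rows[i:i + kernel_size] for x in row]
        for i in range(len(rows) - kernel_size + 1)
    ]
    pooled = []
    for _ in range(num_filters):
        weights = [rng.uniform(-0.1, 0.1) for _ in range(kernel_size * dim)]
        bias = 0.0
        # p_i = g(x_i * W + b), with g assumed to be ReLU.
        responses = [
            max(0.0, sum(w * x for w, x in zip(weights, window)) + bias)
            for window in windows
        ]
        pooled.append(max(responses))  # max-pooling over all positions
    return pooled


def channel_features(matrix, kernel_sizes=(3, 4, 5), num_filters=4, seed=0):
    """Concatenate the pooled outputs of all kernel sizes (Eq. 1 or Eq. 2)."""
    rng = random.Random(seed)
    features = []
    for size in kernel_sizes:
        features += conv_max_pool(matrix, size, num_filters, rng)
    return features


def global_vector(word_matrix, symbol_matrix):
    """Merge the word-channel and symbol-channel features into one vector."""
    return channel_features(word_matrix, seed=1) + channel_features(symbol_matrix, seed=2)


word_matrix = [[0.1 * i, 0.2, -0.1] for i in range(6)]    # 6 words, m = 3
symbol_matrix = [[0.3, -0.2 * i, 0.1] for i in range(2)]  # 2 symbols, m = 3
merged = global_vector(word_matrix, symbol_matrix)
```

With three kernel sizes and four filters each, every channel contributes 12 values, so the merged vector has 24 entries.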

Double convolution channel structure diagram.

Identify classification

The proposed architecture is designed to enhance classification accuracy by aggregating multiple features. The data is first preprocessed and then passed through the input layer, where it is converted into an embedding vector matrix. The feature extraction layer then captures command injection attack features from this vector matrix for modeling. The BiLSTM model captures contextual features by integrating both forward and backward hidden layers. An attention mechanism layer processes these contextual features, enabling the model to focus on words and symbols associated with command injection keywords and thus improving the model’s understanding of sentence semantics. After processing through the attention layer, the data is fed into the softmax classifier.

Given the critical importance of keywords in sentence semantics, assigning differential weights to these keywords was deemed essential for precise sentence interpretation. The attention mechanism assigns varying weights to each keyword, enhancing the model’s ability to recognize and interpret the sentence’s meaning.

The BiLSTM network was employed as the recognition mechanism, effectively overcoming the limitations of traditional LSTM models and enhancing text classification performance by efficiently extracting local contextual information. BiLSTM processes both forward LSTM (denoted as \(\overrightarrow{\text{LSTM}}\)) information, from \(Bc_1\) to \(Bc_m\) (m represents the number of words in the text), and backward LSTM (denoted as \(\overleftarrow{\text{LSTM}}\)) information, from \(Bc_m\) to \(Bc_1\). By integrating information from both directions, the model constructs a comprehensive context for the sentence. The outputs of the BiLSTM network are expressed by Eqs. 3 and 4.

$$\begin{aligned} \overrightarrow{h_f} = \overrightarrow{\text{LSTM}}\left( Bc_n\right) ,\ n \in \left[ 1, m\right] \end{aligned}$$

(3)

$$\begin{aligned} \overleftarrow{h_b} = \overleftarrow{\text{LSTM}}\left( Bc_n\right) ,\ n \in \left[ m, 1\right] \end{aligned}$$

(4)

The annotation of a given feature sequence \(Bc_n\) is obtained through the forward hidden state \(\overrightarrow{h_f}\) and the backward hidden state \(\overleftarrow{h_b}\). The model derives the total hidden state \(\overrightarrow{h_c}\) by concatenating the forward hidden state \(\overrightarrow{h_f}\) and the backward hidden state \(\overleftarrow{h_b}\), as shown in Eq. 5.

$$\begin{aligned} \overrightarrow{h_c} = [\overrightarrow{h_f}, \overleftarrow{h_b}] \end{aligned}$$

(5)
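The bidirectional pass and the concatenation in Eq. 5 can be illustrated with the sketch below. To keep it short, a plain tanh recurrent cell with fixed scalar weights stands in for the LSTM cell; this substitution, the weights, and the function names are assumptions, and only the forward scan, backward scan, and per-position concatenation mirror Eqs. 3 to 5.

```python
import math


def simple_cell(x, h, w_x=0.5, w_h=0.3):
    """Stand-in recurrent cell (plain tanh RNN, not a full LSTM)."""
    return [math.tanh(w_x * xi + w_h * hi) for xi, hi in zip(x, h)]


def run_direction(sequence, reverse=False):
    """Scan the sequence in one direction and return one hidden state per position."""
    order = list(reversed(sequence)) if reverse else sequence
    h = [0.0] * len(sequence[0])
    states = []
    for x in order:
        h = simple_cell(x, h)
        states.append(h)
    # Re-align backward states so that states[n] belongs to position n.
    return states[::-1] if reverse else states


def bidirectional(sequence):
    """Concatenate forward and backward states at each position (Eq. 5)."""
    forward = run_direction(sequence)
    backward = run_direction(sequence, reverse=True)
    return [f + b for f, b in zip(forward, backward)]


features = [[0.1, 0.2], [0.3, -0.1], [0.0, 0.5]]  # Bc_1 .. Bc_3
hidden = bidirectional(features)
```

Each entry of `hidden` has twice the size of a single-direction state, since it holds both the forward and the backward state for that position.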

The attention mechanism was specifically designed to focus on keyword features, thereby reducing the influence of non-keyword elements on the overall text semantics. It functions as a fully connected layer combined with a softmax function. The attention mechanism’s operation is described by the following equations:

$$\begin{aligned} \overrightarrow{u_f} = \tanh (w*\overrightarrow{h_c} + b) \end{aligned}$$

(6)

$$\begin{aligned} \overrightarrow{a_f} = \frac{\exp \left( \overrightarrow{u_f} *\overrightarrow{v_f}\right) }{\sum _{i=1}^{M} \exp \left( \overrightarrow{u_f} *\overrightarrow{v_f}\right) } \end{aligned}$$

(7)

$$\begin{aligned} A_c = \sum \left( \overrightarrow{a_f}*\overrightarrow{h_c}\right) \end{aligned}$$

(8)

As indicated by Eq. 6, the word annotation \(\overrightarrow{h_c}\) is first processed through a perceptron layer, resulting in \(\overrightarrow{u_f}\), which represents the hidden state of \(\overrightarrow{h_c}\). Here, w and b represent the weights and biases in the neuron, and \(\tanh (\cdot )\) is the hyperbolic tangent function. The model uses \(\overrightarrow{u_f}\) and the word-level context vector \(\overrightarrow{v_f}\) to assess the importance of each word.

Equation 7 employs the softmax function to obtain the normalized weight \(\overrightarrow{a_f}\), where M is the number of words in the text, and \(\exp (\cdot )\) is the exponential function. The word-level context vector \(\overrightarrow{v_f}\) serves as a high-level representation of word information and is randomly initialized and jointly learned during the training process. The weighted sum of the forward reading word annotations is calculated based on the weight \(\overrightarrow{a_f}\) to produce the forward context representation \(A_c\).

\(A_c\), represented by Eq. 8, is the output of the attention layer.
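Equations 6 to 8 can be traced with the small sketch below. It is an illustration under assumptions: w is taken to be a matrix applied to each hidden state, the parameters are fixed by hand rather than learned, and the softmax subtracts the maximum score for numerical stability, a detail the equations do not mention.

```python
import math


def attention(hidden_states, w, b, v):
    """Return (weights, context) for a sequence of hidden states."""
    scores = []
    for h in hidden_states:
        # Eq. 6: u = tanh(w * h + b)
        u = [
            math.tanh(sum(w_ij * h_j for w_ij, h_j in zip(row, h)) + b_i)
            for row, b_i in zip(w, b)
        ]
        # Similarity between u and the context vector v
        scores.append(sum(u_i * v_i for u_i, v_i in zip(u, v)))

    # Eq. 7: softmax over the M positions
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    # Eq. 8: weighted sum of the hidden states
    dim = len(hidden_states[0])
    context = [
        sum(a * h[k] for a, h in zip(weights, hidden_states)) for k in range(dim)
    ]
    return weights, context


states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, context = attention(
    states,
    w=[[1.0, 0.0], [0.0, 1.0]],  # identity weights, chosen for readability
    b=[0.0, 0.0],
    v=[1.0, 1.0],
)
```

In this toy input the third state aligns best with v, so it receives the largest weight and dominates the context vector.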

In our method, the Adam optimizer [24] is employed to minimize the network’s loss function. The Adam optimizer fine-tunes the model parameters, and the loss function is shown in Eq. 9.

$$\begin{aligned} L_{\text{total}} = -\frac{1}{\text{num}} \sum _{i=1}^{\text{num}} \sum _{j=1}^{\text{classes}} y_{ij} \ln o_{ij} \end{aligned}$$

(9)

Here, “num” is the number of samples, “classes” represents the number of categories, \(y_{ij}\) denotes the label of sample i for category j, and \(o_{ij}\) represents the model’s predicted probability that sample i belongs to category j.
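As a worked illustration of Eq. 9, the loss can be computed directly from one-hot labels and predicted probabilities. The function name and the small `eps` guard against taking the logarithm of zero are assumptions added for the example.

```python
import math


def cross_entropy(labels, probs, eps=1e-12):
    """Eq. 9: mean over samples of -sum_j y_ij * ln(o_ij)."""
    num = len(labels)
    total = 0.0
    for y_row, o_row in zip(labels, probs):
        for y, o in zip(y_row, o_row):
            total += y * math.log(max(o, eps))  # eps avoids log(0)
    return -total / num


labels = [[1, 0], [0, 1]]          # sample 1 is class 1, sample 2 is class 2
probs = [[0.9, 0.1], [0.2, 0.8]]   # predicted probabilities
loss = cross_entropy(labels, probs)
```

Only the probability assigned to the true class of each sample contributes, so here the loss equals \(-(\ln 0.9 + \ln 0.8)/2\).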

The complete model process is detailed in Table 5, while the core pseudo-code is presented in Table 6.
