Deep learning method for detecting fluorescence spots in cancer diagnostics via fluorescence in situ hybridization

Sample preparation

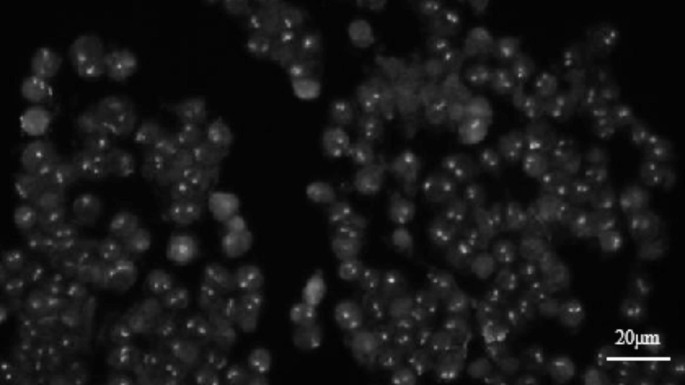

We conducted experiments on the patient’s leukocytes using the AML1/ETO fusion gene detection kit. In the FISH experiment, whole blood preprocessing is performed first by adding a lysis buffer to induce hemolysis, followed by immersion in KCl solution and fixation using methanol-acetic acid. Next, phosphate-buffered saline (PBS) is used for sample loading and washing. Then, denaturation and hybridization are conducted by adding probe buffer, AML1/ETO probe, and mineral oil, completing the hybridization at specific temperatures and pressures. After hybridization, samples are washed sequentially with sodium citrate buffer (SSC), post-hybridization wash solution, and deionized (DI) water. Finally, DAPI is used for staining to facilitate the observation of cell nuclei. The ETO probe was labeled with an orange-red fluorophore, and the AML1 probe was labeled with a green fluorophore. The probes were hybridized to the target detection sites using in situ hybridization technology. Green light (with a wavelength in the range of 500–550 nm) is used to excite the ETO probe, while blue light (with a wavelength in the range of 450–490 nm) is used to excite the AML1 probe. Since the fluorescent spots formed by the two probes have different colors but nearly identical shapes, we obtain their grayscale images for model training.

Collection of FISH images

The inverted fluorescence microscope was an OLYMPUS IX83 equipped with a fluorescence illumination system and operated in widefield imaging mode. The objective lens was an OLYMPUS LUCPLFLN 60X with a numerical aperture (NA) of 0.7 and a magnification of 60×. Images were acquired with a QIMAGING optiMOS sCMOS camera. This combination of camera and objective lens was chosen to balance imaging resolution, the requirements of the detection task, and the availability of equipment under the current experimental conditions. All biological experiments and FISH image acquisition in this study were carried out by professionals from our laboratory. Each pixel corresponds to an actual distance of approximately 0.1 μm, and each FISH spot is about 5 pixels in size. Figure 1 shows representative images along with their scale bars.

We annotated 199 FISH fluorescent spot images, each with a size of 1920 × 1080, using Labelme software. The annotation process is illustrated in Fig. 2.

To address the limited number of images, we augmented the dataset to increase the diversity of training samples, reduce overfitting, and improve the generalization capability of the network. The augmentation techniques included rotation, cropping, mirroring, and the addition of Gaussian noise. This yielded 995 images of fluorescent spots, each 1920 × 1080 pixels in size and containing 100 to 200 spots. The dataset was split into a training set and a validation set at a ratio of approximately 9:1, comprising 895 and 100 images, respectively.
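Two of the augmentations listed above, mirroring and Gaussian noise, can be sketched in a few lines. This is a minimal illustration rather than the pipeline used in this study: the function names, the `(x1, y1, x2, y2)` box format, the noise level `sigma`, and the tiny example image are all assumptions. It mainly shows that a geometric augmentation must remap the annotated boxes together with the pixels.

```python
import random


def mirror_horizontal(image, boxes):
    """Flip a grayscale image left-right and remap (x1, y1, x2, y2) boxes."""
    width = len(image[0])
    flipped = [row[::-1] for row in image]
    # A box edge at x moves to width - x, so the left and right edges swap.
    new_boxes = [(width - x2, y1, width - x1, y2) for (x1, y1, x2, y2) in boxes]
    return flipped, new_boxes


def add_gaussian_noise(image, sigma=5.0, seed=None):
    """Add zero-mean Gaussian noise and clip to the 8-bit range [0, 255]."""
    rng = random.Random(seed)
    return [[min(255, max(0, round(p + rng.gauss(0.0, sigma)))) for p in row]
            for row in image]


# Hypothetical 2 x 4 grayscale image with one annotated spot box.
image = [[0, 10, 20, 30],
         [40, 50, 60, 70]]
boxes = [(0, 0, 2, 1)]  # covers pixel columns 0 and 1 of the first row

flipped, flipped_boxes = mirror_horizontal(image, boxes)
noisy = add_gaussian_noise(image, sigma=5.0, seed=0)
```

After mirroring, the box that covered the two leftmost columns covers the two rightmost ones, so the annotation still encloses the same pixels.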

Proposed method for fluorescent spot detection

To date, YOLOv10 is the latest advancement in the YOLO series23,24. However, we chose YOLOv8 for our analysis. The rationale for this choice is given in the subsequent sections, which discuss its suitability for our application in terms of processing efficiency and performance on FISH data. The official YOLOv8 code offers several networks; this article focuses on YOLOv8, YOLOv8-P2, and YOLOv8-P6 for object detection. YOLOv8-P2 adds a small-object detection head, and YOLOv8-P6 adds a large-object detection head. The additional head in YOLOv8-P2 improves the detection of small objects such as fluorescent spots, which significantly improves accuracy in scenarios where small-object detection is critical.

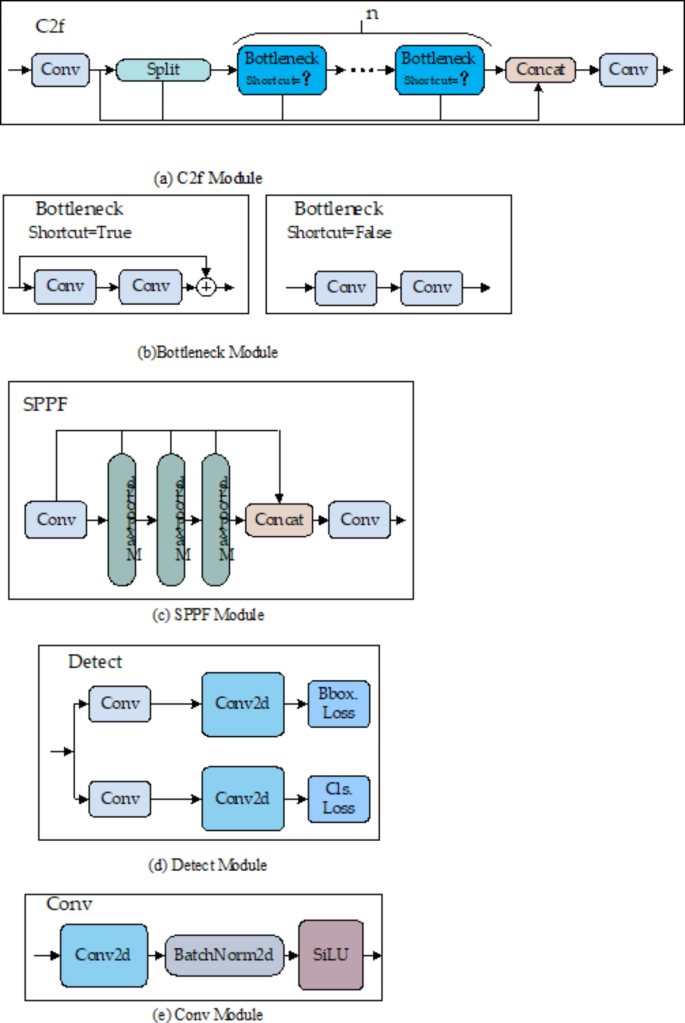

The YOLOv8 model comprises several modules, including C2f (a faster implementation of the cross-stage partial bottleneck with two convolutions), Bottleneck, SPPF (Spatial Pyramid Pooling-Fast), Detect, and Conv. The C2f module uses cross-stage partial feature fusion to integrate low-level and high-level feature maps, which improves the model’s detection precision and processing speed. The Bottleneck architecture reduces the number of feature map channels, decreasing the computational burden. It incorporates residual connections to mitigate vanishing gradients and includes a 3 × 3 convolutional layer to expand the receptive field. SPPF applies spatial pyramid pooling, enabling the model to handle objects of various sizes within a single image by aggregating features from multiple receptive fields. The Detect module processes the outputs from the Neck module, which integrates inputs from the C2f, Bottleneck, SPPF, Detect, and Conv modules, as depicted in Fig. 3.

YOLO-SEM network module structure diagram.

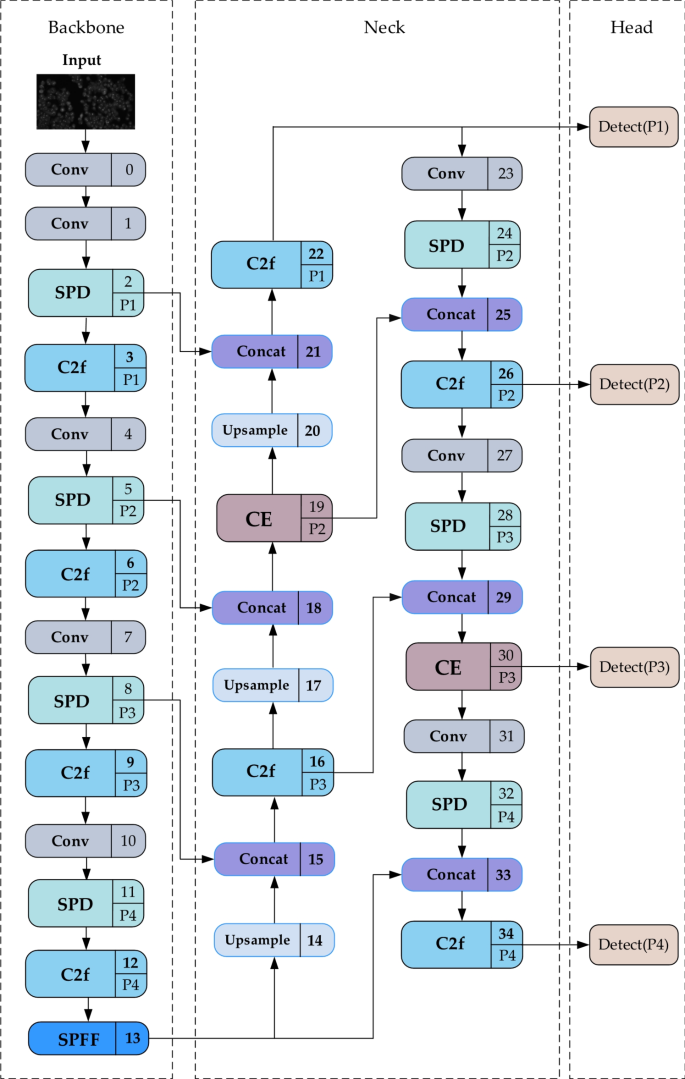

YOLO-SEM (YOLO-small object enhancement model) network framework

Inspired by the referenced models, we introduce YOLO-SEM, consisting of a backbone, neck, and head components. The backbone serves to extract relevant image information for use in subsequent network layers. This component enhances efficiency and performance, while simultaneously lowering the computational complexity involved in feature extraction. Positioned between the backbone and the head, the neck optimizes the use of extracted features and aids in feature fusion. The head utilizes these features to improve recognition capabilities.

The input image containing fluorescent spots is divided by the network into an N × N grid, with the grid partitioning performed automatically using the k-means algorithm25. Although this grid-based approach has some disadvantages when handling multiple adjacent spots, it also offers several key advantages. Because the entire image is processed in a single forward pass, the model can take the global context into account, which helps reduce the effect of background noise and improves target localization. The approach is also fast, enabling real-time or near-real-time analysis, which is particularly beneficial in high-throughput scenarios or when processing large datasets. Each cell of the grid is examined for targets, and a cell is responsible for detecting a target if the target’s center falls within it. The network then predicts bounding boxes for each cell and assigns a confidence score to each box. The confidence score is defined by Eq. (1):

$$Conf=P \times IoU_{truth}^{pred},\quad P \in \{0,1\}$$

(1)

The variable P is set to 1 when an object is present in the grid cell; otherwise, it is 0. The IoU quantifies the overlap between the predicted and ground truth bounding boxes. The confidence score therefore indicates both whether a grid cell contains an object and how precisely the bounding box encloses it. When multiple bounding boxes identify the same target, the YOLO network applies non-maximum suppression (NMS) to select the best box and eliminate redundant detections. Detection boxes are sorted in descending order of confidence. Starting with the highest-confidence box, the NMS algorithm calculates its IoU with each of the remaining boxes and discards those whose IoU with the reference box exceeds a predefined threshold. The process is repeated, each time taking the highest-confidence remaining box as the reference, until all boxes have been evaluated. NMS thus ensures that only one representative box per target appears in the final results.
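The NMS procedure described above can be sketched as follows. This is a plain-Python illustration under stated assumptions, not the YOLO implementation itself: the `(x1, y1, x2, y2)` box format, the threshold of 0.5, and the example boxes (roughly 5-pixel spots) are hypothetical.

```python
def iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Return the indices of the kept boxes, highest confidence first."""
    # Sort candidate indices by descending confidence.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)  # highest-confidence remaining box is the reference
        keep.append(best)
        # Discard boxes that overlap the reference by more than the threshold.
        order = [i for i in order if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep


# Two overlapping detections of one spot and one separate spot.
boxes = [(10, 10, 15, 15), (11, 10, 16, 15), (40, 40, 45, 45)]
scores = [0.9, 0.8, 0.7]
kept = nms(boxes, scores, iou_threshold=0.5)
```

In this example the first two boxes have an IoU of 20/30, which exceeds the threshold, so only the higher-confidence one is retained alongside the isolated third box.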

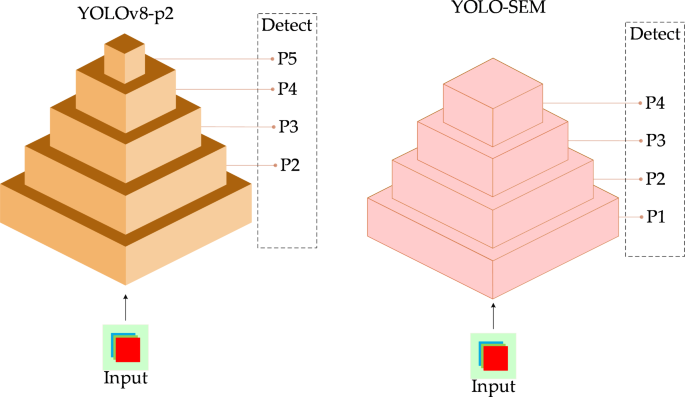

In object detection tasks, detection heads are classified by the size of their corresponding feature maps. Larger detection heads are associated with feature maps of lower resolution, while smaller detection heads are linked to feature maps of higher resolution. To improve the management of small objects in object detection tasks, we have chosen to replace the large object detection head with a smaller one specifically designed for small objects, as shown in Fig. 4. This modification allows the model to concentrate more effectively on small objects, thereby improving their detection rates and contributing to targeted optimization. In the YOLO-SEM model, four feature map sizes are organized in descending order with varying resolutions, labeled as P1, P2, P3, and P4. Employing multi-scale feature maps and different sizes of detection heads enables YOLO-SEM to comprehensively detect a wide range of objects, thus enhancing overall detection performance.

Comparison of YOLO-SEM and YOLOv8-P2 models.

Our experiments showed that the P2 and P3 detection heads significantly influence the accuracy of fluorescent spot detection. An excess of attention mechanisms risks overfitting, reduced generalization, higher computational demands, and longer training and inference times. To mitigate these risks, we applied the ECA mechanism only to the feature maps of the sizes corresponding to P2 and P3. This strategy gives precise control over where the attention mechanism is applied, balancing model performance and complexity. Furthermore, it enhances the model’s robustness, making it more viable for real-world applications. The model structure is depicted in Fig. 5.

SPD module

The YOLO series of architectures excels in various computer vision tasks, including object detection and image classification26,27,28,29. However, its performance declines notably when processing low-resolution images or detecting small objects30,31. This decline can be attributed to the strided convolution and pooling layers prevalent in YOLO architectures, which lead to reduced feature representation and a loss of precision. These adverse effects usually go unnoticed because the images analyzed generally have high resolution and contain large objects, allowing the model to skip redundant pixel information while still learning features effectively. However, this assumption of redundancy fails for the FISH image dataset, where images are blurry and objects are small, resulting in the loss of fine-grained information, insufficient feature learning, and a marked reduction in the model’s detection capability.

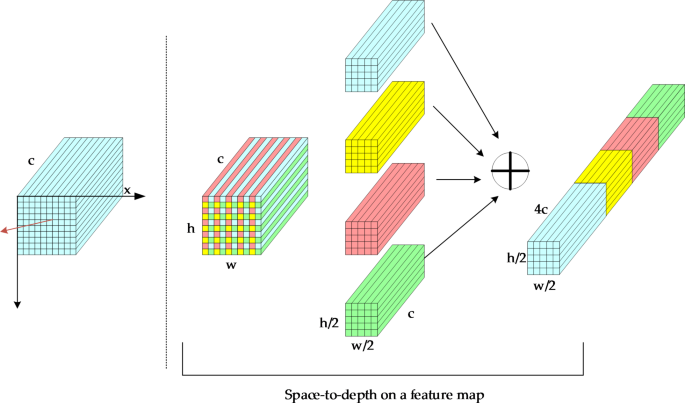

To resolve this challenge, we integrated the SPD module32 into YOLO-SEM. This module uses a space-to-depth layer to downsample feature maps, retaining information in the channel dimension and minimizing data loss. Furthermore, the Conv module in YOLO-SEM uses a stride of 1, which avoids additional downsampling and reduces the total number of downsampling operations in the model, thereby maintaining the spatial resolution of the input data. Figure 6 depicts the SPD module.

Space-to-depth when step = 2.

Assuming that each feature map F is of size W × H × C, the feature map is sliced into sub-feature sequences \(F_{m,n}\), as shown in Eq. (2):

$$F_{m,n}=F[m:W:step,\; n:H:step],\quad m,n \in \{0,1,\ldots,step-1\}$$

(2)

Next, these sub-feature sequences are concatenated along the channel dimension to obtain a feature map \(F^{\prime}\) of size \(\frac{W}{step} \times \frac{H}{step} \times (C \times step^2)\), and this new feature map is then passed to the next layer.
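The space-to-depth rearrangement can be illustrated with a short sketch. It assumes a feature map stored as a nested list indexed as rows × columns × channels; the function name `space_to_depth` is hypothetical, and the order in which sub-maps are concatenated (which only affects channel order) is a choice made here for illustration.

```python
def space_to_depth(fmap, step=2):
    """Rearrange an H x W x C nested list into (H/step) x (W/step) x (C*step^2)."""
    height, width = len(fmap), len(fmap[0])
    if height % step or width % step:
        raise ValueError("height and width must be divisible by step")
    # Sub-feature maps: every step-th row starting at m, every step-th column starting at n.
    subs = [[row[n::step] for row in fmap[m::step]]
            for m in range(step) for n in range(step)]
    out_h, out_w = height // step, width // step
    # Concatenate the step^2 sub-maps along the channel axis.
    return [[[v for sub in subs for v in sub[i][j]] for j in range(out_w)]
            for i in range(out_h)]


# 4 x 4 single-channel feature map whose values are 0..15 in row-major order.
fmap = [[[r * 4 + c] for c in range(4)] for r in range(4)]
out = space_to_depth(fmap, step=2)
```

With step = 2 the 4 × 4 × 1 input becomes 2 × 2 × 4: each output position holds the four values of one 2 × 2 input neighbourhood, so the spatial size is halved without discarding any value.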

CE module

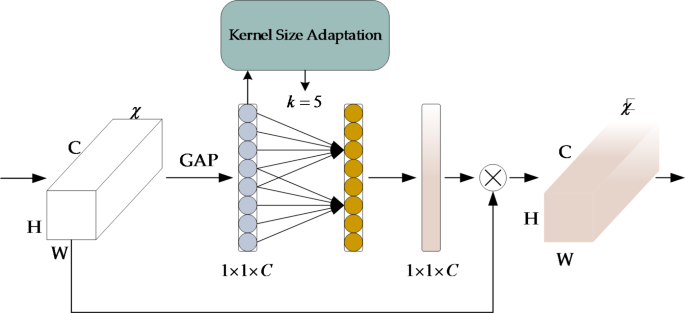

The attention mechanism has gained significant attention in recent years due to its outstanding performance across various applications33,34,35,36. While current approaches often aim to improve overall model effectiveness by designing increasingly complex attention modules, this usually results in higher model complexity. In contrast, the ECA module37 enhances model performance with only a slight increase in computational cost and facilitates cross-channel interactions in learning channel attention without reducing channel dimensionality, as depicted in Fig. 7.

Global average pooling (GAP) aggregates the input feature map \(\chi \in R^{W \times H \times C}\) into a single real value per channel, giving the feature \(\chi_{avg}\), as shown in Eq. (3):

$$\left\{ \begin{array}{l} \chi_{avg}=GAP(\chi) \\ GAP(\chi)=\frac{1}{W \times H}\sum\nolimits_{i=1,j=1}^{W,H} \chi_{i,j} \end{array} \right.$$

(3)

ECA generates channel weights through a fast one-dimensional convolution of kernel size k, where k is determined adaptively from the channel dimension C. The kernel size k sets the extent of cross-channel interaction and is related to the channel dimension through a mapping \(\phi\), as shown in Eq. (4):

$$C=\phi(k)$$

(4)

If the mapping is represented by the linear function \(\phi(k)=\lambda \times k - b\), its capacity to describe feature relationships is notably restricted. Consequently, the linear function is extended to a nonlinear one to enhance its representational capability. Because the channel dimension C (number of filters) is typically set to a power of 2, an exponential form is used, as defined in Eq. (5):

$$C=\phi(k)=2^{(\gamma \times k - b)}$$

(5)

The kernel size k can then be determined adaptively as follows:

$$k=\psi(C)=\left| \frac{\log_2(C)}{\gamma}+\frac{b}{\gamma} \right|_{odd}$$

(6)

Where \(\left| t \right|_{odd}\) represents the nearest odd integer to t. Activation values for one-dimensional convolutional outputs are derived using the Sigmoid function. The ECA algorithm preserves channel dimensions and assesses inter-channel relationships to address noise-induced disturbances, thereby enhancing the model’s noise reduction capabilities.
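The ECA steps above (global average pooling, a one-dimensional convolution across channels with an adaptively chosen kernel size, and a Sigmoid gate) can be sketched as follows. The values γ = 2 and b = 1 are assumptions, since this section does not state them, and the function names, the nested-list layout, and the fixed kernel weights are purely illustrative; in a trained network the kernel weights are learned.

```python
import math


def eca_kernel_size(channels, gamma=2, b=1):
    """Adaptive kernel size: nearest odd integer to log2(C)/gamma + b/gamma."""
    t = int(abs(math.log2(channels) / gamma + b / gamma))
    # Truncate, then move even values up to the next odd integer.
    return t if t % 2 == 1 else t + 1


def eca_attention(fmap, weights):
    """Apply channel attention to an H x W x C nested list.

    weights is the 1D convolution kernel (odd length k).
    Returns the reweighted feature map and the per-channel gate.
    """
    if len(weights) % 2 == 0:
        raise ValueError("kernel length must be odd")
    height, width, channels = len(fmap), len(fmap[0]), len(fmap[0][0])
    # Global average pooling: one real value per channel.
    pooled = [sum(fmap[i][j][c] for i in range(height) for j in range(width))
              / (height * width) for c in range(channels)]
    # 1D convolution over neighbouring channels with zero padding.
    pad = len(weights) // 2
    padded = [0.0] * pad + pooled + [0.0] * pad
    conv = [sum(w * padded[c + t] for t, w in enumerate(weights))
            for c in range(channels)]
    # Sigmoid maps each response to a channel weight in (0, 1).
    gate = [1.0 / (1.0 + math.exp(-v)) for v in conv]
    scaled = [[[fmap[i][j][c] * gate[c] for c in range(channels)]
               for j in range(width)] for i in range(height)]
    return scaled, gate


k_256 = eca_kernel_size(256)  # log2(256) = 8, so (8 + 1) / 2 = 4.5
fmap = [[[1.0] * 4 for _ in range(2)] for _ in range(2)]  # 2 x 2 x 4 map of ones
out, gate = eca_attention(fmap, weights=[0.0, 1.0, 0.0])
```

Note that the channel count of the output equals that of the input: the gate rescales channels but never reduces their number, which matches the description of ECA as avoiding dimensionality reduction.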

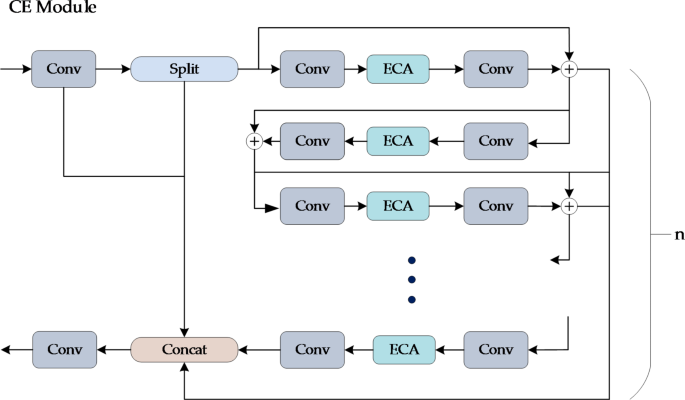

To enhance the inter-channel correlation, the ECA module has been integrated into the C2f module. The C2f module, comprising multiple convolutional layers and complex feature transformations, facilitates this enhancement by leveraging the ECA module. This integration fosters richer and more discriminative feature representations, thereby improving the performance and generalization capabilities of complex networks, ultimately resulting in higher accuracy.

The Bottleneck, a critical component of C2f, primarily operates on local feature maps. Enhanced by the incorporation of ECA, the Bottleneck selectively augments task-specific channels, thereby improving the capture of essential object features. The configuration of Bottleneck, denoted by ‘n’, facilitates the adjustment of ECA implementations, depending on the task requirements and available computational resources. The Bottleneck structure comprises two convolutional modules tasked with feature extraction and transformation. Positioned between these convolutional layers, the ECA module optimizes channel attention weighting on the outputs from the initial convolution. This arrangement allows the weighted features to undergo further transformation in the subsequent convolutional layer, thus enabling the attention mechanism of the ECA module to influence the entire feature map comprehensively. Figure 8 illustrates the configuration of the Bottleneck with the ECA module.

LMPDIoU loss function

Bounding box regression is extensively used in object detection and instance segmentation, serving as a critical step for target localization38,39,40. The original IoU is calculated as the ratio of the intersection area between the predicted bounding box and the ground truth bounding box to their combined union area, as delineated in Eq. (7):

$$IoU=\frac{B_{gt} \cap B_{prd}}{B_{gt} \cup B_{prd}}$$

(7)

The distance \(d_c\) between the center coordinates of the predicted box and the ground truth box can be expressed using Eq. (8).

$$d_c^2=(x_c^{prd}-x_c^{gt})^2+(y_c^{prd}-y_c^{gt})^2$$

(8)

Where \((x_c^{prd},y_c^{prd})\) and \((x_c^{gt},y_c^{gt})\) represent the center coordinates of the predicted box and the ground truth box, respectively.

Let \(w_c\) and \(h_c\) be the width and height of the minimum enclosing box; its diagonal length c can then be expressed by Eq. (9).

$$c^2=w_c^2+h_c^2$$

(9)

V is the aspect ratio consistency measure between the predicted box and the ground truth box, defined by Eq. (10).

$$V=\frac{4}{\pi^2}\left(\arctan\frac{w^{gt}}{h^{gt}}-\arctan\frac{w^{prd}}{h^{prd}}\right)^2$$

(10)

\(w^{gt}\) and \(h^{gt}\) are the width and height of the ground truth box, while \(w^{prd}\) and \(h^{prd}\) are the width and height of the predicted box. By calculating the difference between the arctangents of the aspect ratios, V reflects the aspect ratio discrepancy between the predicted box and the ground truth box.

The default bounding box regression loss in YOLOv8-P2 is based on CIoU, which is also used in most YOLO models. It can be described by Eq. (11).

$$CIoU=IoU-\frac{d_c^2}{c^2}-\alpha \cdot V$$

(11)

Where α is a weighting factor applied to the aspect ratio term V to balance it against the IoU and center point distance terms, typically ranging between 0 and 1. However, CIoU is difficult to optimize effectively when the predicted bounding box has the same aspect ratio as the ground truth bounding box but differs in size. To address this issue, a bounding box similarity metric, Minimum Point Distance-based Intersection over Union (MPDIoU), has been introduced. This metric exploits the geometric characteristics of horizontal rectangles and incorporates all the factors considered in existing loss functions, such as overlapping or non-overlapping areas, center distance, and width and height deviations, while simplifying the computation. The loss function \(L_{MPDIoU}\) is derived from MPDIoU.

Let \(B_{prd}=(x_1^{prd},y_1^{prd},x_2^{prd},y_2^{prd})\) and \(B_{gt}=(x_1^{gt},y_1^{gt},x_2^{gt},y_2^{gt})\), where \((x_1^{prd},y_1^{prd})\) and \((x_1^{gt},y_1^{gt})\) are the top-left corners and \((x_2^{prd},y_2^{prd})\) and \((x_2^{gt},y_2^{gt})\) are the bottom-right corners of the predicted and ground truth boxes, and let w and h denote the width and height of the image. The squared distances between corresponding corners are given by Eq. (12):

$$\begin{gathered} d_1^2=(x_1^{prd}-x_1^{gt})^2+(y_1^{prd}-y_1^{gt})^2 \\ d_2^2=(x_2^{prd}-x_2^{gt})^2+(y_2^{prd}-y_2^{gt})^2 \end{gathered}$$

(12)

MPDIoU is then given by Eq. (13):

$$MPDIoU=IoU-\frac{d_1^2}{h^2+w^2}-\frac{d_2^2}{h^2+w^2}$$

(13)

The loss \(L_{MPDIoU}\) is given by Eq. (14):

$$L_{MPDIoU}=1-MPDIoU$$

(14)

MPDIoU is suitable for multiple object detection contexts because it evaluates not only the overlap between two bounding boxes but also their spatial relationships. This enhances the accuracy in depicting the relative positions of objects within an image, especially when objects are occluded or partially visible.
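To make the definitions in this section concrete, the following sketch evaluates IoU, CIoU, MPDIoU, and the resulting loss for a pair of boxes. It is an illustration under stated assumptions rather than the training code: boxes are `(x1, y1, x2, y2)` tuples with positive width and height, the function names are hypothetical, and α is computed as V / ((1 − IoU) + V), the usual CIoU choice, which this section does not specify.

```python
import math


def iou(prd, gt):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(prd[0], gt[0]), max(prd[1], gt[1])
    ix2, iy2 = min(prd[2], gt[2]), min(prd[3], gt[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((prd[2] - prd[0]) * (prd[3] - prd[1])
             + (gt[2] - gt[0]) * (gt[3] - gt[1]) - inter)
    return inter / union if union > 0 else 0.0


def ciou(prd, gt):
    """CIoU = IoU - d_c^2 / c^2 - alpha * V."""
    i = iou(prd, gt)
    # Squared distance between the box centers.
    d_c2 = (((prd[0] + prd[2]) - (gt[0] + gt[2])) / 2) ** 2 \
        + (((prd[1] + prd[3]) - (gt[1] + gt[3])) / 2) ** 2
    # Squared diagonal of the minimum enclosing box.
    w_c = max(prd[2], gt[2]) - min(prd[0], gt[0])
    h_c = max(prd[3], gt[3]) - min(prd[1], gt[1])
    c2 = w_c ** 2 + h_c ** 2
    # Aspect ratio consistency term V.
    v = (4 / math.pi ** 2) * (
        math.atan((gt[2] - gt[0]) / (gt[3] - gt[1]))
        - math.atan((prd[2] - prd[0]) / (prd[3] - prd[1]))) ** 2
    alpha = v / ((1 - i) + v) if v > 0 else 0.0  # assumed CIoU weighting
    return i - d_c2 / c2 - alpha * v


def mpdiou(prd, gt, img_w, img_h):
    """MPDIoU = IoU - d_1^2 / (h^2 + w^2) - d_2^2 / (h^2 + w^2)."""
    d1_2 = (prd[0] - gt[0]) ** 2 + (prd[1] - gt[1]) ** 2  # top-left corners
    d2_2 = (prd[2] - gt[2]) ** 2 + (prd[3] - gt[3]) ** 2  # bottom-right corners
    norm = img_w ** 2 + img_h ** 2
    return iou(prd, gt) - d1_2 / norm - d2_2 / norm


def mpdiou_loss(prd, gt, img_w, img_h):
    """L_MPDIoU = 1 - MPDIoU."""
    return 1.0 - mpdiou(prd, gt, img_w, img_h)


# Hypothetical 5 x 5 pixel spot, predicted one pixel to the right of the truth.
gt_box = (100.0, 100.0, 105.0, 105.0)
prd_box = (101.0, 100.0, 106.0, 105.0)
loss = mpdiou_loss(prd_box, gt_box, img_w=1920, img_h=1080)
```

For this example the IoU is 2/3 and both corner distances are one pixel, so the loss is 1 − (2/3 − 2/4852800); a prediction that coincides with the ground truth gives a loss of zero.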
