Breast lesion classification via colorized mammograms and transfer learning in a novel CAD framework

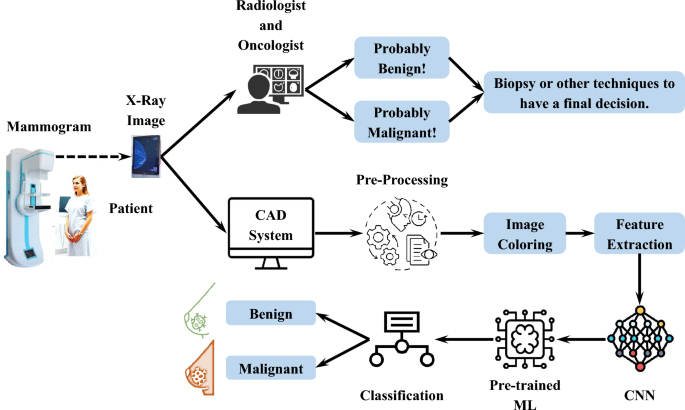

Differentiating between malignant and benign tissues is crucial to ensure that breast cancer (BC) patients receive timely and appropriate treatment. A misclassification can result in unnecessary procedures or delayed intervention, which may adversely affect the patient’s health. CAD systems must therefore be precise and reliable in order to enhance diagnostic accuracy in clinical practice. Accordingly, this section discusses the specific mechanisms used by the proposed CAD system to differentiate malignant from benign tissues, as well as the underlying formulations and evaluation criteria.

The tumor-centric colorization framework enhances computational feature separability while improving clinical interpretability: by visually emphasizing suspicious regions, it enables radiologists to detect lesions more quickly and with greater diagnostic confidence, especially in cases where conventional grayscale imaging may obscure subtle abnormalities. This visual augmentation streamlines the diagnostic process by reducing interpretation time and facilitating rapid decision-making without imposing additional cognitive burden, because the integrated color scheme eliminates the separate post-processing steps often associated with traditional CAD systems. As a result, this approach represents a clinically validated solution that supports efficient breast cancer screening in high-throughput clinical settings, contributing to faster patient evaluations, minimized diagnostic delays, improved workflow efficiency, and ultimately, earlier detection and better patient outcomes.

Fundamentals of grayscale to color transformation in tissue differentiation

Converting mammograms from grayscale to color significantly enhances the capacity for tissue differentiation. Accurate diagnosis from conventional grayscale mammograms is difficult because of their inherently low contrast, background noise, and inconsistent contrast from region to region. The proposed method overcomes these constraints by processing each mammogram across three separate channels and generating a color-enhanced representation that brings out minute tissue characteristics that might otherwise be undetectable. Targeted channel-specific enhancements make it possible to distinguish benign from malignant tissues by their different morphological and textural characteristics. Combining the enhanced channels into a single color representation yields an image with a high degree of variation between healthy, benign, and malignant tissues, which allows features to be extracted and classified more accurately. Mathematically, the combined enhancement is expressed by Eq. 11.

$$\begin{aligned} X_\textrm{Color}(x,y)=[E_\textrm{R}(X_\textrm{Orig}(x,y)),E_\textrm{G}(X_\textrm{Orig}(x,y)),E_\textrm{B}(X_\textrm{Orig}(x,y))] \end{aligned}$$

(11)

where \(X_\textrm{Color}(x,y)\), \(X_\textrm{Orig}(x,y)\), \(E_\textrm{R}\), \(E_\textrm{G}\), and \(E_\textrm{B}\) represent the final colored image, the original grayscale mammogram, and the enhancement operations for the red, green, and blue channels, respectively.
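
A minimal sketch of Eq. 11 may help make the channel-wise construction concrete. The specific operators below are assumptions chosen only for illustration (a mean filter for \(E_\textrm{R}\), unsharp masking for \(E_\textrm{G}\), and min-max normalization for \(E_\textrm{B}\)); the function names are hypothetical and are not taken from the proposed system.

```python
import numpy as np

def box_blur(img, k=3):
    """Mean filter with edge padding (hypothetical stand-in for noise suppression)."""
    pad = k // 2
    padded = np.pad(img, pad, mode="edge")
    out = np.zeros_like(img, dtype=np.float64)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)

def enhance_r(img):
    # Assumed E_R: smooth the image to suppress noise.
    return box_blur(img)

def enhance_g(img, amount=1.0):
    # Assumed E_G: unsharp masking to sharpen edges, clipped to the 8-bit range.
    return np.clip(img + amount * (img - box_blur(img)), 0.0, 255.0)

def enhance_b(img):
    # Assumed E_B: min-max normalization to the full 0-255 range.
    lo, hi = img.min(), img.max()
    if hi == lo:
        return np.zeros_like(img, dtype=np.float64)
    return (img - lo) / (hi - lo) * 255.0

def colorize(gray):
    """Eq. 11: stack the three enhanced channels into an H x W x 3 image."""
    g = gray.astype(np.float64)
    channels = [enhance_r(g), enhance_g(g), enhance_b(g)]
    return np.stack(channels, axis=-1).round().astype(np.uint8)

rng = np.random.default_rng(0)
x_orig = rng.integers(0, 256, size=(8, 8)).astype(np.uint8)  # toy grayscale input
x_color = colorize(x_orig)
print(x_color.shape, x_color.dtype)  # (8, 8, 3) uint8
```

Each channel is computed independently from the same grayscale input, so any of the three operators can be swapped without affecting the others.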

Mammogram feature extraction from color-enhanced images

After color enhancement, a pre-trained CNN such as ResNet, VGG, or EfficientNet extracts features from the images without updating the model weights. A separate ML model, such as an SVM, then classifies the extracted features. The layers of a CNN process an image step by step, each focusing on a different aspect of it. Three properties are relevant here: (i) different tissue characteristics trigger layer-specific activations; (ii) the learned representations capture patterns beyond the scope of traditional feature engineering; and (iii) the activation maps are layer-specific, with early layers responding to edges and deeper layers to semantic structures.

Identification of benign and malignant tissues based on their characteristics

The proposed color-enhancing method emphasizes several crucial characteristics that distinguish benign from malignant breast tissue. First, a malignant mass is usually characterized by irregular (spiculated) or ill-defined borders, whereas a benign mass is typically characterized by smooth and well-circumscribed boundaries. This variation in boundary is accentuated by the proposed channel-specific improvements. Second, benign lesions usually exhibit homogeneous patterns, while malignant lesions may exhibit a variety of internal compositions and irregular density distributions. As a result of the color change, these compositional variations are brought to light. Third, the improved images tend to highlight architectural distortion of the surrounding tissues, which is often caused by malignant tissue. Finally, malignant masses typically have irregular shapes as opposed to benign masses that are more regular, oval, or circular.

Tumor-centric diagnostic colorization for enhanced lesion detection in dense breast tissue: A multi-channel framework for radiographic and CNN-based interpretation

Dense breast tissue presents a significant diagnostic challenge because malignant lesions and fibroglandular structures both appear radiopaque, which may result in a masking effect, reduced sensitivity, and a higher rate of false negatives. In this study, a tumor-centric colorization framework was introduced to improve lesion detection across different breast densities, representing a paradigm shift from conventional grayscale analysis. The proposed method enhances tumor signal intensity using histogram-based intensity enhancement within the 108-130 range and applies color channel mapping to encode noise suppression, lesion sharpening, and adaptive normalization across the RGB channels. Grayscale mammograms are thereby transformed into visually and computationally enriched representations optimized for CNN-based feature extraction and clinical interpretation.

In addition to improving computational separability, diagnostic colorization offers three synergistic mechanisms that address the challenges of dense tissue. First, it enhances lesion-background contrast, counteracting the masking effect of dense breast imaging, so that subtle architectural distortions and irregular masses concealed in conventional grayscale images can be detected. Second, it enriches the feature space of AI systems through complementary RGB channels, each optimized for a specific diagnostic task, which enables convolutional neural networks to extract morphological patterns that single-channel grayscale analysis cannot detect. Third, it facilitates rapid clinical decision-making by providing intuitive color-coded indicators that guide the radiologist’s attention to suspicious regions, that is, diagnostically relevant “hotspots.”

Although the Mini-DDSM dataset lacks formal density annotations, it contains a wide range of breast densities. Qualitative assessment indicates that the system classified lesions in dense cases accurately, consistent with the reported performance metrics. This preliminary evidence suggests that the method has the potential to generalize across different breast tissue compositions. Future work will be devoted to quantitative density-based validation and to comparison with enhancement techniques specific to dense tissue.
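
The intensity enhancement within the 108-130 range mentioned in this subsection can be illustrated with a simple windowed contrast stretch. This is only one plausible reading of that step, assumed here for illustration: the function name `window_stretch` is hypothetical, and the linear mapping with clipping is not confirmed as the operation used in the proposed framework.

```python
import numpy as np

def window_stretch(gray, low=108, high=130):
    """Linearly stretch intensities in [low, high] to [0, 255].

    Values below `low` map to 0 and values above `high` map to 255, so the
    contrast budget is spent entirely on the assumed tumor intensity band.
    """
    g = gray.astype(np.float64)
    stretched = (g - low) / (high - low) * 255.0
    return np.clip(stretched, 0.0, 255.0).round().astype(np.uint8)

# Toy 2 x 3 image with values below, inside, and above the window.
sample = np.array([[100, 108, 119], [130, 140, 125]], dtype=np.uint8)
enhanced = window_stretch(sample)
print(enhanced)
```

Under this assumption, a 22-level band of the original histogram is spread over the full 8-bit range, which increases contrast inside the band at the cost of saturating everything outside it.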

Feature extraction using pre-trained models: A mathematical representation

Mathematically, feature extraction transforms raw images into high-dimensional feature vectors by means of convolution, activation, and pooling operations. Let \(\phi _\textrm{CNN}\) be a pre-trained CNN with weights W initialized on ImageNet. The model is applied to mammograms as a fixed feature extractor, so that its learned filters respond to the patterns of medical imaging without further weight updates. Convolutional layers, activation functions, and pooling layers are the key components for feature extraction. Convolutional layers extract hierarchical spatial patterns such as edges, textures, and shapes. Activation functions, e.g., the Rectified Linear Unit (ReLU), introduce non-linearity, while pooling layers reduce spatial dimensionality. Let \({\textbf {X}}\in \mathbb {R}^\mathrm{H\times W\times 3}\) be a color-converted mammogram, where H and W represent height and width, respectively. The CNN processes \({\textbf {X}}\) through successive layers, and the feature map at layer l is given by Eq. 12, where \({\textbf {X}}^\mathrm{l-1}\) denotes the input to layer l.

$$\begin{aligned} {\textbf {F}}^\textrm{l}=\sigma ({\textbf {W}}^\textrm{l} \circledast {\textbf {X}}^\mathrm{l-1}+{\textbf {b}}^\textrm{l}) \end{aligned}$$

(12)

The feature map at layer l, the learnable convolutional filter, and the bias term are represented by \({\textbf {F}}^\textrm{l}\), \({\textbf {W}}^\textrm{l}\), and \({\textbf {b}}^\textrm{l}\), respectively. \(\sigma (\cdot )\) is a nonlinear activation function, e.g., ReLU, and \(\circledast\) represents the convolution operation. It should be noted that \({\textbf {W}}^\textrm{l}\) and \({\textbf {b}}^\textrm{l}\) are not updated since a pre-trained model is employed.

Equation 13 presents the pooled feature map at layer l, where \(\text {pool}{(\cdot )}\) is the pooling function (e.g., maximum pooling or average pooling) and s is the stride size.

$$\begin{aligned} {\textbf {F}}_\textrm{Pooled}^\textrm{l}=\text {pool}({\textbf {F}}^\textrm{l},s) \end{aligned}$$

(13)
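
Equations 12 and 13 can be sketched for a single layer as follows. This is an illustrative NumPy implementation under stated assumptions, not the pre-trained network itself: the filter values are random rather than ImageNet weights, the convolution is computed as a "valid" cross-correlation (the convention commonly used in deep learning libraries), and the pooling window is assumed to equal the stride s.

```python
import numpy as np

def conv_layer(x, w, b):
    """Eq. 12 with ReLU as sigma.

    x: input of shape H x W x C_in
    w: filters of shape k x k x C_in x C_out (fixed, never updated here)
    b: bias of shape C_out
    """
    k = w.shape[0]
    h_out, w_out = x.shape[0] - k + 1, x.shape[1] - k + 1
    out = np.zeros((h_out, w_out, w.shape[3]))
    for i in range(h_out):
        for j in range(w_out):
            patch = x[i:i + k, j:j + k, :]
            # Sum over the k x k x C_in patch for every output channel.
            out[i, j, :] = np.tensordot(patch, w, axes=([0, 1, 2], [0, 1, 2])) + b
    return np.maximum(out, 0.0)

def max_pool(f, s=2):
    """Eq. 13 with max pooling over non-overlapping s x s windows (stride s)."""
    h, w, c = f.shape
    h, w = h - h % s, w - w % s  # drop rows/columns that do not fill a window
    blocks = f[:h, :w, :].reshape(h // s, s, w // s, s, c)
    return blocks.max(axis=(1, 3))

rng = np.random.default_rng(0)
x = rng.random((10, 10, 3))               # toy stand-in for a color mammogram
w1 = rng.standard_normal((3, 3, 3, 4))    # hypothetical filters
b1 = np.zeros(4)
f1 = conv_layer(x, w1, b1)
f1_pooled = max_pool(f1, s=2)
print(f1.shape, f1_pooled.shape)  # (8, 8, 4) (4, 4, 4)
```

Because the filters and biases are only read and never modified, the sketch mirrors the frozen-weight setting described for Eq. 12.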

After several convolutional and pooling layers, the final feature map is flattened into a feature vector, as given by Eq. 14.

$$\begin{aligned} {\textbf {f}}=\phi ({\textbf {W}}_\textrm{fc}\cdot \text {flatten}({\textbf {F}}^\textrm{L})+{\textbf {b}}_\textrm{fc}) \end{aligned}$$

(14)

where \({\textbf {f}}\in \mathbb {R}^\textrm{d}\), L, \({\textbf {W}}_\textrm{fc}\), \({\textbf {b}}_\textrm{fc}\), and \(\phi (\cdot )\) represent the extracted d-dimensional feature vector, the final convolutional layer, the weights and bias of the fully connected layer (if present), and the activation function, respectively.
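
The flattening step of Eq. 14 can be sketched in a few lines. The feature dimension `d`, the random fully connected weights, and the choice of ReLU for \(\phi (\cdot )\) are assumptions made only for this illustration.

```python
import numpy as np

def extract_feature_vector(f_last, w_fc, b_fc):
    """Eq. 14: flatten the final feature map, apply a fully connected layer, then ReLU."""
    flat = f_last.reshape(-1)
    return np.maximum(w_fc @ flat + b_fc, 0.0)

rng = np.random.default_rng(0)
f_last = rng.random((4, 4, 4))   # toy stand-in for the final feature map
d = 16                           # hypothetical feature dimension
w_fc = rng.standard_normal((d, f_last.size)) * 0.1
b_fc = np.zeros(d)
f_vec = extract_feature_vector(f_last, w_fc, b_fc)
print(f_vec.shape)  # (16,)
```

The output is a one-dimensional vector whose length is set by the fully connected layer, which is the form a downstream classifier expects.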

Classification

A distinct classifier, like an SVM, is employed to classify the extracted feature vector f since the CNN classification head has been removed, as shown in Eq. 15.

$$\begin{aligned} y=\text {SVM}({\textbf {f}}) \end{aligned}$$

(15)

where y is the predicted classification of the tumor as benign or malignant.
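
To illustrate Eq. 15, the sketch below trains a linear SVM on synthetic feature vectors by batch subgradient descent on the regularized hinge loss. It is a simplified stand-in, not the classifier configuration used in the study: the kernel, hyperparameters, and training procedure are not specified here, and the data, the function names, and the values of `lam`, `lr`, and `epochs` are hypothetical.

```python
import numpy as np

def train_linear_svm(feats, labels, lam=0.01, lr=0.1, epochs=200):
    """Minimize lam/2 * ||w||^2 + mean hinge loss by batch subgradient descent.

    labels must be -1 (benign) or +1 (malignant).
    """
    n, d = feats.shape
    w, b = np.zeros(d), 0.0
    for _ in range(epochs):
        margins = labels * (feats @ w + b)
        active = margins < 1.0  # samples inside or beyond the margin
        grad_w = lam * w - (labels[active][:, None] * feats[active]).sum(axis=0) / n
        grad_b = -labels[active].sum() / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

def predict(feats, w, b):
    """Eq. 15: map each feature vector f to a class label y."""
    return np.where(feats @ w + b >= 0.0, 1, -1)

# Synthetic, well-separated feature vectors (not real mammogram features).
rng = np.random.default_rng(0)
benign = rng.normal(-2.0, 0.5, size=(20, 5))
malignant = rng.normal(2.0, 0.5, size=(20, 5))
feats = np.vstack([benign, malignant])
labels = np.array([-1] * 20 + [1] * 20)

w, b = train_linear_svm(feats, labels)
accuracy = (predict(feats, w, b) == labels).mean()
print(accuracy)
```

In practice an established SVM implementation with a tuned kernel would normally be preferred; the explicit loop is shown only to make the decision rule behind y visible.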

Color enhancement and interpretability

By mapping diagnostically relevant features to distinct color channels, the proposed colorization technology enhances radiologists’ visibility of subtle anomalies and supports the AI detection model. For radiologists, an improved perception of malignant versus benign tissue reduces interpretative ambiguity, enabling more confident and timely clinical decisions. This is particularly critical in dense or complex cases where grayscale images may obscure significant findings.

Colorization increases visibility, making suspicious features such as microcalcifications or irregular masses easier to distinguish from the surrounding tissue. This leads to the more effective identification of subtle lesions that might otherwise be overlooked on grayscale images. Recent studies have shown that color-enhanced mammograms result in higher agreement among observers and lower diagnostic ambiguity, leading to more consistent and timely diagnostic interpretations. Ultimately, this contributes to earlier breast cancer detection, enhanced clinical certainty, and better patient outcomes.

On a technical level, colorization enhances input data for deep learning algorithms by expanding the feature space and highlighting tumor-related patterns that are difficult to differentiate from grayscale images. Color-enhanced input data enables the AI system to learn more discriminatory features, resulting in increased classification accuracy with fewer false negatives and false positives. Additionally, the colorization framework improves interpretability for clinicians while improving overall performance and reliability.
