Big data dimensionality reduction-based supervised machine learning algorithms for NASH diagnosis | BMC Bioinformatics

This section first introduces the parameters of the algorithms and then analyses the results in detail.

Parameters of the machine learning algorithms

Table 2 presents the parameters of the algorithms.

The next sub-section presents the features selected with the Pearson correlation and PSO-ANN optimization approaches.

Selected features for the NASH diagnosis

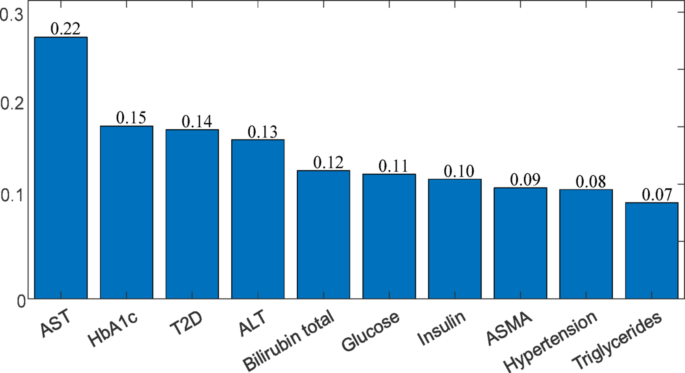

The dataset has 30 features, and some of them carry more information about the presence of NASH than others. Figure 7 shows the most informative features selected with the Pearson correlation.

The 10 most informative features selected with the Pearson correlation

As can be seen from Fig. 7, the Pearson correlation selects the AST blood test as the feature carrying the most relevant information about NASH. The HbA1c, T2D and ALT features also have similar information content about the presence of NASH. An independent association of NASH with high AST and low serum creatinine levels has recently been confirmed. In addition, a univariate analysis of 192 NASH patients revealed that NASH is closely related to high HbA1c, ALT and GGT; however, the same study reported that a large number of T2D patients were diagnosed with NASH despite low ALT values [22]. Moreover, mean total bilirubin was significantly lower in NASH patients, and high glucose together with insulin resistance was strongly correlated with the presence of NASH [23]. ASMA and hypertension have also been reported to coexist frequently in NASH patients [24]. In addition to the Pearson correlation, this paper modifies and implements the PSO-ANN optimization approach for feature selection; its results are shown in Table 3.
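The Pearson-correlation ranking behind Fig. 7 can be sketched as follows. This is a minimal NumPy sketch, assuming the 30 features are numeric columns of a matrix `X` and the label `y` is coded 0/1 (1 = NASH). It ranks features by the absolute value of r, on the assumption that a strong negative association (such as the low creatinine levels mentioned above) is as informative as a positive one. The function name `pearson_top_k` and the synthetic data are hypothetical.

```python
import numpy as np

def pearson_top_k(X, y, k=10):
    """Rank the columns of X by |Pearson r| with the label y; return top-k indices and all r."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    Xc = X - X.mean(axis=0)              # centre every feature column
    yc = y - y.mean()                    # centre the label
    cov = Xc.T @ yc                      # unnormalised covariance of each feature with y
    denom = np.sqrt((Xc ** 2).sum(axis=0) * (yc ** 2).sum())
    # Constant columns have zero variance: report r = 0 for them instead of NaN.
    r = np.divide(cov, denom, out=np.zeros_like(cov), where=denom > 0)
    order = np.argsort(-np.abs(r), kind="stable")   # strongest association first
    return order[:k], r

# Hypothetical demo on synthetic data with 30 features, two of which carry signal.
rng = np.random.default_rng(0)
y = rng.integers(0, 2, size=200)
X = rng.normal(size=(200, 30))
X[:, 3] += 2.0 * y      # strongly, positively associated feature
X[:, 7] -= 1.0 * y      # weaker, negatively associated feature
top10, r = pearson_top_k(X, y, k=10)
print(top10[:2], np.round(r[[3, 7]], 2))
```

Because the score is computed one feature at a time, each selected feature gets its own r value, which is what allows a ranked bar chart such as Fig. 7.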

As can be seen from Table 3, five features are selected by both the Pearson correlation and the PSO-ANN approaches. While the Pearson correlation quantifies the relationship between each individual feature and NASH, the PSO-ANN evaluates the relationship between all ten features, taken jointly, and NASH. Consequently, the PSO-ANN does not provide a numeric value that quantifies the relationship between each feature and NASH. The next sub-section analyses the machine learning results with these selected features.
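The modified PSO-ANN is not specified in this section, so the following is only a generic sketch of wrapper-style PSO feature selection under stated assumptions: each particle holds a score in [0, 1] per feature, the k highest-scoring features form its subset, and the subset is scored by hold-out accuracy. To keep the example short, a nearest-centroid classifier stands in for the ANN (a deliberate substitution, not the paper's model); using an ANN would only change `centroid_accuracy`. All names and the PSO constants `w`, `c1`, `c2` are illustrative choices. The sketch also shows why such an approach yields no per-feature score: it returns a subset and a single fitness value.

```python
import numpy as np

def centroid_accuracy(X_tr, y_tr, X_va, y_va):
    """Stand-in for the ANN: hold-out accuracy of a nearest-centroid classifier."""
    c0 = X_tr[y_tr == 0].mean(axis=0)
    c1 = X_tr[y_tr == 1].mean(axis=0)
    pred = (((X_va - c1) ** 2).sum(axis=1) < ((X_va - c0) ** 2).sum(axis=1)).astype(int)
    return float(np.mean(pred == y_va))

def pso_select(X, y, k=10, n_particles=20, n_iter=50, w=0.7, c1=1.5, c2=1.5, seed=0):
    """Continuous PSO over feature scores; a particle's k largest scores define its subset."""
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    y = np.asarray(y).astype(int)
    sd = X.std(axis=0)
    X = (X - X.mean(axis=0)) / np.where(sd > 0, sd, 1.0)   # z-score so no feature dominates
    perm = rng.permutation(len(y))
    tr, va = perm[: len(y) // 2], perm[len(y) // 2 :]       # fixed split for scoring subsets
    d = X.shape[1]

    def subset(pos):
        return np.argsort(-pos, kind="stable")[:k]

    def fitness(pos):
        cols = subset(pos)
        return centroid_accuracy(X[tr][:, cols], y[tr], X[va][:, cols], y[va])

    pos = rng.random((n_particles, d))
    vel = np.zeros((n_particles, d))
    pbest, pbest_fit = pos.copy(), np.array([fitness(p) for p in pos])
    gbest = pbest[np.argmax(pbest_fit)].copy()

    for _ in range(n_iter):
        r1, r2 = rng.random((n_particles, d)), rng.random((n_particles, d))
        # Velocity update: inertia + pull to personal best + pull to global best.
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = np.clip(pos + vel, 0.0, 1.0)
        fit = np.array([fitness(p) for p in pos])
        better = fit > pbest_fit
        pbest[better], pbest_fit[better] = pos[better], fit[better]
        gbest = pbest[np.argmax(pbest_fit)].copy()
    return np.sort(subset(gbest)), float(pbest_fit.max())

# Hypothetical demo: only features 0, 1 and 2 carry signal.
rng = np.random.default_rng(1)
y_demo = rng.integers(0, 2, size=200)
X_demo = rng.normal(size=(200, 30))
X_demo[:, :3] += 3.0 * y_demo[:, None]
cols, acc = pso_select(X_demo, y_demo, k=5)
print(cols, acc)
```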

NASH diagnosis with machine learning algorithms

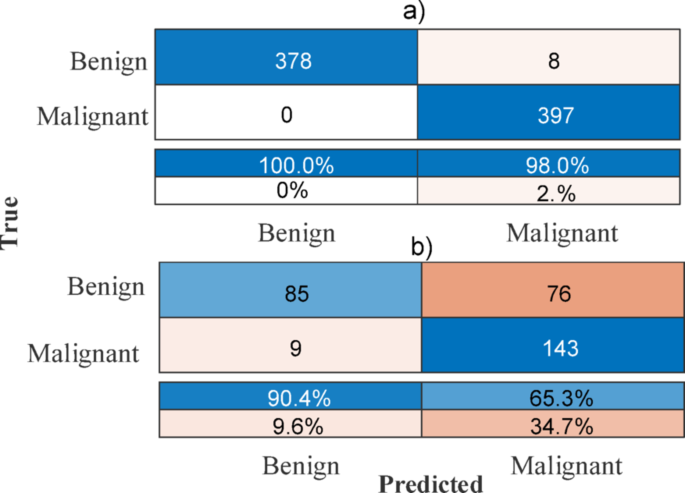

This paper evaluates the developed NASH diagnosis models on both the training and the test data. The training data is used to develop the machine learning models, whereas the test data is used to validate them. Figure 8 shows the NASH diagnosis results of the BLS machine learning algorithm with the ten selected features in Sect. “ABC machine learning algorithm”.

Results with the BLS machine learning algorithm, a training, b testing

As can be seen from Fig. 8a, the trained BLS machine learning model diagnoses benign and malignant cases with 100% and 98% accuracy, respectively. On the test data (Fig. 8b), however, the benign diagnosis accuracy is 90.4%, while the malignant accuracy is only 65.3%, which is considerably lower. This is possibly due to poor gradient information, which leads the BLS algorithm to converge to a local optimum. By contrast, the search-based ABC machine learning algorithm yields a high malignant prediction accuracy on the unseen test data, since it adds an external excitation signal that explores the search space for the global optimum. Figure 9 illustrates the training and validation accuracies of the ABC machine learning algorithm.
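The contrast drawn here, between a gradient-based learner that can stall in a local optimum and a search-based one that keeps exploring, can be illustrated with a minimal artificial bee colony optimizer. This sketch assumes that ABC denotes the artificial bee colony metaheuristic (Karaboga's scheme of employed, onlooker and scout bees), used here to fit model weights without any gradient; it is not the paper's implementation, and the function names, bounds and logistic-regression demo are hypothetical. The scout phase, which re-seeds a food source that has stopped improving, plays the role of the exploration described above.

```python
import numpy as np

def abc_minimize(f, dim, lower, upper, n_sources=20, limit=50, n_iter=200, seed=0):
    """Minimal artificial bee colony: minimise f over the box [lower, upper]^dim."""
    rng = np.random.default_rng(seed)
    X = rng.uniform(lower, upper, size=(n_sources, dim))   # food sources = candidate solutions
    cost = np.array([f(x) for x in X])
    trials = np.zeros(n_sources, dtype=int)                 # steps without improvement

    def neighbour_step(i):
        # Perturb one coordinate of source i relative to a randomly chosen other source k.
        k = rng.choice([m for m in range(n_sources) if m != i])
        j = rng.integers(dim)
        v = X[i].copy()
        v[j] = np.clip(v[j] + rng.uniform(-1, 1) * (X[i, j] - X[k, j]), lower, upper)
        cv = f(v)
        if cv < cost[i]:                 # greedy selection
            X[i], cost[i], trials[i] = v, cv, 0
        else:
            trials[i] += 1

    best_x, best_c = X[np.argmin(cost)].copy(), cost.min()
    for _ in range(n_iter):
        for i in range(n_sources):                          # employed-bee phase
            neighbour_step(i)
        fit = 1.0 / (1.0 + cost)                            # assumes f >= 0 (e.g. a loss)
        for i in rng.choice(n_sources, size=n_sources, p=fit / fit.sum()):   # onlooker phase
            neighbour_step(i)
        if cost.min() < best_c:
            best_x, best_c = X[np.argmin(cost)].copy(), cost.min()
        worst = np.argmax(trials)                           # scout phase: abandon a stale source
        if trials[worst] > limit:
            X[worst] = rng.uniform(lower, upper, size=dim)
            cost[worst], trials[worst] = f(X[worst]), 0
    return best_x, float(best_c)

# Hypothetical demo: fit logistic-regression weights (w1, w2, bias) without gradients.
rng = np.random.default_rng(1)
Xd = rng.normal(size=(200, 2))
yd = (Xd[:, 0] + 0.5 * Xd[:, 1] > 0).astype(float)

def cross_entropy(wb):
    p = 1.0 / (1.0 + np.exp(-(Xd @ wb[:2] + wb[2])))
    p = np.clip(p, 1e-12, 1 - 1e-12)
    return float(-np.mean(yd * np.log(p) + (1 - yd) * np.log(1 - p)))

w_best, loss = abc_minimize(cross_entropy, dim=3, lower=-10.0, upper=10.0)
train_acc = np.mean(((Xd @ w_best[:2] + w_best[2]) > 0) == yd)
print(np.round(w_best, 2), round(loss, 3), train_acc)
```

Only loss values are used, never derivatives, so the search is unaffected by flat or misleading gradients; the price is many more function evaluations than a gradient step would need.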

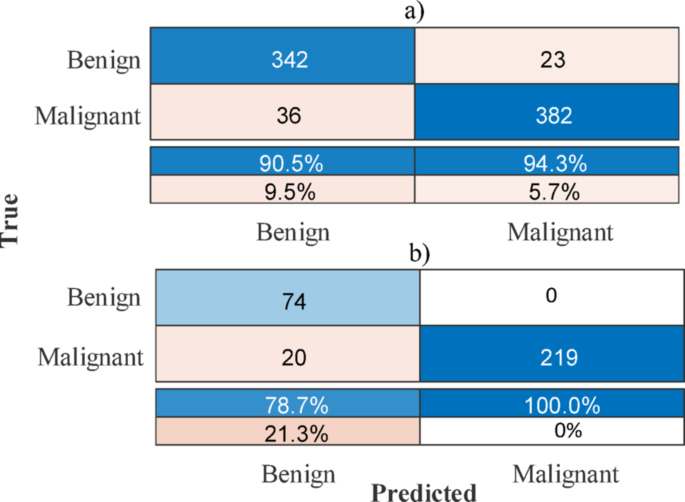

Accuracies with the ABC machine learning algorithm, a training, b testing

The trained ABC machine learning algorithm diagnoses the benign and malignant cases with 90.5% and 94.3% accuracy, respectively, as can be seen from Fig. 9a. Figure 9b shows that the tested ABC machine learning algorithm achieves 78.7% and 100% accuracy for the benign and malignant cases, respectively. It is clear from Figs. 8 and 9 that the trained BLS machine learning algorithm outperforms the trained ABC machine learning algorithm on the training data. However, the ABC machine learning algorithm surpasses the BLS machine learning algorithm on the test data. This suggests that the ABC machine learning algorithm is more robust to unseen uncertainties in the NASH data. The next sub-section analyses the effect of the number of selected features.

Selected features and performance analysis

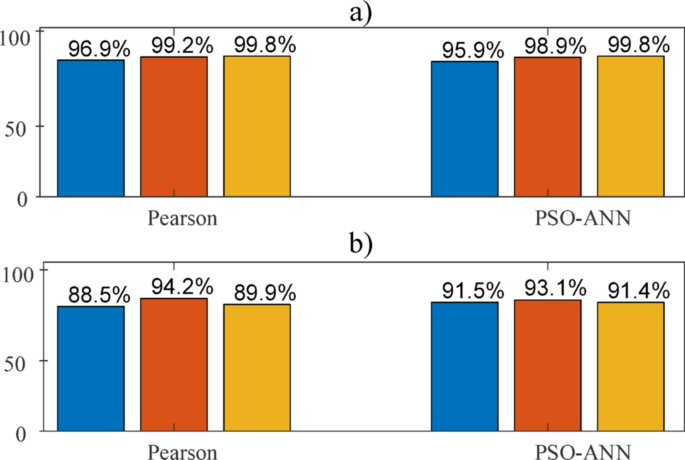

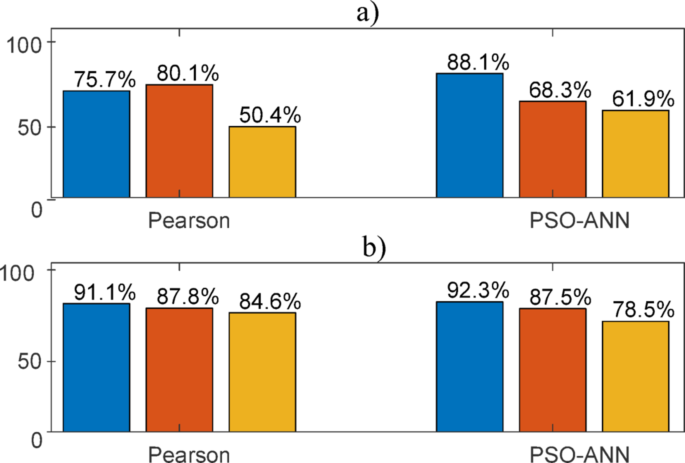

Figure 10 shows the training performance of the BLS and ABC machine learning algorithms for varying numbers of input features selected by the Pearson correlation and the PSO-ANN optimization approach.

Training performances, a BLS, b ABC machine learning algorithms. Blue, orange and yellow colours represent overall accuracies for 10, 20, 30 selected features

It is clear from Fig. 10a that the prediction accuracy of the BLS algorithm increases moderately and steadily with the number of selected input features. The ABC algorithm benefits slightly from 20 selected features, as demonstrated by Fig. 10b, but it struggles when 30 input features are selected. This is possibly due to its search-based nature: the search space grows with the number of inputs, so the search can fail to extract the relevant information when the input dimension is large. In addition to the training performance, Fig. 11 examines the validation performance on the unseen test data.

Validation performances, a BLS, b ABC machine learning algorithms. Blue, orange and yellow colours represent overall accuracies for 10, 20, 30 selected features

Figure 11a illustrates that the validation accuracy of the BLS machine learning algorithm decreases as the number of selected features increases. The only exception is when the Pearson correlation selects 20 features, where its validation accuracy rises to 80.1%. With 30 selected features, however, it performs poorly, and its validation accuracy drops to 50.4%. The ABC algorithm, in turn, achieves a higher validation accuracy than the BLS algorithm for every number of selected input features. This possibly happens because the selected features are informative enough for NASH diagnosis, but the gradient information is insufficient for the optimization problem. The search-based ABC machine learning algorithm manages to extract the NASH-related information in the data through the added exploration noise.
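The protocol behind Figs. 10 and 11, training and validating the same learner on the top 10, 20 and 30 features, can be sketched as below. This is a minimal sketch under assumptions not stated in the section: features are ranked by |Pearson r| computed on the training split only (so test labels never influence the selection), scaling uses training statistics only, and a nearest-centroid classifier stands in for the BLS and ABC models. The function names and synthetic data are hypothetical; the sketch reproduces the procedure, not the reported accuracies.

```python
import numpy as np

def rank_by_pearson(X, y):
    """Feature indices sorted by |Pearson r| with y (NaN from constant columns -> 0)."""
    r = np.array([np.corrcoef(X[:, j], y)[0, 1] for j in range(X.shape[1])])
    return np.argsort(-np.abs(np.nan_to_num(r)), kind="stable")

def centroid_predict(X_fit, y_fit, X):
    """Nearest-centroid stand-in classifier: label of the closer class mean."""
    c0, c1 = X_fit[y_fit == 0].mean(axis=0), X_fit[y_fit == 1].mean(axis=0)
    return (((X - c1) ** 2).sum(axis=1) < ((X - c0) ** 2).sum(axis=1)).astype(int)

def feature_count_sweep(X_tr, y_tr, X_te, y_te, ks=(10, 20, 30)):
    """Return {k: (train accuracy, test accuracy)} for the top-k ranked features."""
    mu, sd = X_tr.mean(axis=0), X_tr.std(axis=0)
    sd = np.where(sd > 0, sd, 1.0)
    Z_tr, Z_te = (X_tr - mu) / sd, (X_te - mu) / sd     # training statistics only
    order = rank_by_pearson(Z_tr, y_tr)                  # ranking never sees test labels
    results = {}
    for k in ks:
        cols = order[:k]
        train_acc = np.mean(centroid_predict(Z_tr[:, cols], y_tr, Z_tr[:, cols]) == y_tr)
        test_acc = np.mean(centroid_predict(Z_tr[:, cols], y_tr, Z_te[:, cols]) == y_te)
        results[k] = (float(train_acc), float(test_acc))
    return results

# Hypothetical demo: 30 features, the first 5 informative, 70/30 split.
rng = np.random.default_rng(2)
y = rng.integers(0, 2, size=300)
X = rng.normal(size=(300, 30))
X[:, :5] += 1.0 * y[:, None]
tr, te = np.arange(210), np.arange(210, 300)
for k, (acc_tr, acc_te) in feature_count_sweep(X[tr], y[tr], X[te], y[te]).items():
    print(f"{k} features: train {acc_tr:.3f}, test {acc_te:.3f}")
```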

Specificity, sensitivity and accuracy analysis

To evaluate the performance of the developed machine learning models, three measures are considered: accuracy, which gives a direct assessment but can be misleading when the class distribution is uneven; specificity, which measures how well the absence of a condition is distinguished from its presence; and sensitivity, which measures how well positive cases are recognized. They are computed from the numbers of True Positives (TP), True Negatives (TN), False Positives (FP) and False Negatives (FN) as specificity = TN/(TN + FP), sensitivity = TP/(TP + FN) and accuracy = (TP + TN)/(TP + TN + FP + FN). Table 4 provides the specificity, sensitivity and accuracy measurements for the developed BLS and ABC machine learning algorithms.

As can be seen from Table 4, the trained BLS algorithm yields larger specificity, sensitivity and accuracy values than the ABC algorithm on the training data. However, the ABC algorithm yields higher specificity and accuracy on the test data.
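The three measures in Table 4 follow directly from the confusion-matrix counts. The sketch below is a plain-Python illustration with hypothetical function names; it treats label 1 as the positive class, which is an assumption, since the section does not state whether benign or malignant cases are coded as positive. The last lines illustrate the caveat about uneven class distributions: a model that never predicts the positive class can still score a high accuracy.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, TN, FP, FN) for a binary problem with the given positive label."""
    tp = tn = fp = fn = 0
    for t, p in zip(y_true, y_pred):
        if p == positive:
            if t == positive:
                tp += 1
            else:
                fp += 1
        else:
            if t == positive:
                fn += 1
            else:
                tn += 1
    return tp, tn, fp, fn

def _ratio(num, den):
    return num / den if den else float("nan")   # undefined when the class is absent

def specificity(tp, tn, fp, fn):
    return _ratio(tn, tn + fp)        # share of actual negatives predicted negative

def sensitivity(tp, tn, fp, fn):
    return _ratio(tp, tp + fn)        # share of actual positives predicted positive

def accuracy(tp, tn, fp, fn):
    return _ratio(tp + tn, tp + tn + fp + fn)

# Hypothetical example: 3 positives and 5 negatives.
counts = confusion_counts([1, 1, 1, 0, 0, 0, 0, 0], [1, 1, 0, 0, 0, 0, 1, 0])
print(counts, specificity(*counts), sensitivity(*counts), accuracy(*counts))

# Imbalanced data (90 negatives, 10 positives) and a model that always predicts 0.
skewed = confusion_counts([0] * 90 + [1] * 10, [0] * 100)
print(accuracy(*skewed), sensitivity(*skewed))   # prints 0.9 0.0
```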
