A longitudinal machine-learning approach to predicting nursing home closures in the U.S.

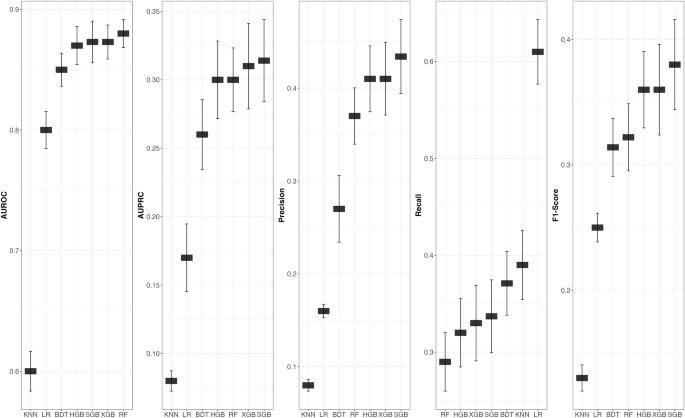

Our study demonstrates that U.S. nursing home closures, while relatively rare events (approximately 5% of facilities over the past decade), can be predicted with reasonable accuracy using machine learning on longitudinal data. We found that advanced sequence-based models outperformed traditional predictive approaches. In particular, the recurrent neural network models (LSTM and bidirectional LSTM, Bi-LSTM) achieved substantially higher discrimination ability than baseline models such as logistic regression (LR) or random forests (RF). For example, our best LSTM models achieved an AUPRC of around 0.50 and a Recall of 0.77, compared to ~0.314 and 0.34, respectively, for the best-performing traditional model. This improvement suggests that incorporating temporal trends in facility performance yields important predictive signals, especially on the metrics most relevant to highly imbalanced problems, i.e., AUPRC, Precision, Recall, and the F-1 score.
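To illustrate why accuracy alone is uninformative at roughly 5% prevalence, the following minimal sketch computes Precision, Recall, and the F-1 score from confusion counts. The facility counts and both classifiers are hypothetical and chosen only for illustration; they are not results from this study.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels where 1 means 'closed'."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return tp, fp, fn, tn


def summarize(y_true, y_pred):
    """Accuracy, precision, recall and F1; undefined ratios are reported as 0.0."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical cohort: 1,000 facilities, 50 of which close (5% prevalence).
y_true = [1] * 50 + [0] * 950

# Classifier A (hypothetical): never predicts a closure.
always_open = [0] * 1000

# Classifier B (hypothetical): flags 40 of the 50 closures and 100 open facilities.
recall_oriented = [1] * 40 + [0] * 10 + [1] * 100 + [0] * 850

print("always open:    ", summarize(y_true, always_open))
print("recall oriented:", summarize(y_true, recall_oriented))
```

In this toy cohort the classifier that never predicts a closure has the higher accuracy yet detects no closures at all, which is why the comparison above rests on AUPRC, Precision, Recall, and the F-1 score.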

This study builds on prior cross-sectional research that identified factors associated with closure by showing these factors can also prospectively identify high-risk facilities [4,10]. We observed a geographic dimension to closure risk: rural facilities had higher odds of closure, which is consistent with reports of “nursing home deserts” in rural counties where a single facility closure can eliminate local care access [4,5]. Our results are consistent with findings that closures tend to be concentrated in socioeconomically disadvantaged areas. Feng et al. [27] found that nursing home closures were more common in counties with higher poverty and larger minority populations, raising concerns about equity. From a health equity standpoint, this pattern means communities that already face limited resources suffer disproportionately from facility loss, compounding disparities in access to care.

Moreover, our analysis identified several predictors of closure risk. Facilities that closed were more likely to have low occupancy and smaller average census, a rural location, and signs of quality or care challenges—for example, a significantly higher rate of catheter use among residents and lower overall star ratings—compared to facilities that remained open. These characteristics emerged as consistent risk factors in our models. Even the facilities incorrectly flagged by the model as high-risk (false positives) tended to share many of these risk features with actual closed facilities, reinforcing that the model was capturing a genuine profile of vulnerability. Taken together, these findings provide new evidence that nursing home closures are not random or unpredictable, but rather can be foreseen based on observable facility traits and trends, and that deep learning models leveraging longitudinal data offer superior performance in pinpointing at-risk nursing homes.

Another important aspect of our approach is the emphasis on Recall (Sensitivity) when predicting this rare event. Given the high class imbalance (only ~5% of records represented closures), our modeling prioritized capturing as many true closures as possible, even at the expense of some false positives. In practical terms, missing an impending closure (a false negative) is far more detrimental for nursing home regulators and residents than flagging a facility that ultimately stays open (analogous to how in disease screening a false negative can be worse than a false positive). By maximizing model Recall, we aimed to ensure that most facilities that will close are identified in advance. Indeed, our best models detected the majority of actual closures, confirming that a recall-oriented strategy is suitable for this context. The trade-off is a number of false-positive predictions, but our analysis reveals that these false-positive facilities were typically “near misses” in that they exhibited many of the same risk factors as true closures. For instance, a subset of the facilities the model falsely identified as likely to close were in fact on the Special Focus Facility (SFF) list or were SFF candidate (SFFC) facilities with well-documented quality and compliance issues. We found that 14 of the model’s false positives were designated SFF/SFFC facilities, and while only 5 of those ultimately did close during the study, their inclusion underlines that the model is effectively capturing markers of seriously poor quality of care. In essence, the model’s “false alarms” are often facilities under substantial distress (e.g., low census, poor quality ratings, regulatory trouble) that have so far avoided closure, potentially due to temporary interventions or lack of nearby competitors.
This observation illustrates that not all poorly performing nursing homes will close (some manage to continue operating, possibly due to external support or critical need in their community), but those that do close almost always come from the pool of troubled facilities. It also suggests that many false-positive facilities may remain closure risks in the longer term if underlying issues are not addressed. Overall, the consistency between our model’s risk flags and known indicators of nursing home instability provides confidence that the predictive patterns it learned are meaningful and not spurious. Additionally, the SFF program flags a limited number of facilities as poorly performing based primarily on quality and safety issues. By employing a broader set of characteristics, including market, financial, resident, quality, and other facility characteristics, the proposed model offers a more comprehensive perspective on nursing home performance and closure risk.
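One common way to operationalize a recall-oriented strategy is to pick the decision threshold that reaches a target Recall and then read off the Precision that follows. The sketch below is an illustration under stated assumptions, not the procedure used in this study: the function names, the risk scores, and the 0.75 target are all hypothetical.

```python
import math


def threshold_for_recall(y_true, scores, target_recall):
    """Highest score cut-off at which recall is at least target_recall.

    A facility is flagged when its score is >= the returned threshold.
    """
    if not 0 < target_recall <= 1:
        raise ValueError("target_recall must be in (0, 1]")
    closure_scores = sorted(
        (s for y, s in zip(y_true, scores) if y == 1), reverse=True
    )
    if not closure_scores:
        raise ValueError("no closures in y_true")
    # Number of true closures that must be flagged to meet the target.
    needed = math.ceil(target_recall * len(closure_scores))
    return closure_scores[needed - 1]


def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when flagging every score >= threshold."""
    flagged = [s >= threshold for s in scores]
    tp = sum(1 for y, f in zip(y_true, flagged) if y == 1 and f)
    fp = sum(1 for y, f in zip(y_true, flagged) if y == 0 and f)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / sum(y_true)
    return precision, recall


# Hypothetical model scores for ten facilities (1 = closed, 0 = stayed open).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.2, 0.8, 0.5, 0.3, 0.1, 0.1, 0.05]

threshold = threshold_for_recall(y_true, scores, target_recall=0.75)
precision, recall = precision_recall_at(y_true, scores, threshold)
print(threshold, precision, recall)
```

Lowering the threshold to capture the third closure also pulls in two open facilities with similar scores, which mirrors the "near miss" false positives described above.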

These findings carry several implications for state and federal regulators tasked with oversight of nursing homes. First, predictive analytics could be integrated into regulatory monitoring systems as an early warning tool for facility closure risk. Rather than waiting for public closure announcements or obvious signs of financial collapse, agencies like CMS or state regulators could use a model-based alert system to proactively identify nursing homes showing the telltale signs of distress (e.g., sustained low occupancy, quality declines). By receiving data-driven risk alerts perhaps a year in advance of a potential closure, regulators would have lead time to intervene or at least prepare. Second, armed with predictions of closure risk, policymakers can target interventions and resources more efficiently. Facilities flagged at high risk might be offered support such as temporary financial relief, management assistance, or specialized oversight to address deficiencies—measures that could stabilize them and prevent an avoidable closure. In cases where closure appears inevitable, early identification allows for well-prepared transition plans for residents (e.g., finding alternate placements, notifying families) to minimize disruption and negative health outcomes associated with sudden displacements. Third, our model highlights geographic areas of concern (rural and high-uninsured regions), which can inform broader policy strategies around health equity and access. Regulators could prioritize sustaining services in these vulnerable areas by, for example, incentivizing new providers to enter “nursing home desert” counties or expanding home and community-based services where nursing homes have shut down. In this way, predictive modeling can support a more equitable allocation of resources, ensuring that underserved communities are not left behind by unmonitored market forces.

This study has several limitations that warrant consideration. First, there are constraints in the data available. Important facility-level variables, particularly from the CMS cost reports (e.g., detailed financial performance metrics), contained substantial missing values. These data gaps required imputation and likely reduced the model’s accuracy in assessing financial distress, a key precursor to closure. Improving the completeness and quality of such data in the future would strengthen predictive power. Second, nursing home closure is an inherently imbalanced classification problem: only a small minority of facilities close, even among poorly performing facilities, which complicates model training. We applied SMOTE oversampling to help address this imbalance, but the underlying skewed distribution still means our positive predictive value is limited (i.e., many false positives) even though recall is high. The gap between the AUROC and the more pertinent metrics, such as AUPRC, Precision, and Recall, specifically in the out-of-sample set of closures, indicates that the precision-recall trade-off remains persistent. To address this to the extent possible, model training was geared toward optimizing Recall performance. In practice, this means the model’s alerts must be interpreted with caution: a flagged facility is not certain to close but rather should be seen as high-risk and in need of attention. Third, the best-performing models in our analysis were deep learning models (Bi-LSTM in particular) that operate as “black boxes.” These recurrent neural network models do not readily provide interpretable explanations for why a given facility is predicted to be at risk. This lack of transparency could pose challenges for adoption in policy settings, where stakeholders may demand a clear rationale for decisions.
While we attempted to gather insights by comparing characteristics of true closures versus false-positive predictions, this post-hoc analysis is not a substitute for built-in interpretability. Future work might explore the use of knowledge distillation models to balance predictive capability with explainability, ensuring regulators can trust and act on model outputs [28,29]. Finally, our analysis was based on data from 2011 to 2021. The long-term care sector has been in flux, especially with the COVID-19 pandemic driving an uptick in closures after 2020 [2]. Our model does not yet capture pandemic-era policy changes or unprecedented strains (e.g., workforce shortages, infection control costs) that have emerged in recent years. Thus, the model may require retraining and recalibration with post-2021 data to remain valid. Despite these limitations, we believe our approach provides a robust proof-of-concept for predicting closures, and the general patterns identified (occupancy, quality, etc.) are likely to hold true even as absolute risk levels shift over time.
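For readers unfamiliar with the SMOTE oversampling mentioned among the limitations, its core idea is to create synthetic minority-class rows by interpolating between a real minority row and one of its nearest minority neighbors. The following is a simplified, pure-Python sketch of that idea only; it is not the implementation used in this study, and the function name, feature columns, and values are hypothetical.

```python
import math
import random


def smote_sketch(minority, n_synthetic, k=3, seed=0):
    """Create n_synthetic rows by interpolating between minority-class rows.

    minority: list of equal-length numeric feature lists (closed facilities).
    k: number of nearest minority neighbours considered for each base row.
    """
    if len(minority) < 2:
        raise ValueError("need at least two minority rows")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_synthetic):
        i = rng.randrange(len(minority))
        base = minority[i]
        # Rank the other minority rows by Euclidean distance to the base row.
        others = [row for j, row in enumerate(minority) if j != i]
        others.sort(key=lambda row: math.dist(base, row))
        neighbour = rng.choice(others[:k])
        # Pick a random point on the segment between base and neighbour.
        gap = rng.random()
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic


# Hypothetical closed facilities: [occupancy rate, overall star rating].
closed = [[0.55, 2.0], [0.60, 1.0], [0.50, 2.0], [0.65, 1.0]]
new_rows = smote_sketch(closed, n_synthetic=6)
for row in new_rows:
    print([round(v, 3) for v in row])
```

Because every synthetic row lies between two real minority rows, oversampling of this kind rebalances the training set without adding new information about closures. It is normally applied to the training split only, so that evaluation still reflects the true closure rate.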

This study shows that nursing home closures, while rare, can be predicted with reasonable accuracy using advanced machine learning and deep learning methods applied to longitudinal data. Our findings highlight key risk factors, such as low occupancy, poor quality, and rural location, that consistently signal elevated closure risk. By identifying at-risk facilities in advance, predictive models can support more proactive oversight, targeted interventions, and equitable resource allocation. These tools offer a promising path toward strengthening the resilience and stability of long-term care in the U.S.
