Importance of harmonising educational measurements for comparability

Educational attainment is defined as the highest level of formal education an individual has completed, capturing key socioeconomic constructs such as human capital (skills and knowledge), cultural capital (cultural competence and resources), and socioeconomic status (position within social and economic hierarchies) (Schneider, 2009). It plays a central role in research both inside and outside the social sciences, serving as an independent or dependent variable, a proxy for other variables, or a control variable. In survey methodology, educational attainment is used to assess survey representativeness and to correct for non-response error through post-stratification weights (Lynn and Anghelescu, 2018; Ortmanns, 2020b). Given its importance, large-scale surveys prioritise high-quality educational measures while balancing flexibility and international comparability (Schneider, 2022).
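As a minimal illustration of the post-stratification use mentioned above, the sketch below weights each respondent by the ratio of the population share of their education category to that category's share in the sample. The category labels, the benchmark shares, and the function name `poststratification_weights` are hypothetical. Production weighting usually crosses education with other variables (for example age and sex) and may use raking rather than a single-variable adjustment.

```python
from collections import Counter

def poststratification_weights(sample_categories, population_shares):
    """Return one weight per respondent so that the weighted sample
    reproduces the population distribution of education.

    Weight of a cell = population share / sample share. Categories that
    occur in the population but not in the sample cannot be weighted
    and would need to be collapsed with a neighbouring category first.
    """
    n = len(sample_categories)
    counts = Counter(sample_categories)
    cell_weights = {
        category: population_shares[category] / (count / n)
        for category, count in counts.items()
    }
    return [cell_weights[category] for category in sample_categories]

# Hypothetical sample in which tertiary graduates are over-represented
sample = ["tertiary"] * 6 + ["secondary"] * 4
benchmark = {"tertiary": 0.3, "secondary": 0.7}  # e.g. census or EU-LFS shares
weights = poststratification_weights(sample, benchmark)
```

With six tertiary-educated and four secondary-educated respondents and benchmark shares of 30% and 70%, tertiary respondents receive a weight of 0.5 and secondary respondents a weight of 1.75, so the weighted sample reproduces the benchmark distribution.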

However, achieving international comparability in measuring educational attainment is challenging due to the inherent diversity of educational systems. These systems differ in institutions, certificates, and structures, shaped by historical and cultural factors, complicating direct comparisons (Ortmanns, 2020b; Schneider, 2009). Harmonisation schemes such as ISCED (UNESCO, 2012), CASMIN (Brauns et al., 2003), ES-ISCED from the European Social Survey (Schneider, 2010b, 2016) or GISCED (Schneider, 2022) aim to bridge these differences. These schemes strive to ensure construct validity while enabling meaningful cross-national comparisons of educational attainment.

The importance of these harmonisation efforts is underscored by persistent inconsistencies in the distributions of educational attainment across surveys. Schneider (2009) identified such inconsistencies by comparing data from the European Union Labour Force Survey, the European Union Statistics on Income and Living Conditions, and the ESS across 26 European countries and multiple years. Ortmanns and Schneider (2016) found similar issues in other international surveys, such as the International Social Survey Programme, the European Values Study, and the Eurobarometer. Further, Ortmanns (2020a) showed that the inconsistencies observed across these and other surveys, such as the Adult Education Survey, the European Quality of Life Survey, and the European Working Conditions Survey, stemmed primarily from mapping errors and differences in response categories, even after accounting for other potential sources of error. These findings have prompted revisions of harmonisation protocols and instruments and have changed how education is measured.

The impact of harmonisation on survey measurement instruments

The introduction of harmonisation frameworks for measuring education has necessitated a close examination of the design of measurement instruments. Comparability in educational measures is enhanced when harmonisation is embedded into the instrument design through ex-ante harmonisation, ensuring consistency across countries before data collection begins (Granda et al., 2010). This approach minimises the challenges associated with harmonising data retrospectively, which often involves reconciling incomplete or ambiguous information (Schneider, 2022). As a result, some surveys have revised or redesigned their instruments to incorporate harmonisation principles effectively.

A prominent example of such adaptation is the European Social Survey (ESS), which undertook a comprehensive revision of its educational measurement for its fifth round of data collection. This process involved collaboration with national and international experts in education, the tailoring of ISCED to European societies, and the implementation of standardised harmonisation procedures across participating countries. Additionally, the national instruments, known as Country-Specific Educational Variables (CSEVs), were refined to align with these harmonised standards (Schneider, 2010a). Post-revision analyses of the distribution of educational attainment in the ESS indicate improved comparability, suggesting that ex-ante harmonisation is effective in improving data quality (Ortmanns and Schneider, 2016).

One notable outcome of this harmonisation effort was the development of more nuanced educational measures that capture programme orientation, duration, and educational outcomes. Before harmonisation, some distinctions between qualifications were omitted from national instruments because of their limited relevance in specific national contexts. For instance, vocational education might be deemed less significant in some countries, yet comparative studies of its societal impact require consistent, harmonised measurement across nations (Smyth and Steinmetz, 2015).

Another significant implication of the harmonisation framework has been a shift toward instruments that classify education by the highest qualification completed rather than by years of education (Ortmanns, 2020b). Empirical evidence supports this approach: educational classifications exhibit higher construct validity and cross-national comparability than years of education. Frameworks such as ES-ISCED, the ESS version of ISCED, and its more detailed form, EDULVLB, are designed specifically for research purposes, which further enhances the reliability and applicability of these measures in comparative analysis (Schneider, 2010b).

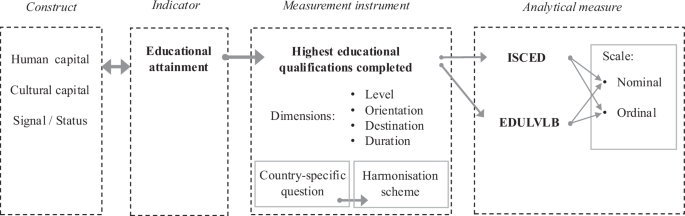

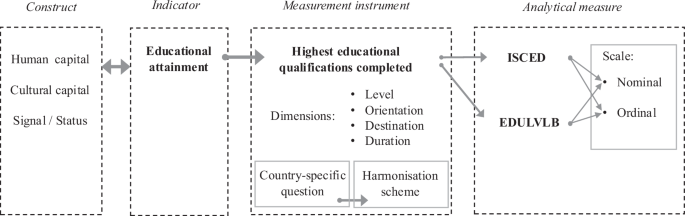

Figure 1, adapted from Schneider (2009, p. 57), illustrates the process of operationalising educational attainment for comparable measurement using educational classifications. The measurement instrument serves as a bridge, linking the target indicator of educational attainment (to ensure construct validity) with a harmonisation framework (to achieve comparability in the analytical measure). The instrument captures the highest educational qualification completed by respondents, using categories tailored to the specific country’s educational system. Additionally, it records detailed information on the dimensions required for harmonisation with international standards: level, orientation, destination, and duration, which vary according to the characteristics of each educational system. The outcome is a harmonised educational classification that functions as a nominal or ordinal scale of educational attainment.

Adapted from Schneider (2009, p. 57).
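To make the process in Figure 1 more concrete, the following Python sketch represents each country-specific response option by some of the harmonisation dimensions and maps it to a harmonised category. The national options, the `NationalQualification` fields, and the mapping rules are hypothetical simplifications loosely modelled on ES-ISCED-style categories (I, II, IIIb, IIIa, IV, V1, V2). They are not the official ES-ISCED coding rules, and duration is omitted for brevity.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class NationalQualification:
    """One response option of a hypothetical country-specific education question."""
    label: str
    level: int             # ISCED-like level 0-8, assumed to be assigned by national experts
    orientation: str       # "general" or "vocational"
    tertiary_access: bool  # destination: does the qualification give access to tertiary education?

def harmonise(q: NationalQualification) -> str:
    """Map a national option to a simplified harmonised category (illustrative rules only)."""
    if q.level <= 1:
        return "I"      # less than lower secondary
    if q.level == 2:
        return "II"     # lower secondary
    if q.level == 3:
        # the destination dimension splits upper secondary into two tiers
        return "IIIa" if q.tertiary_access else "IIIb"
    if q.level in (4, 5):
        return "IV"     # sub-degree (simplified)
    if q.level == 6:
        return "V1"     # bachelor level
    return "V2"         # master level or higher

def harmonise_detailed(q: NationalQualification) -> tuple[str, str]:
    """Keep orientation alongside the harmonised level, as a more detailed variant might."""
    return harmonise(q), q.orientation

# Hypothetical response options of a national instrument
options = [
    NationalQualification("Compulsory schooling only", 2, "general", False),
    NationalQualification("Apprenticeship certificate", 3, "vocational", False),
    NationalQualification("Upper secondary school-leaving diploma", 3, "general", True),
    NationalQualification("Bachelor's degree", 6, "general", True),
]
for q in options:
    print(f"{q.label:40} -> {harmonise_detailed(q)}")
```

The design choice illustrated here is that respondents only ever see national labels, while the dimensions needed for harmonisation are attached to each option in advance, which is the core idea of ex-ante harmonisation.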

Changes affecting the reliability of measurement

Achieving ex-ante harmonised measurement requires capturing detailed information about the highest educational qualification completed. Broad categories, such as “university degree,” are too vague to capture the diversity of qualifications granted by universities. In practice, this often requires expanding the list of response categories or adding more detailed descriptions to the options presented to respondents. While this increased detail can improve accuracy, it may also complicate the response process, especially if respondents struggle to distinguish between similar levels of education.

Changes in the length and complexity of survey questions can also affect the reliability of educational measurement. Generally, longer questions and questions with a larger number of answer categories are less reliable (Alwin, 2007; Alwin et al., 2018). However, as a factual question, educational attainment tends to exhibit higher reliability compared to subjective or attitudinal questions (Alwin, 2007; Hout and Hastings, 2016). Previous studies have highlighted the challenges posed by instruments with long lists of educational qualifications. While alternative approaches aim to reduce respondents’ burden and maintain validity and comparability, these solutions also encounter limitations (Herzing, 2020; Schneider et al., 2016, 2018).

The European Social Survey (ESS) illustrates the effects of harmonisation on the complexity of educational measurement. In Slovenia, the Country-Specific Educational Variable (CSEV) had 7 answer categories and 38 words in Round 1. By Round 8, this expanded to 13 categories with 340 words. Similarly, Estonia’s initial participation in Round 2 included 13 answer options with a word count of 53 in Estonian and 73 in Russian. By Round 8, the number of options rose to 15, with word counts increasing to 103 in Estonian and 170 in Russian. In the United Kingdom, Round 1 included 5 options with 161 words, while Round 8 had 18 options split across two lists, totalling 322 words. These examples suggest harmonisation efforts have increased the complexity of response options, both in terms of the number of categories and the length of descriptions.
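The arithmetic behind these comparisons can be made explicit. The short Python sketch below uses only the category and word counts reported above (for Estonia, the first round is Round 2) and computes growth factors and the average number of words per answer category. The function name `growth` is illustrative.

```python
# Category and word counts reported above for the ESS country-specific education question.
# Each entry: (categories, words) in the first round, (categories, words) in Round 8
csev = {
    "Slovenia": ((7, 38), (13, 340)),
    "Estonia (Estonian)": ((13, 53), (15, 103)),
    "Estonia (Russian)": ((13, 73), (15, 170)),
    "United Kingdom": ((5, 161), (18, 322)),
}

def growth(first, last):
    """Return growth factors for categories and words, plus words per category."""
    (c0, w0), (c1, w1) = first, last
    return {
        "category_factor": c1 / c0,
        "word_factor": w1 / w0,
        "words_per_category": (w0 / c0, w1 / c1),
    }

for country, (first, last) in csev.items():
    g = growth(first, last)
    wpc0, wpc1 = g["words_per_category"]
    print(f"{country}: categories x{g['category_factor']:.2f}, "
          f"words x{g['word_factor']:.2f}, words/category {wpc0:.1f} -> {wpc1:.1f}")
```

Run as written, it shows that Slovenia's wording grew roughly ninefold (from about 5 to 26 words per category), whereas in the United Kingdom the number of categories more than tripled while the average wording per category fell from about 32 to 18 words.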

Current and future developments in survey methodology present new challenges for ensuring the comparability of educational measurements. Survey methodologists expect mixed-mode data collection to be adopted increasingly, driven by the aims of improving response rates, enhancing data quality, and reducing costs (Couper, 2011; DeLeeuw, 2018). The COVID-19 pandemic has accelerated this shift, exposing the vulnerabilities of relying solely on face-to-face data collection and encouraging a broader evaluation of alternative methods (Gummer et al., 2020; Scherpenzeel et al., 2020). Even the ESS, which has long adhered to face-to-face data collection as a strict standard, plans to incorporate self-completion and mixed-device approaches in the near future (European Social Survey, 2024).

As these changes unfold, survey users and social science researchers will expect educational qualification measures to be sufficiently reliable across modes to ensure comparability. Additionally, survey methodologists will rely on educational variables to evaluate sample composition across modes. The comparability of educational measurements across collection modes will directly influence the quality of both substantive and methodological research that depends on these variables for their conclusions. Ensuring robust measurement across modes is, therefore, critical for the continued utility of educational data in cross-national surveys.

How reliable are harmonised survey instruments based on educational classifications?

Despite the crucial role of educational attainment data, few studies have assessed the reliability of educational measures at the level of individual respondents. Porst and Zeifang (1987) analysed the reliability of socio-demographic variables using the German General Social Survey (ALLBUS) and found only minimally acceptable reliability for occupational education, with Kendall’s tau as low as 0.72 (Porst and Zeifang, 1987, pp. 194–196). Although this study captured the orientation of education by including vocational education, its classifications of educational attainment were relatively broad compared to those used in internationally harmonised instruments. Its results are therefore of limited comparability with the ex-ante harmonised instruments used in contemporary surveys.
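For readers less familiar with the coefficient, a test-retest study with an ordinal education variable can be summarised with Kendall's tau-b. It compares every pair of respondents, counts whether their ordering on the first and second measurement agrees (concordant) or disagrees (discordant), and corrects for ties. The sketch below is a plain O(n²) implementation for illustration with hypothetical codes; it is not stated here whether Porst and Zeifang used exactly the tau-b variant. In practice, `scipy.stats.kendalltau` computes tau-b by default.

```python
from itertools import combinations
from math import sqrt

def kendall_tau_b(first, second):
    """Kendall's tau-b between two ordinal measurements of the same respondents.

    tau_b = (C - D) / sqrt(P_x * P_y), where C and D count concordant and
    discordant pairs, and P_x, P_y count pairs not tied on each measurement.
    """
    if len(first) != len(second):
        raise ValueError("both measurements must cover the same respondents")
    concordant = discordant = untied_first = untied_second = 0
    for (a1, b1), (a2, b2) in combinations(zip(first, second), 2):
        d_first = (a1 > a2) - (a1 < a2)     # sign of the difference at time 1
        d_second = (b1 > b2) - (b1 < b2)    # sign of the difference at time 2
        untied_first += d_first != 0
        untied_second += d_second != 0
        if d_first * d_second > 0:
            concordant += 1
        elif d_first * d_second < 0:
            discordant += 1
    denominator = sqrt(untied_first * untied_second)
    if denominator == 0:
        raise ValueError("tau-b is undefined when one measurement is constant")
    return (concordant - discordant) / denominator

# Hypothetical education levels (1 = lowest) reported by eight respondents twice
time1 = [1, 2, 2, 3, 3, 4, 5, 5]
time2 = [1, 2, 3, 3, 2, 4, 5, 4]
print(round(kendall_tau_b(time1, time2), 3))
```

Tau-b equals 1 when both measurements rank respondents identically and falls as respondents move between levels from one occasion to the next.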

Hout and Hastings (2016) investigated the reliability of years of education using repeated measurements from the U.S. General Social Survey (GSS), reporting high reliability for this measure. However, prior research has highlighted both conceptual and empirical limitations of years of education as a measure of educational attainment for international comparisons (Schneider, 2009, 2010b).

Schneider (2009), Ortmanns and Schneider (2016), and Ortmanns (2020a) extensively evaluated the aggregate-level stability of educational measurements across multiple large-scale international surveys. These studies compared the distributions of educational attainment, coded in ISCED, across different surveys and rounds. Inconsistencies in distributions were quantified using the Duncan Dissimilarity Index (Duncan and Duncan, 1955), which measures the proportion of cases that would need to be reclassified for two distributions to become identical.
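Formally, for two distributions with category shares p_k and q_k, the index is D = ½ Σ_k |p_k − q_k|, ranging from 0 (identical distributions) to 1 (no overlap). The Python sketch below computes it from lists of harmonised category codes; the function names and the example codes are hypothetical.

```python
from collections import Counter

def distribution(codes):
    """Relative frequency of each category in a list of category codes."""
    counts = Counter(codes)
    n = len(codes)
    return {category: count / n for category, count in counts.items()}

def dissimilarity_index(dist_a, dist_b):
    """Duncan's index: half the sum of absolute differences in category shares.

    Interpretable as the share of cases that would have to change category
    for the two distributions to become identical.
    """
    categories = set(dist_a) | set(dist_b)
    return 0.5 * sum(abs(dist_a.get(c, 0.0) - dist_b.get(c, 0.0)) for c in categories)

# Hypothetical ES-ISCED-style codes from two survey rounds of the same country
round_a = ["II", "IIIa", "IIIa", "IIIb", "V1", "V2", "IIIb", "II", "V1", "IIIa"]
round_b = ["II", "IIIa", "IIIb", "IIIb", "V1", "V1", "IIIb", "II", "V1", "IIIa"]
print(dissimilarity_index(distribution(round_a), distribution(round_b)))
```

In this toy example the shares of IIIa, IIIb, V1, and V2 each differ by 10 percentage points, so the index is about 0.2: roughly 20% of cases would have to be reclassified for the two distributions to match.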

Ortmanns and Schneider (2016) analysed data from 2002 to 2008 across 34 countries using the European Social Survey (ESS), the Eurobarometer (EB), and the International Social Survey Programme (ISSP). They found that, on average, 8.6% of respondents in each sample would need to change their educational classification to achieve consistency with the distribution of the previous wave. The degree of inconsistency varied across both countries and surveys, with the ISSP showing the highest average dissimilarity. Some countries reached values as high as 16%, while others were as low as 3% (Ortmanns and Schneider, 2016, pp. 573–574). Ortmanns (2020a) compared data from European countries to the European Union Labour Force Survey (EU-LFS) as an external benchmark. The average dissimilarity was around 13% across several survey programmes, which included the European Union Statistics on Income and Living Conditions (EU-SILC), the European Values Study (EVS), the Programme for the International Assessment of Adult Competencies (PIAAC), the Adult Education Survey (AES), the European Quality of Life Survey (EQLS), the European Working Conditions Survey (EWCS), as well as the EB, ISSP, and ESS (Ortmanns, 2020a, p. 385).

The underlying assumption of these aggregate-level studies of educational measurement is that valid and reliable measurements should produce similar educational distributions across representative surveys of the same country and year, as well as over time, with only gradual changes expected. One possible explanation for observed inconsistencies is low reliability at the individual level. However, other factors can also lead to distributional inconsistencies, including errors in harmonisation or mapping procedures, and issues related to representativeness, such as sampling error and non-response bias (Ortmanns, 2020a). Importantly, aggregate-level analyses cannot assess the reliability of the measurement itself, but only the consistency of the resulting category distributions within the sample across surveys or over time.

Aggregate analyses cannot detect individual-level inconsistencies or the effects of random measurement error, which are better captured through test-retest reliability studies (Schermelleh-Engel and Werner, 2012). While equal distributions at the aggregate level may suggest stability, they can mask unstable individual-level measurements, allowing random errors to go undetected. For instance, even if every respondent were to report a different educational level at the second measurement point, the overall distribution could remain identical if the number of cases in each category stayed the same. In such a scenario, the measurement would be completely unreliable at the individual level, despite appearing consistent in aggregate. This highlights the importance of test-retest studies for evaluating the reliability of harmonised educational measurements and ensuring their robustness for both individual-level and aggregate analyses.
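The scenario described above can be reproduced with a toy example. In the hypothetical test-retest data below, every respondent reports a different category at the second measurement, yet each category keeps the same number of cases. Duncan's index is therefore zero, while the share of respondents giving the same answer twice is also zero. Raw agreement is used here only as the simplest individual-level check; it is not a substitute for a reliability coefficient such as Kendall's tau-b or Cohen's kappa.

```python
from collections import Counter

def dissimilarity_index(a, b):
    """Duncan's index between the category distributions of two lists of codes."""
    ca, cb = Counter(a), Counter(b)
    return 0.5 * sum(abs(ca[c] / len(a) - cb[c] / len(b)) for c in set(ca) | set(cb))

def agreement_rate(a, b):
    """Share of respondents who report the same category on both occasions."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

# Hypothetical test-retest data: every respondent changes category,
# but each category contains two cases on both occasions.
time1 = ["I", "II", "III", "I", "II", "III"]
time2 = ["II", "III", "I", "II", "III", "I"]

print(dissimilarity_index(time1, time2))  # 0.0: aggregate distributions identical
print(agreement_rate(time1, time2))       # 0.0: no respondent answers consistently
```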
