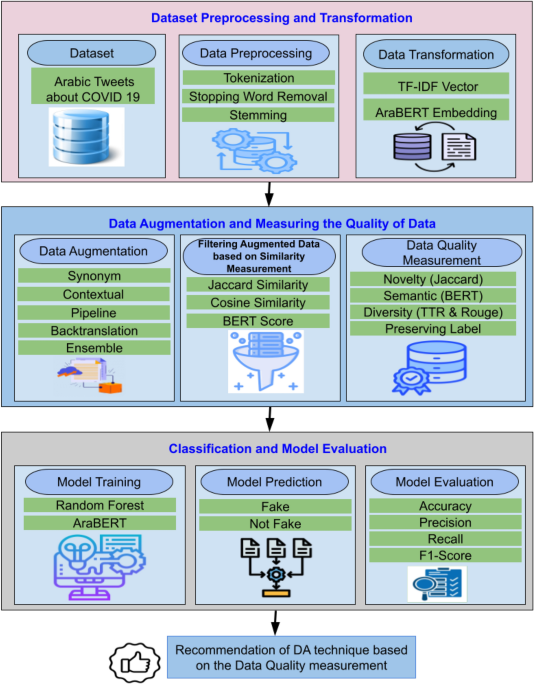

Augmentation techniques are applied to generate new tweets from the training data. Generated tweets retain the labels of their corresponding original tweets. To establish a baseline, we first train the model on the original training set and evaluate it on the unaltered test set. The augmented tweets are then added to the original training data, resulting in a non-filtered dataset. The model is retrained on this augmented dataset, and the results are compared with those obtained on the original dataset. To support text processing and augmentation, the proposed implementation integrates the NLP augmentation library from ref. 75. The paper presents several experiments, each with a different objective, as follows:

- (a) Experiment A: Analyzing the effects of augmentation on the classification performance of fake news. RF and AraBERT classifiers are applied to the original and augmented datasets.
- (b) Experiment B: Determining and establishing the best threshold for the similarity between the original Arabic tweet and the augmented tweet. The augmented dataset is filtered according to threshold values for cosine similarity and BERTScore measures. To evaluate the effect of contextual augmentation, we compared the classification results with those obtained using cosine and score similarity measures.
- (c) Experiment C: Evaluating data quality of the suggested augmentation methods regarding label preserving, novelty, diversity, and semantics. Additionally, determine whether the proposed methods consider the context of the augmentation process.
- (d) Experiment D: Evaluating the effect of a proposed ensemble augmentation approach on Arabic Healthcare classification performance.
- (e) Comparison between our proposed approach and the previous approaches that use the same dataset.

Experiment A: assessing the effectiveness of the augmentation process in identifying fake Arabic news

This experiment evaluates two classification models, RF and AraBERT, based on augmented datasets that have been subjected to various augmentation techniques. The similarity score is ignored when merging all newly created data with the original dataset. To ensure a fair comparison between different DA techniques, the same number of augmented tweets (1,203 tweets) was generated and used in this experiment.

Table 5 displays the classification results for the original dataset, which serve as our baseline. Tables 6 and 7 present the results of the RF and AraBERT classifiers (using original \(+\) new augmented tweets). Eight augmentation techniques (WordAntonym, WordNet, TFIDF, WordEmbeddings_BERTBase, WordEmbeddings_DistilBERT, WordEmbeddings_RoBERTa, pipline_random, and pipline_sequential) are applied to the original dataset to provide a more comprehensive analysis. To keep the dataset balanced, the augmentation process adds the same number of newly generated tweets to each class.
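The balanced, label-inheriting augmentation step described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `augment_balanced` and `toy_augment` are hypothetical names, and the toy augmenter merely stands in for a real DA technique.

```python
import random
from collections import defaultdict


def augment_balanced(samples, augment, per_class, seed=0):
    """Generate `per_class` augmented (text, label) pairs for every label.

    `samples` is a list of (text, label) pairs and `augment` is any callable
    mapping a text to a new text (a placeholder for a DA technique).
    Each generated text inherits the label of its source tweet.
    """
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for text, label in samples:
        by_label[label].append(text)

    generated = []
    for label, texts in by_label.items():
        for _ in range(per_class):
            source = rng.choice(texts)
            generated.append((augment(source), label))
    return generated


def toy_augment(text):
    # Stand-in only: a real technique would substitute words in the tweet.
    return text + " [aug]"


original = [("tweet a", "real"), ("tweet b", "fake"), ("tweet c", "fake")]
new = augment_balanced(original, toy_augment, per_class=2)

# The non-filtered dataset is the original data plus all generated tweets.
non_filtered = original + new
```

Because the same `per_class` count is used for every label, the class balance of the added tweets does not depend on how many original tweets each class contains.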

Table 6 shows the results of RF for the Not-Filtered Dataset. RF’s performance improves significantly with augmentation, especially with the WordAntonym method, surpassing the results on the original dataset. WordAntonym achieved the highest performance across all metrics, with an accuracy of 92.45%, precision of 93%, recall of 92%, and an F-score of 92%. The TF-IDF method performed worst of the four methods, with an accuracy of 87.17% and precision, recall, and F-score of 87%. Table 7 displays the results of the AraBERT model for the Not-Filtered Dataset. Here, the WordNet DA method achieves higher accuracy than the other methods.

For both RF and AraBERT classifiers, the performance of the augmented dataset is better than that of the original dataset, suggesting that augmentation may be a valuable means of improving the classification performance.

AraBERT Performance Evaluation using Augmented Dataset.

According to Tables 6 and 7, the AraBERT classifier outperforms the RF model for all augmentation strategies. It outperforms RF by 2.68% for WordAntonym and 5.62% for WordNet. Additionally, while both models receive the same number of tweets through augmentation, AraBERT uses the augmented data more effectively, achieving a higher score.

Additional experiments were conducted for the RF and AraBERT models to detect potential model overfitting. The RF model was retrained using 5-fold cross-validation on both the original dataset and an augmented dataset containing generated tweets, as presented in Table 8. For the AraBERT model, we ran 15 epochs on the original dataset after adding the augmented tweets. Figure 4 illustrates the training and validation accuracy over the 15 epochs.
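To make the 5-fold procedure concrete, the sketch below shows how fold indices can be generated so that every sample is used for validation exactly once. It is an illustrative assumption rather than the code used in the study: `k_fold_indices` is a hypothetical helper, the folds are contiguous, and in practice the data would usually be shuffled or stratified by class first, typically with a library routine.

```python
def k_fold_indices(n_samples, k=5):
    """Yield (train_indices, validation_indices) for k contiguous folds.

    The first `n_samples % k` folds receive one extra sample so that all
    samples are covered when n_samples is not divisible by k.
    """
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        stop = start + size
        validation = list(range(start, stop))
        train = list(range(0, start)) + list(range(stop, n_samples))
        yield train, validation
        start = stop


folds = list(k_fold_indices(12, k=5))
for fold_number, (train, validation) in enumerate(folds, start=1):
    print(f"fold {fold_number}: {len(train)} training, {len(validation)} validation samples")
```

A model would be fitted on each `train` split and scored on the matching `validation` split; large gaps between fold scores, or between training and validation scores, would point to overfitting.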

Based on the performance across all folds, as illustrated in Table 8 and Fig. 4, the augmentation technique improves model generalization without introducing biases or redundancies. The results indicate that the RF model does not exhibit overfitting during training, and the test results are robust and reliable. Additionally, the training and validation accuracy of the AraBERT model improved throughout the epochs, indicating that the model continued to perform well after the inclusion of augmented tweets.

Overall, the replacement techniques (WordAntonym, WordNet) perform better than others. This is because they do not alter the context of the input tweet; instead, they replace words with related terms or synonyms from the WordNet dictionary. In contrast, contextual word embedding techniques achieve lower performance in classification tasks. These techniques rely on pre-trained deep-learning models to replace words in the original tweets based on contextual understanding. However, since most tweets are short, they do not provide sufficient context for these models. Additionally, their performance depends on the size and domain of the corpus used for training. As a result, these models often generate tweets with different contexts and lower similarity to the original ones. Pipeline-based augmentation was tested but proved to be significantly less effective than alternative augmentation methods, with a maximum accuracy of 82.61%. Consequently, it was excluded from subsequent analyses to focus on more effective techniques that yielded better results.

Experiment B: the impact of similarity threshold on classification performance

In this section, we systematically analyze the impact of similarity-based filtering on classification outcomes and on the models’ overall effectiveness. Preliminary research suggests that generating data with a higher similarity threshold can reduce noise and thus improve precision at the expense of recall. Conversely, a lower similarity threshold admits sentences with more changes; recall may improve, but accuracy may suffer as duplicate or irrelevant data is added. Choosing a similarity threshold therefore involves a trade-off between these measures, which also affects the models’ long-term classification performance.

Based on the results of Experiment A, we selected the two best-performing DA techniques, Word_Antonym and WordNet, to investigate the impact of similarity on augmentation performance. Table 9 displays the RF results on the dataset of tweets generated by the Word_Antonym method and filtered by BERT similarity. As Table 9 shows, the threshold range (0.4–1.0) outperforms the others, as it yields more tweets. Table 10 presents the corresponding RF results for the WordNet technique. Here too, the threshold range (0.4–1.0) outperforms the others and yields the highest number of tweets.
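The filtering step itself is simple once each augmented tweet carries a similarity score against its original. The following sketch assumes the scores have already been computed; `filter_by_similarity` and the toy data are hypothetical and only illustrate how a threshold range changes the number of retained tweets.

```python
def filter_by_similarity(scored, low, high=1.0):
    """Keep augmented tweets whose similarity score lies in [low, high].

    `scored` is a list of (text, label, score) triples, where `score` is the
    similarity between the augmented tweet and its original.
    """
    return [(text, label) for text, label, score in scored if low <= score <= high]


scored = [
    ("aug 1", "fake", 0.35),
    ("aug 2", "real", 0.55),
    ("aug 3", "fake", 0.92),
]

for low in (0.4, 0.5, 0.8):
    kept = filter_by_similarity(scored, low)
    print(f"{low:.1f}-1.0 keeps {len(kept)} tweets")
```

Raising `low` can only shrink the retained set, which is the volume-versus-similarity trade-off discussed in this experiment.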

Table 11 presents the results of the AraBERT model on the dataset of tweets generated by the Word_Antonym method and filtered by BERT similarity. With Word_Antonym augmentation, AraBERT achieves its best accuracy, 97.22%, for the range \(0.5 \le \text{Score} \le 1.0\), which is therefore the ideal range for accurate predictions. This range also comprises the most tweets (1125). With high similarity scores only (greater than 0.8), AraBERT achieves an accuracy of 87.85%, which indicates diminishing returns. Table 12 presents the corresponding AraBERT results for the WordNet method. Here, the threshold range (0.4–1.0) surpasses the others and yields more tweets than the other threshold ranges.

From Tables 9–12, we can observe that a balance between similarity and growth (the number of generated tweets) is associated with higher classification performance. Classification at specific similarity ranges (0.4–1.0 and 0.5–1.0) outperforms the others, because the newly generated sentences are not excessively similar to the original corpus within the same class label. As shown in Experiments A and B, the RF model improved from 92.45% without similarity filtering to 93.58% with similarity filtering. We conclude that weighing tweet volume against accuracy provides a strategic direction for refining models to optimize performance and data utility. In general, similarity-based filtering can benefit both RF and AraBERT models.

Experiment C: evaluating the data quality of augmentation techniques in terms of preserving label, semantics, novelty, and diversity

Through experiment C, our goal was to evaluate the quality of sentences generated by the different data augmentation techniques. This evaluation employs a combination of metrics, including the ability to preserve class labels, semantics, diversity, and novelty.

BERTScore Similarity Score Distribution.

To assess the ability of the DA techniques to preserve semantics, we compare the classification results based on cosine similarity (text match) with those based on BERTScore similarity (semantic match). The filtering process is therefore repeated using cosine similarity, as shown in Tables 13 and 14. Table 13 presents the classification results when filtering the augmented sentences produced by WordAntonym. As the similarity threshold increases, classification performance steadily improves, peaking around the 0.6–1.0 range (accuracy: 81.13%, F1-score: 81%). Table 14 shows the text classification results using augmented data produced by WordNet and filtered by cosine similarity thresholds. The mid-range similarity criterion (0.6–1.0) yields the best performance, with 80% accuracy and F1-score, indicating that augmented sentences within this range are more useful to the model because they are semantically similar to the original sentences. Performance declines slightly at the higher threshold (0.8–1.0) (accuracy: 78.09%, F1: 78%), as the stricter constraint restricts diversity and forfeits augmentation gains.

Based on the results in both tables, semantic quality control using cosine similarity enhances the efficacy of data augmentation. However, there is a trade-off between dataset size and semantic purity. The best augmentation occurs at a moderate similarity threshold (0.6–1.0), where the model achieves both performance and generalization. According to these findings, sentence-level augmentation requires careful adjustment of similarity filters to balance the quantity and quality of the data.
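For readers unfamiliar with the text-match measure, the sketch below computes cosine similarity between two tweets. The vectorization is an assumption made for illustration (whitespace tokens and raw term counts); the source does not state how the vectors were built, and `cosine_similarity` is a hypothetical helper name.

```python
import math
from collections import Counter


def cosine_similarity(text_a, text_b):
    """Cosine similarity between bag-of-words count vectors of two texts.

    Assumption: tokens are whitespace-separated and weighted by raw counts.
    """
    vec_a, vec_b = Counter(text_a.split()), Counter(text_b.split())
    dot = sum(vec_a[token] * vec_b[token] for token in vec_a.keys() & vec_b.keys())
    norm_a = math.sqrt(sum(count * count for count in vec_a.values()))
    norm_b = math.sqrt(sum(count * count for count in vec_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


original = "the vaccine is safe"
augmented = "the vaccine is harmless"
score = cosine_similarity(original, augmented)
print(f"cosine similarity: {score:.2f}")
```

Because this measure only counts shared surface tokens, a synonym substitution lowers the score even when the meaning is unchanged, which is one reason a context-aware measure such as BERTScore can behave differently.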

Comparing Tables 9 and 10 with Tables 13 and 14 shows that BERT similarity outperforms cosine similarity in preserving semantic integrity during augmentation. Accuracy with WordNet-based augmentation declined from 90.94% to 80% under cosine similarity filtering, while accuracy with Word_Antonym-based augmentation dropped from 93.58% to 81.13%, showing that BERT similarity is more effective at maintaining semantics. This advantage leads to improved classification performance.

Figure 5 shows the similarity between the original and augmented tweets based on BERTScore. The x-axis of each histogram gives the BERTScore value, which reflects the semantic similarity between the original and augmented text. The y-axis gives the frequency of each BERTScore value, that is, how often each similarity level occurs in the “fake” class samples from both the original and augmented datasets. By using BERTScore (Fig. 5a through 5d), we demonstrate how context-aware evaluation provides a more nuanced understanding of text similarity. This approach better captures the semantic relationships within the text, thus improving the classification model’s overall performance.

Comparison of semantic (BERT) Precision, Recall, and F1-Score for Different Augmentation Techniques.

To assess the ability of the DA technique to provide novel data, the average novelty score is calculated for different augmented datasets using the Jaccard similarity metric, as shown in Fig. 6. In text augmentation, it is crucial to strike a balance between semantic similarity and semantic novelty. Higher semantic novelty scores (closer to 1) generally reflect a more significant semantic difference between the original and augmented text. The threshold for what constitutes “significant” novelty varies depending on the specific task and desired level of augmentation.
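One plausible way to turn Jaccard similarity into a novelty score is to take its complement, so that values closer to 1 indicate more new material. The sketch below makes that assumption explicit; the exact formula used in the study is not given in this section, and the helper names and whitespace tokenization are hypothetical.

```python
def jaccard_similarity(text_a, text_b):
    """Jaccard similarity between the sets of whitespace tokens of two texts."""
    set_a, set_b = set(text_a.split()), set(text_b.split())
    union = set_a | set_b
    if not union:
        return 1.0  # two empty texts are treated as identical
    return len(set_a & set_b) / len(union)


def novelty(original, augmented):
    # Assumption: novelty is defined as 1 - Jaccard similarity.
    return 1.0 - jaccard_similarity(original, augmented)


def average_novelty(pairs):
    """Mean novelty over (original, augmented) pairs."""
    return sum(novelty(o, a) for o, a in pairs) / len(pairs)


pairs = [
    ("a b c", "a b d"),   # one word replaced
    ("a b", "a b"),       # unchanged
]
print(f"average novelty: {average_novelty(pairs):.2f}")
```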

Novelty of Data Generated by DA techniques.

Lexical and semantic novelty scores measure the variation in word choice and meaning relative to the original text. A balance between novelty and relevance is maintained to eliminate redundant data, ensuring that the augmented text adds useful variants without altering the original meaning or context. Figure 7 summarizes the scores obtained for these metrics. Some DA techniques, such as TF-IDF and WordNet, introduce new words into the original data, whereas WordAntonym and BackTranslate introduce fewer new words but preserve the original context and meaning.

Semantic similarity assesses how closely related two pieces of text are in terms of meaning. In contrast, semantic novelty measures the degree to which the meaning of a piece of text differs from that of another. The Jaccard similarity metric assesses the degree of uniqueness introduced by augmentation techniques and its potential impact on model performance. Typically, as semantic similarity increases, semantic novelty decreases, and vice versa, because of the trade-off between maintaining meaning and introducing new concepts. A larger cosine distance in Fig. 7 indicates greater semantic dissimilarity and potentially greater novelty.

The augmentation strategies are also evaluated on how well they retain important vocabulary, relevant phrases, and logical text structure, as is done when assessing the quality of machine-generated translations. TTR and ROUGE scores are measured for the applied DA techniques. TTR provides indirect information on the variety of the generated text: it is the ratio of unique words to the total number of words in the text. A low TTR score may indicate a lack of diversity, whereas a higher TTR score means the data contains more diverse words. The ROUGE-1, ROUGE-2, and ROUGE-3 scores measure several characteristics of the overlap between augmented and reference texts: ROUGE-1 examines single words, ROUGE-2 examines word pairs or brief phrases, and ROUGE-3 considers the general organization and flow of the text.
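The two diversity measures can be illustrated with a short sketch. It assumes whitespace tokenization and uses the recall form of ROUGE-N (overlapping n-grams divided by n-grams in the reference); the helper names are hypothetical, and the study may have relied on a library implementation with different defaults.

```python
from collections import Counter


def type_token_ratio(text):
    """Ratio of unique tokens to total tokens (0.0 for empty text)."""
    tokens = text.split()
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def ngram_counts(tokens, n):
    """Multiset of n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n_recall(reference, candidate, n):
    """Share of reference n-grams that also appear in the candidate."""
    ref = ngram_counts(reference.split(), n)
    cand = ngram_counts(candidate.split(), n)
    total = sum(ref.values())
    if total == 0:
        return 0.0
    overlap = sum((ref & cand).values())  # clipped n-gram matches
    return overlap / total


reference = "the cat sat"
candidate = "the cat ran"
print(f"TTR of 'a b a c': {type_token_ratio('a b a c'):.2f}")
print(f"ROUGE-1 recall: {rouge_n_recall(reference, candidate, 1):.2f}")
print(f"ROUGE-2 recall: {rouge_n_recall(reference, candidate, 2):.2f}")
```

In this reading, a high ROUGE score between original and augmented text signals heavy overlap, whereas TTR is computed on a single text and reflects its lexical variety.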

To assess the degree to which the augmented text maintains important details while adding variation to the data, both measures are used. Fig. 8 shows that, according to the TTR and ROUGE metrics, diversity improves after augmentation for some DA techniques, such as WordAntonym and Backtranslation. However, other DA techniques (WordNet, TF-IDF, and Wordemd_base) decrease the dataset’s diversity, because ROUGE measures diversity at the level of word sequences. TTR seems more representative of diversity when focusing on individual words, unlike the ROUGE metrics, which capture diversity at the n-gram level.

Diversity Scores for Augmentation Techniques.

A further data quality perspective is the ability of DA techniques to preserve the original label of the generated data. To assess it, we introduce a method for relating the generated data to the original training data. Each DA technique assigns the generated text the label of its original input text. First, we train a baseline classifier (RF) on the labeled original data. Then, we use the trained RF model to classify the newly generated tweets (as test data), assigning each generated text a predicted label. Finally, we compare the labels carried over from the original tweets (truth labels) with the labels predicted by the baseline classifier (predicted labels) to measure the ability of the DA technique to preserve the label. For example, of all the text generated by the WordAntonym technique for the real class, the RF baseline classifier classifies 95% as real and 5% as fake. The WordAntonym technique therefore preserves the label of 95% of its generated text.
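The label-preservation check reduces to measuring how often a baseline classifier agrees with the label that each generated tweet carries over from its original. The sketch below is a hedged illustration: `label_preservation_rate` is a hypothetical helper, and `toy_predict` is a keyword rule standing in for the trained RF baseline.

```python
def label_preservation_rate(generated, predict):
    """Fraction of generated texts whose predicted label matches the carried-over label.

    `generated` is a list of (text, carried_over_label) pairs and `predict`
    is any callable mapping a text to a label (a stand-in for the baseline).
    """
    if not generated:
        return 0.0
    matches = sum(1 for text, label in generated if predict(text) == label)
    return matches / len(generated)


def toy_predict(text):
    # Placeholder rule, not a trained model: flags one keyword as fake.
    return "fake" if "miracle" in text else "real"


generated = [
    ("miracle cure found", "fake"),
    ("ministry issues guidance", "real"),
    ("miracle herb heals", "fake"),
    ("new clinic opens", "fake"),  # the classifier will disagree here
]
rate = label_preservation_rate(generated, toy_predict)
print(f"label preservation: {rate:.0%}")
```

Computing the rate separately per class, as in the WordAntonym example above, only requires restricting `generated` to the tweets of that class.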

As shown in Fig. 9 for the real class label, the tweets generated by WordAntonym and WordNet have the highest precision, 95% and 93%, respectively. In terms of recall, however, Backtranslate surpasses the other DA techniques with 91%. Figure 10 shows the results for the fake class label. The tweets generated by WordAntonym and WordNet have recalls of 96% and 94%, respectively, and F1-scores of 94% and 93%. In terms of precision, however, Backtranslate and WordAntonym surpass the other DA techniques, with 92% and 92.1%, respectively. Therefore, we recommend the three techniques WordAntonym, WordNet, and Backtranslate for augmentation when preserving the label is the data quality measure of interest.

Comparison of DA techniques’ ability to preserve real class label.

Comparison of DA techniques’ ability to preserve fake class label.

Experiment D: performance of text classification using ensemble augmentation-based techniques

Machine learning models can be made more robust by employing various augmentation strategies that produce a range of data variations, thereby improving their ability to generalize to unseen data43. This strategy has a drawback, however: the augmented dataset may become too noisy, making it more difficult for the models to learn. An effective way to manage this variability is to combine several augmentation strategies. Even so, controlling the diversity of the augmented data remains crucial. It is therefore essential to determine the ideal ratio of noise to diversity in the augmented dataset. Achieving this balance requires a careful assessment of the model’s performance on these datasets and rigorous experiments with different combinations of augmentation approaches.

The results of Experiment A are compared with those of Experiment D. This comparison determines how effective combining multiple data augmentation (DA) techniques is at enhancing model precision and resilience, and it helps clarify how each method affects the augmentation process and the model’s overall performance. After duplicates are removed, the ensemble approach combines the tweets generated by the individual techniques so that the combined total equals 1203, the same number of tweets added to the original training data in Experiment A. We compare the performance of models trained on datasets created by various combinations of augmentation techniques, including Synonym-WordNet, GPT-Synonym, and GPT-Synonym-WordNet, as shown in Tables 15 and 16.
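The merge-and-deduplicate step can be sketched as follows. How tweets are selected to reach the fixed total is not specified in this section, so uniform random sampling is used here purely as an assumption; `build_ensemble` is a hypothetical helper name.

```python
import random


def build_ensemble(pools, target, seed=0):
    """Merge pools of (text, label) pairs, drop duplicate texts, cap at `target`.

    Assumption: when more unique tweets exist than needed, `target` of them
    are drawn uniformly at random.
    """
    seen = set()
    merged = []
    for pool in pools:
        for text, label in pool:
            if text not in seen:
                seen.add(text)
                merged.append((text, label))
    if len(merged) <= target:
        return merged
    return random.Random(seed).sample(merged, target)


pool_a = [("a", "real"), ("b", "fake")]
pool_b = [("b", "fake"), ("c", "real"), ("d", "fake")]

combined = build_ensemble([pool_a, pool_b], target=3)
print(f"{len(combined)} unique tweets selected")
```

In the setting described above, `target` would be 1203 so that the ensemble adds the same number of tweets as each single technique did in Experiment A.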

Both the RF and AraBERT models achieve their highest accuracy with the GPT-2 antonym technique. The Antonym-WordNet strategy greatly enhances GPT’s performance but remains less effective than the GPT-Antonym strategy in both models. Generally, AraBERT outperforms RF, especially with the WordAntonym-WordNet and GPT-Antonym combinations. AraBERT achieves a maximum accuracy of 91%, while RF achieves 90.2%. Comparing the best accuracies from Experiment A (Tables 6 and 7) with those from Experiment D (Tables 15 and 16), RF’s accuracy decreases by 2.25% (92.45% vs. 90.2%), while AraBERT’s decreases by 4.81% (95.81% vs. 91.0%).

We can conclude that ensemble augmentation decreased model performance in these experiments, even though the increased diversity it provides can improve generalization. Combining different augmentation techniques can produce excessive noise, leading to overfitting or misclassification. Therefore, carefully selecting and tuning augmentation strategies is crucial to maximize model performance without compromising data quality.

Comparison between our proposed approach and the previous approaches

The results of our study are presented in Table 17 along with those of earlier methods19,69 that used the same dataset. The performance of RF and AraBERT, when combined with our suggested augmentation techniques, is significantly improved over the earlier methods. Alsudias and Rayson69 introduced a dataset of Arabic fake news and applied traditional ML models, such as SVM and LR, for classification; they did not use any text augmentation before the classification task. Mohamed et al.19 did not examine the effect of different DA techniques; they applied only the back-translation DA technique. Unlike our work, they did not consider label preservation, semantic similarity, or novelty. Furthermore, the related work section highlights the distinction between our approach and these state-of-the-art methods, as shown in Table 2.
