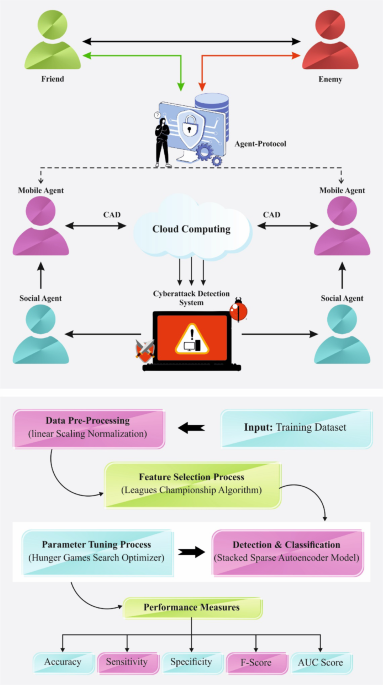

In this work, a CLAFS-ODLCD model for securing the digital ecosystem is proposed. The CLAFS-ODLCD technique focuses on the recognition and classification of cyberattacks in the IoT infrastructure. To achieve this, the technique comprises four sub-processes: LSN-based data normalization, CLA-based feature subset selection, SSAE-based classification, and HGS-based hyperparameter tuning. Figure 1 illustrates the workflow of the CLAFS-ODLCD technique.

Figure 1. Workflow of the CLAFS-ODLCD method.

Data normalization

Initially, the CLAFS-ODLCD technique applies the LSN approach for data pre-processing. LSN is a data normalization method used in diverse domains, including ML and statistics, to scale numerical values into a particular range. Unlike normalization techniques that center the data about the mean or median, LSN linearly scales the input data to a predetermined interval such as [0, 1] or [−1, 1]. This procedure preserves the original relationships between data points while ensuring that the values lie on a consistent and interpretable scale. LSN is mainly beneficial when the absolute magnitude of the data is not important but the relative differences must be maintained, which supports more stable and efficient analyses in numerous applications.
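The linear scaling described above can be sketched as a min-max mapping. This is a minimal illustration, not the authors' implementation: the function name `linear_scale` and the handling of a constant feature are assumptions made for the example.

```python
from typing import List, Sequence


def linear_scale(values: Sequence[float], lo: float = 0.0, hi: float = 1.0) -> List[float]:
    """Linearly map `values` onto the interval [lo, hi] (min-max scaling)."""
    v_min, v_max = min(values), max(values)
    if v_max == v_min:
        # Assumption: a constant feature carries no spread, so map it to `lo`.
        return [lo for _ in values]
    span = v_max - v_min
    return [lo + (v - v_min) * (hi - lo) / span for v in values]
```

For instance, `linear_scale([2.0, 4.0, 6.0])` maps the smallest value to 0 and the largest to 1, while `linear_scale([2.0, 4.0, 6.0], -1.0, 1.0)` targets the interval [−1, 1]. The ordering and relative spacing of the values are unchanged.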

Feature selection using CLA

The CLAFS-ODLCD technique uses the CLAFS technique to choose an optimal subset of features. The CLA method, a metaheuristic for solving continuous optimization problems, was introduced by Kashan [29]. Each team (individual) in the swarm of \(L\) teams (the league) represents a feasible solution to the problem, with \(n\) players equal to the number of variables. After an artificial weekly league schedule is constructed, team \(i\) is assigned a playing strength corresponding to its fitness value. Based on this schedule, the teams play in pairs for \(S\times \left(L-1\right)\) weeks, where \(t\) denotes the week and \(S\) indicates the number of seasons. The playing outcomes define who wins and who loses. Based on the outcomes of the prior week, every team devises a new formation to prepare for the upcoming match. Under this team-formation procedure, the current best formation of a team is replaced whenever a new formation with better playing strength is found.

League Schedule’s generation

The initial phase prepares the schedule of games for all seasons. Under a round-robin schedule, each team plays every other team exactly once per season. A season therefore contains \(L\left(L-1\right)/2\) matches, and \(L\) must be an even integer. The competition then continues for \(S\) seasons. Here, the CLA constructs an 8-team \((L=8)\) sports league.
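A single-season round-robin schedule of this kind can be generated with the classic circle method, sketched below. The source does not state which scheduling procedure is used, so the circle method and the function name `round_robin` are assumptions for illustration.

```python
from typing import List, Tuple


def round_robin(num_teams: int) -> List[List[Tuple[int, int]]]:
    """Return one season: L-1 weeks, each holding L/2 matches (circle method)."""
    if num_teams < 2 or num_teams % 2 != 0:
        raise ValueError("the number of teams L must be an even integer >= 2")
    teams = list(range(num_teams))
    weeks = []
    for _ in range(num_teams - 1):
        # Pair the first slot with the last, the second with the second-to-last, etc.
        week = [(teams[i], teams[num_teams - 1 - i]) for i in range(num_teams // 2)]
        weeks.append(week)
        # Keep the first team fixed and rotate all other teams by one slot.
        teams = [teams[0], teams[-1]] + teams[1:-1]
    return weeks
```

With \(L=8\), this yields 7 weeks of 4 matches each, which covers all \(8\cdot 7/2=28\) pairings exactly once. Repeating the season \(S\) times gives the full \(S\times (L-1)\)-week schedule.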

Evaluating winner or loser

Winners and losers are decided from the playing strengths. Consider teams \(i\) and \(j\) that meet in week \(t\), with formations \({X}_{i}^{t}=\left({x}_{i1}^{t},{x}_{i2}^{t}, \dots ,{x}_{in}^{t}\right)\) and \({X}_{j}^{t}\) and playing strengths \(f\left({X}_{i}^{t}\right)\) and \(f\left({X}_{j}^{t}\right)\), correspondingly. \({p}_{i}^{t}\) represents the probability that the \({i}\text{th}\) team beats the \({j}\text{th}\) team in the \({t}\text{th}\) week:

$${p}_{i}^{t}=\frac{f({X}_{j}^{t})-\widehat{f}}{f\left({X}_{j}^{t}\right)+f\left({X}_{i}^{t}\right)-2\widehat{f}}$$

(1)

In Eq. (1), \(\widehat{f}\) denotes the playing strength of the global best team formation. The probability that the \({j}\text{th}\) team beats the \({i}\text{th}\) team is defined correspondingly, and the match outcome is decided by drawing a random number in \([0,1)\). If the number is less than or equal to \({p}_{i}^{t}\), team \(i\) wins and team \(j\) loses; if it is greater than \({p}_{i}^{t}\), team \(i\) loses and team \(j\) wins.
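The win/loss rule of Eq. (1) can be sketched as follows. This is an illustrative reading, not the authors' code: the function names, the injectable random source `rng`, and the fallback probability of 0.5 when both teams already sit at \(\widehat{f}\) are assumptions.

```python
import random
from typing import Callable


def win_probability(f_i: float, f_j: float, f_best: float) -> float:
    """Eq. (1): probability that team i beats team j, given the reference value f_best."""
    denom = f_j + f_i - 2.0 * f_best
    if denom == 0.0:
        # Assumption: both teams match f_best, so treat the game as a coin flip.
        return 0.5
    return (f_j - f_best) / denom


def play_match(f_i: float, f_j: float, f_best: float,
               rng: Callable[[], float] = random.random) -> str:
    """Return 'i' if team i wins the match, otherwise 'j'."""
    p_i = win_probability(f_i, f_j, f_best)
    return "i" if rng() <= p_i else "j"
```

Under a minimization reading of the fitness, the team whose strength lies closer to \(\widehat{f}\) receives the larger winning probability; for example, `win_probability(2.0, 4.0, 1.0)` evaluates to 0.75.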

New team formation

Based on the league schedule, let \(l\) denote the team that will play team \(i\) in week \(t+1\), \(j\) the team that played team \(i\) in week \(t\), and \(k\) the team that played team \(l\) in week \(t\). Let \({B}_{k}^{t},{ B}_{j}^{t}\), and \({B}_{i}^{t}=\left({b}_{i1}^{t}, {b}_{i2}^{t}, \dots ,{ b}_{in}^{t}\right)\) be the best team formations of teams \(k, j\), and \(i\) at week \(t\), correspondingly. If team \(k\) beat team \(l\), then to overcome team \(l\), team \(i\) should adopt a playing style similar to the one team \(k\) used in week \(t\), building on the strengths of team \(k\); here \(\left({B}_{k}^{t}-{B}_{i}^{t}\right)\) represents the gap vector between the playing styles of teams \(k\) and \(i\). Conversely, team \(i\) may avoid a playing style similar to that of team \(k\) and concentrate on that team's weaknesses through \(({B}_{i}^{t}-{B}_{k}^{t})\). The gap vectors are scaled by two constant parameters, the approach coefficient \({\psi }_{2}\) and the retreat coefficient \({\psi }_{1}\), to generate a new formation. The approach parameter is used when team \(i\) moves toward the rival, whereas the retreat parameter is used when team \(i\) distances itself from the rival.

This swarm-based technique is used to search for a globally optimal solution. Despite its effectiveness and simplicity, the CLA can easily become trapped in local optima, which reflects an imbalance between local exploitation and global exploration.

In the CLA model, the two objectives are combined into a single objective in which the weights set the importance of each objective [30].

$$Fitness\left(X\right)=\alpha \cdot E\left(X\right)+\beta \cdot \left(1-\frac{\left|R\right|}{\left|N\right|}\right)$$

(2)

In Eq. (2), \(Fitness(X)\) is the fitness value of the subset \(X\); \(\alpha\) and \(\beta\) are the weights of the classifier error rate and the reduction ratio, with \(\alpha \in [0,1]\) and \(\beta =(1-\alpha )\). \(E(X)\) indicates the classifier error rate obtained with the attributes selected in the subset \(X\), and \(|R|\) and \(|N|\) are the number of selected attributes and the number of attributes in the original data, correspondingly.
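Eq. (2) can be evaluated directly once the classifier error and the subset size are known. The sketch below transcribes the formula as written; the function name `subset_fitness` and the default \(\alpha = 0.9\) are hypothetical, since the source does not report the weight it uses.

```python
def subset_fitness(error_rate: float, num_selected: int, num_total: int,
                   alpha: float = 0.9) -> float:
    """Eq. (2): alpha * E(X) + beta * (1 - |R| / |N|), with beta = 1 - alpha."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    if num_total <= 0 or not 0 <= num_selected <= num_total:
        raise ValueError("need 0 <= |R| <= |N| and |N| > 0")
    beta = 1.0 - alpha  # hypothetical default alpha; beta follows from it
    reduction_ratio = 1.0 - num_selected / num_total
    return alpha * error_rate + beta * reduction_ratio
```

As a worked case, an error rate of 0.2 with 5 of 10 attributes selected and \(\alpha = 0.5\) gives \(0.5\cdot 0.2+0.5\cdot 0.5=0.35\).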

Cyberattack detection using SSAE

In this phase, the detection and classification of cyberattacks are performed using the SSAE approach [31]. This model is chosen for its robust capability in learning deep hierarchical feature representations and in detecting subtle and intrinsic attack patterns compared to conventional methods. It also effectively mitigates noise and irrelevant data, thereby enhancing detection accuracy and generalization. The technique uses dropout regularization and early stopping to address overfitting, so that the model does not memorize the training data but generalizes well to unseen samples. Furthermore, class imbalance is handled through techniques such as weighted loss functions or data augmentation, allowing the SSAE to maintain robust performance across minority attack classes, which is significant for reliable intrusion detection in cybersecurity environments. Figure 2 illustrates the architecture of the SSAE.

An autoencoder (AE) is an unsupervised learning method that automatically learns features from the raw data and contains three layers: the input layer, the hidden layer (HL), and the output layer. The encoder consists of the input layer and the HL, and the decoder is made up of the HL and the output layer. The encoder extracts features from the original data.

\(X=[{X}_{1},{ X}_{2}, \cdots ,{ X}_{n}{]}^{T}\) denotes the network input, and \(n\) represents the number of input nodes, which equals the dimension of a data sample. The hidden features \(h\) of the original data \(X\) obtained by the encoder are computed as below:

$$h=f\left(WX+b\right)$$

(3)

where \(f\) specifies the sigmoid activation function; \(W\) and \(b\) denote the weights and biases, correspondingly; \(h\) is the feature vector extracted by the encoder; and \(W\) has size \(s\times n\), where \(s\) represents the number of hidden features.

The decoder reconstructs the original input data, and the reconstructed data \(Y\) is obtained by decoding the hidden features \(h\) as below:

$$Y=U(W^{\prime}h+b^{\prime})$$

(4)

Here, \(Y=[{Y}_{1}, {Y}_{2}, \cdots , {Y}_{n}{]}^{T}\) represents the output data of the network; \(U\) refers to the sigmoid activation function; \(b^{\prime}\) denotes the biases and \(W^{\prime}\) the weights used in the decoding stage, with \(W^{\prime}={W}^{T}\).
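Eqs. (3) and (4) amount to one affine map followed by a sigmoid in each direction, with the decoder reusing the transposed encoder weights. The sketch below uses plain Python lists to stay dependency-free; the helper names (`sigmoid`, `affine`, `encode`, `decode`) are illustrative assumptions, and a real model would use a tensor library.

```python
import math
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def affine(W: Matrix, x: Vector, b: Vector) -> Vector:
    """Compute W x + b for a row-major matrix W."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]


def encode(W: Matrix, b: Vector, x: Vector) -> Vector:
    """Eq. (3): h = f(W x + b), with W of size s x n."""
    return [sigmoid(z) for z in affine(W, x, b)]


def decode(W: Matrix, b_prime: Vector, h: Vector) -> Vector:
    """Eq. (4) with tied weights W' = W^T: Y = U(W^T h + b')."""
    W_t = [list(col) for col in zip(*W)]  # transpose to size n x s
    return [sigmoid(z) for z in affine(W_t, h, b_prime)]
```

With \(s=2\) hidden units and \(n=3\) inputs, `encode` returns a vector of length 2 and `decode` maps it back to length 3, matching the dimensions stated for \(W\).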

The AE employs stochastic gradient descent and backpropagation (BP) to optimize the parameter set \(\theta =\{W,b, W^{\prime}, b^{\prime}\}\) so as to minimize the error between the input and output data. Generally, the mean squared error (MSE) serves as the loss function, as given below:

$${J}_{MSE}(\theta )=\frac{1}{m}\sum\limits_{i=1}^{m}\frac{1}{2}\| {X}^{\left(i\right)}-{Y}^{\left(i\right)}{\| }^{2}$$

(5)

Here, \({X}^{(i)}\) symbolizes the original data of the \(i\)th sample; \({Y}^{(i)}\) refers to the corresponding output data; and \(m\) denotes the total number of training samples.

The SAE is created by adding a sparse penalty term to the AE cost function. The sparse penalty term is defined by the following equations:

$${J}_{sparse}(\theta )=\beta \sum\limits_{j=1}^{s}KL\left(\rho \Vert {\widehat{\rho }}_{j}\right)$$

(6)

$$KL\left(\rho \Vert {\widehat{\rho }}_{j}\right)=\rho \log \frac{\rho }{{\widehat{\rho }}_{j}}+\left(1-\rho \right)\log \frac{1-\rho }{1-{\widehat{\rho }}_{j}}$$

(7)

$${\widehat{\rho }}_{j}=\frac{1}{m}\sum\limits_{i=1}^{m}{a}_{j}\left({X}^{(i)}\right)$$

(8)

In Eq. (6), \(\beta\) denotes the sparse penalty factor that controls the weight of the penalty in the loss function; \({\widehat{\rho }}_{j}\) represents the average activation of the \(j\)th HL unit; \(s\) refers to the size of the HL; and \(\rho\) specifies the sparsity parameter. Equation (7) gives the relative entropy (Kullback-Leibler divergence), which measures the degree of deviation between the two distributions. Equation (8) computes the average activation of the HL, where \({a}_{j}\) designates the activation of the \(j\)th unit of the HL.

$$J\left(\theta \right)={J}_{MSE}\left(\theta \right)+{J}_{sparse}\left(\theta \right)$$

(9)

Equation (9) is the SAE loss function, where the first term denotes the MSE and the second term refers to the sparse penalty.
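The loss of Eqs. (5) to (9) can be computed from a batch of inputs, reconstructions, and hidden activations. The following is a minimal sketch under stated assumptions: natural logarithms are used in the KL term, the function names are illustrative, and the defaults \(\rho = 0.05\) and \(\beta = 3\) are hypothetical values that the source does not report.

```python
import math
from typing import List

Batch = List[List[float]]


def mse_loss(X: Batch, Y: Batch) -> float:
    """Eq. (5): mean over samples of half the squared reconstruction error."""
    m = len(X)
    return sum(0.5 * sum((x - y) ** 2 for x, y in zip(xs, ys))
               for xs, ys in zip(X, Y)) / m


def kl_divergence(rho: float, rho_hat: float) -> float:
    """Eq. (7): KL divergence between Bernoulli(rho) and Bernoulli(rho_hat)."""
    return (rho * math.log(rho / rho_hat)
            + (1.0 - rho) * math.log((1.0 - rho) / (1.0 - rho_hat)))


def sparse_penalty(H: Batch, rho: float, beta: float) -> float:
    """Eqs. (6) and (8): beta times the summed KL term over the s hidden units."""
    m, s = len(H), len(H[0])
    rho_hats = [sum(h[j] for h in H) / m for j in range(s)]  # average activations
    return beta * sum(kl_divergence(rho, r) for r in rho_hats)


def sae_loss(X: Batch, Y: Batch, H: Batch,
             rho: float = 0.05, beta: float = 3.0) -> float:
    """Eq. (9): reconstruction error plus sparse penalty (hypothetical defaults)."""
    return mse_loss(X, Y) + sparse_penalty(H, rho, beta)
```

The hidden activations in `H` must lie strictly between 0 and 1, which holds for sigmoid units; the penalty vanishes when every average activation equals \(\rho\) and grows as the averages move away from it.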

HGS-based hyperparameter tuning

Finally, the HGS optimizer is employed for optimal hyperparameter selection. HGS is a population-based optimizer that solves both constrained and unconstrained problems while preserving its features [32]. The following sub-sections define the steps of the HGS algorithm.

Moving near food

The following mathematical formulation simulates the contraction mode and imitates the subsequent behavior of the individuals.

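$$\overrightarrow{Y(t+1)}=\left\{\begin{array}{ll}\overrightarrow{Y(t)}\cdot \left(1+\mathfrak{R}m\left(1\right)\right), & {\mathfrak{R}}_{1}<k\\ \overrightarrow{{Z}_{1}}\cdot \overrightarrow{{Y}_{a}}+\overrightarrow{S}\cdot \overrightarrow{{Z}_{2}}\cdot \left|\overrightarrow{{Y}_{a}}-\overrightarrow{Y\left(t\right)}\right|, & {\mathfrak{R}}_{1}>k,\ {\mathfrak{R}}_{2}>F\\ \overrightarrow{{Z}_{1}}\cdot \overrightarrow{{Y}_{a}}-\overrightarrow{S}\cdot \overrightarrow{{Z}_{2}}\cdot \left|\overrightarrow{{Y}_{a}}-\overrightarrow{Y\left(t\right)}\right|, & {\mathfrak{R}}_{1}>k,\ {\mathfrak{R}}_{2}<F\end{array}\right.$$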

(10)

where \(\overrightarrow{S}\) takes values in the range \([-b, b]\); \({\mathfrak{R}}_{1}\) and \({\mathfrak{R}}_{2}\) are random numbers in the range \([0,1]\); \(t\) is the current iteration; and \(\mathfrak{R}m(1)\) is a random number drawn from a normal distribution. \(\overrightarrow{{Z}_{1}}\) and \(\overrightarrow{{Z}_{2}}\) are the hunger weights. \(\overrightarrow{Y\left(t\right)}\) denotes the position of each individual, and \(k\) denotes the initial location. \(\overrightarrow{{Y}_{a}}\) denotes the position of a random individual. \(F\) is computed as follows:

$$F=sech\left(\left|E\left(j\right)-Bes{t}_{fitness}\right|\right)$$

(11)

where \(j\in \{1,2,\ldots ,m\}\), \(E\left(j\right)\) denotes the fitness value of the \(j\)th individual, and \(Bes{t}_{fitness}\) represents the best fitness attained in the current iteration process. \(sech\) is the hyperbolic secant function \(\left(sech\left(y\right)=\frac{2}{{e}^{y}+{e}^{-y}}\right)\). \(\overrightarrow{S}\) is calculated as below:

$$\overrightarrow{S}=2\times b\times \mathfrak{R}-b$$

(12)

$$b=2\times \left(1-\frac{t}{\text{ maximum}_{iteration}}\right)$$

(13)

Here, \(\mathfrak{R}\) symbolizes a random number within \([0,1]\), and \(\text{maximum}_{iteration}\) represents the maximum number of iterations.
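The quantities in Eqs. (10) to (13) can be sketched per coordinate as below. This is a hedged illustration rather than the authors' implementation: it assumes the three-case form of Eq. (10) in which the sign of the second term flips when \({\mathfrak{R}}_{2}<F\), it treats \(k\) as a user-supplied parameter, and all function names are hypothetical. Random draws are passed in as arguments so the behavior is reproducible.

```python
import math


def variation_control(fitness_j: float, best_fitness: float) -> float:
    """Eq. (11): F = sech(|E(j) - Best_fitness|)."""
    return 1.0 / math.cosh(abs(fitness_j - best_fitness))


def shrink_bound(t: int, max_iteration: int) -> float:
    """Eq. (13): b decreases linearly from 2 to 0 over the run."""
    return 2.0 * (1.0 - t / max_iteration)


def range_control(b: float, r: float) -> float:
    """Eq. (12): S = 2 * b * R - b, so S lies in [-b, b] for R in [0, 1]."""
    return 2.0 * b * r - b


def update_position(y: float, y_a: float, z1: float, z2: float, s: float,
                    r1: float, r2: float, f: float, k: float, noise: float) -> float:
    """Eq. (10) for one coordinate, under the assumed three-case form.

    `noise` stands for the normally distributed draw Rm(1).
    """
    if r1 < k:
        return y * (1.0 + noise)
    gap = s * z2 * abs(y_a - y)
    # Ties (r2 == F) are not covered by Eq. (10); they fall into the last case here.
    return z1 * y_a + gap if r2 > f else z1 * y_a - gap
```

In a full optimizer, `r1`, `r2`, and the argument of `range_control` would come from `random.random()` and `noise` from `random.gauss(0.0, 1.0)`; early iterations give a wide range for \(\overrightarrow{S}\), which narrows to zero as \(t\) approaches the maximum iteration.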

Hunger role

The hunger characteristics of the searching individuals are modeled mathematically. The formulation for \(\overrightarrow{{Z}_{1}}\) is provided below:

$$\overrightarrow{{Z}_{1}(j)}=\left\{\begin{array}{ll}hungry\left(j\right)\cdot \frac{M}{su{m}_{hungry}}\times {\mathfrak{R}}_{4}, & {\mathfrak{R}}_{3}<k\\ 1, & {\mathfrak{R}}_{3}>k\end{array}\right.$$

(14)

The equation for \(\overrightarrow{{Z}_{2}}\) is as follows:

$$\overrightarrow{{Z}_{2}(j)}=\left(1-\exp \left(-\left|hungry\left(j\right)-su{m}_{hungry}\right|\right)\right)\times {\mathfrak{R}}_{5}\times 2$$

(15)

The hunger of each individual is denoted by \(hungry (j)\), the number of individuals by \(M\), and the sum of the hunger of all individuals by \(su{m}_{hungry}\). \({\mathfrak{R}}_{3}\), \({\mathfrak{R}}_{4}\), and \({\mathfrak{R}}_{5}\) are random numbers between 0 and 1. \(hungry (j)\) is obtained using Eq. (16).

$$hungry\left( j \right) = \left\{ {\begin{array}{*{20}l} {0,} \hfill & {OF\left( j \right) = Bestfitness} \hfill \\ {hungry\left( j \right) + hunger_{{sensation}} ,} \hfill & {OF\left( j \right) \ne Bestfitness} \hfill \\ \end{array} } \right.$$

(16)

\(OF (j)\) stores the fitness of each individual in the current iteration. \(hunge{r}_{sensation}\) is calculated as follows:

$$\begin{aligned} hunger_{threshold} & = \frac{E\left( j \right) - bestfitness}{{worstfitness - bestfitness}} \times {\Re }_{6} \times 2 \\ & \quad \times \left( {upper_{bound} - lower_{bound} } \right) \\ \end{aligned}$$

(17)

$$hunger_{sensation} = \left\{ {\begin{array}{*{20}l} {lower_{bound} \times \left( {1 + {\Re }} \right),} \hfill & {hunger_{threshold} < lower_{bound} } \hfill \\ {hunger_{threshold} ,} \hfill & {hunger_{threshold} \ge lower_{bound} } \hfill \\ \end{array} } \right.$$

(18)

where \({\mathfrak{R}}_{6}\) is a random number between 0 and 1; \(hunge{r}_{threshold}\) is the hunger threshold; \(E(j)\) is the fitness value of each individual; \(wors{t}_{fitness}\) and \(bes{t}_{fitness}\) are the worst and best fitness achieved during the current iteration process; and \(uppe{r}_{bound}\) and \(lowe{r}_{bound}\) are the upper and lower boundaries of the problem. The hunger sensation \(\left(hunge{r}_{sensation}\right)\) is bounded below by \(lowe{r}_{bound}\).
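The hunger update of Eqs. (16) to (18) can be sketched as follows. The sketch assumes that the second case of Eq. (16) applies whenever an individual's fitness differs from the best fitness; the function names and the zero threshold returned when the worst and best fitness coincide are further assumptions made for illustration.

```python
import random
from typing import Callable


def hunger_threshold(fit: float, best: float, worst: float,
                     upper: float, lower: float, r6: float) -> float:
    """Eq. (17): scaled distance of an individual's fitness from the best fitness."""
    if worst == best:
        # Assumption: a population with identical fitness contributes no threshold.
        return 0.0
    return (fit - best) / (worst - best) * r6 * 2.0 * (upper - lower)


def hunger_sensation(threshold: float, lower: float, r: float) -> float:
    """Eq. (18): the sensation is floored at lower_bound * (1 + R)."""
    return lower * (1.0 + r) if threshold < lower else threshold


def update_hunger(hungry: float, fit: float, best: float, worst: float,
                  upper: float, lower: float,
                  rng: Callable[[], float] = random.random) -> float:
    """Eq. (16): reset hunger for the best individual, otherwise accumulate it."""
    if fit == best:
        return 0.0
    threshold = hunger_threshold(fit, best, worst, upper, lower, rng())
    return hungry + hunger_sensation(threshold, lower, rng())
```

An individual at the best fitness has its hunger reset to zero, while every other individual accumulates a sensation that grows with its distance from the best fitness.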

The choice of fitness function is the major factor that affects the performance of the HGS methodology. The hyperparameter selection method includes a solution encoding process to estimate the efficacy of candidate solutions. Here, the HGS technique uses precision as the key criterion for designing the fitness function (FF).

$$Fitness =\text{ max }\left(P\right)$$

(19)

$$P=\frac{TP}{TP+FP}$$

(20)

where \(TP\) and \(FP\) are the numbers of true positives and false positives, correspondingly.
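Eqs. (19) and (20) can be illustrated by scoring candidate hyperparameter settings by precision and keeping the best one. The sketch below is an assumption-laden example: the function names, the candidate labels, and the convention of returning 0 when no positives are predicted are all hypothetical.

```python
from typing import Dict, Tuple


def precision(tp: int, fp: int) -> float:
    """Eq. (20): P = TP / (TP + FP)."""
    if tp + fp == 0:
        # Assumption: with no positive predictions, score the candidate as 0.
        return 0.0
    return tp / (tp + fp)


def best_candidate(counts: Dict[str, Tuple[int, int]]) -> str:
    """Eq. (19): pick the candidate whose (TP, FP) counts maximize precision."""
    return max(counts, key=lambda name: precision(*counts[name]))
```

For example, given `{"a": (6, 4), "b": (8, 2)}`, candidate `"b"` is selected because its precision of 0.8 exceeds the 0.6 of candidate `"a"`.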
