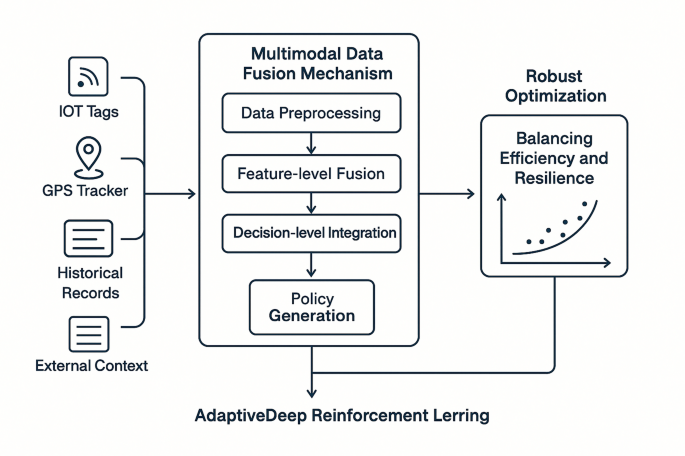

Multimodal data fusion framework

Effective decision-making in global logistics networks requires comprehensive situational awareness derived from diverse data sources spanning physical assets, digital systems, and business operations. This section proposes a multimodal data fusion framework that integrates heterogeneous information streams to construct a holistic representation of logistics network states. The framework addresses the fundamental challenges of temporal misalignment, dimensional heterogeneity, and semantic inconsistency that typically impede effective utilization of multimodal logistics data38.

The proposed fusion architecture employs a hierarchical structure with three processing stages: data preprocessing, feature-level fusion, and decision-level integration. At the preprocessing stage, source-specific techniques normalize and standardize incoming data streams according to their unique characteristics. The feature-level fusion then projects these preprocessed modalities into a common latent representation space using modality-specific encoders followed by cross-modal attention mechanisms. This process can be formalized as:

$$Z = \mathop \sum \limits_a^a \alpha_s \cdot E_a \left( {X_{i} } \right)$$

where \(Z\) represents the fused representation, \(X_{i}\) denotes the input from modality \(i\), \(E_{i}\) is the encoding function for modality \(i\), \(\alpha_{i}\) is the attention weight determining the importance of modality \(i\), and \(M\) is the total number of modalities. The attention weights are dynamically computed based on contextual relevance and data quality metrics to ensure adaptive fusion39.

Temporal alignment of asynchronous data streams presents a significant challenge in logistics contexts where sensor updates, transaction records, and external data sources operate on different timescales. The framework addresses this through a multi-rate fusion approach where high-frequency data streams are aggregated into aligned time windows, while low-frequency data undergoes temporal interpolation. The temporal consistency objective is formulated as:

$$L_{temporal} = \mathop \sum \limits_{t = 1}^{T} \mathop \sum \limits_{i = 1}^{M} \mathop \sum \limits_{j = 1}^{M} d\left( {f_{i} \left( {X_{i}^{t} } \right),f_{j} \left( {X_{j}^{t} } \right)} \right)$$

where \(d\left( { \cdot , \cdot } \right)\) represents a distance metric between aligned features, \(f_{i}\) and \(f_{j}\) are feature extraction functions for modalities \(i\) and \(j\), and \(X_{i}^{t}\) and \(X_{j}^{t}\) represent data from these modalities at time step \(t\).

To account for uncertainty in the integrated data, the framework incorporates evidential deep learning principles that estimate aleatoric and epistemic uncertainty for each modality. The uncertainty-aware fusion mechanism weights each modality’s contribution according to its reliability, with the evidence accumulation process formulated as:

$${\mathbf{e}} = \mathop \sum \limits_{i = 1}^{M} w_{i} \cdot {\mathbf{e}}_{i} ,\;w_{i} = \frac{{{\text{exp}}\left( { – u_{i} } \right)}}{{\mathop \sum \nolimits_{j = 1}^{M} {\text{exp}}\left( { – u_{j} } \right)}}$$

where \({\mathbf{e}}\) represents the accumulated evidence vector, \({\mathbf{e}}_{i}\) is the evidence from modality \(i\), \(w_{i}\) is the weight assigned to modality \(i\), and \(u_{i}\) is the uncertainty measure for modality \(i\). This approach enables robust decision-making even when certain data sources are unreliable or temporarily unavailable40.

The diverse characteristics of data sources integrated within the proposed framework are summarized in Table 1, highlighting the distinct properties that necessitate specialized fusion approaches. Efficient implementation of the fusion framework leverages parallel computing architectures that distribute preprocessing tasks across edge devices while centralizing cross-modal integration operations on cloud infrastructure. This hybrid approach balances computational efficiency with fusion accuracy, enabling real-time situation awareness across global logistics networks41.

Adaptive deep reinforcement learning model design

Building upon the multimodal data fusion framework, we design an adaptive deep reinforcement learning model that dynamically optimizes logistics scheduling decisions by learning from continuous interactions with the network environment. The proposed architecture employs a modified Soft Actor-Critic (SAC) framework enhanced with multimodal input processing capabilities and adaptive hyperparameter tuning mechanisms to accommodate the dynamic nature of global logistics operations42.

State space design

The state space is constructed to comprehensively represent the logistics network condition using the fused multimodal data. Each state \(s_{t}\) at time step \(t\) is formulated as a heterogeneous tensor:

$$s_{t} = \left\{ {X_{numerical}^{t} ,X_{categorical}^{t} ,X_{spatial}^{t} ,X_{temporal}^{t} ,X_{textual}^{t} } \right\}$$

where each component represents a different data modality processed through specialized encoding mechanisms. The numerical features include quantitative logistics metrics such as inventory levels, transit times, and capacity utilization rates. Categorical features encode discrete elements like transportation modes and facility types. Spatial features capture geographical relationships using graph-based representations of the logistics network. Temporal features incorporate historical patterns and predictions, while textual features encode semantic information from business documents and communications43. This multimodal state representation enables the model to capture complex interdependencies across different aspects of logistics operations.

Action space definition

The action space is designed to encompass the full spectrum of logistics scheduling decisions while maintaining computational tractability. For a global logistics network with \(N\) nodes and \(M\) transportation options, the action space \(A\) is defined as:

$$A = \left\{ {a_{ij}^{k} |i,j \in \left\{ {1,2, \ldots ,N} \right\},k \in \left\{ {1,2, \ldots ,M} \right\}} \right\}$$

where \(a_{ij}^{k}\) represents the decision to allocate transportation resource \(k\) for moving goods from node \(i\) to node \(j\). To address the combinatorial explosion in large-scale networks, we implement a hierarchical action decomposition approach that separates strategic routing decisions from tactical resource allocation. This hierarchical structure reduces the effective action space dimensionality while preserving decision flexibility, enabling efficient exploration during training.

Reward function design

The reward function incorporates multiple objectives relevant to logistics performance, formulated as a weighted combination of key performance indicators:

$$R\left( {s_{t} ,a_{t} ,s_{t + 1} } \right) = w_{1} \cdot R_{cost} \left( {s_{t} ,a_{t} } \right) + w_{2} \cdot R_{time} \left( {s_{t} ,a_{t} } \right) + w_{3} \cdot R_{reliability} \left( {s_{t} ,a_{t} } \right) + w_{4} \cdot R_{sustainability} \left( {s_{t} ,a_{t} } \right)$$

where \(w_{i}\) are adaptive weights that adjust based on current logistics priorities and constraints. The cost component \(R_{cost}\) evaluates transportation and operational expenses, while \(R_{time}\) measures delivery performance relative to promised time windows. The reliability component \(R_{reliability}\) assesses consistency and predictability, and \(R_{sustainability}\) quantifies environmental impact metrics such as carbon emissions and resource consumption44. The weights are dynamically adjusted through a meta-learning process that optimizes the balance between competing objectives based on evolving business requirements and network conditions.

Neural network architecture

The model employs a multimodal transformer-based architecture with modality-specific encoders and cross-modal attention mechanisms. The actor network parametrizes a stochastic policy \(\pi_{\theta } \left( {a|s} \right)\) that outputs both action means and variances to enable exploration, while the critic network estimates the Q-value function \(Q_{\phi } \left( {s,a} \right)\) to evaluate action quality. The policy improvement step follows the soft policy iteration approach:

$$\nabla_{\theta } J\left( \theta \right) = {\mathbb{E}}_{{s_{t} \sim {\mathcal{D}}}} \left[ {\nabla_{\theta } {\text{log}}\pi_{\theta } \left( {a_{t} |s_{t} } \right) \cdot \left( {Q_{\phi } \left( {s_{t} ,a_{t} } \right) – b\left( {s_{t} } \right)} \right)} \right]$$

where \({\mathcal{D}}\) represents the experience replay buffer and \(b\left( {s_{t} } \right)\) is a state-dependent baseline that reduces variance. To address the non-stationarity of logistics environments, we implement a distributional critic that models the full distribution of returns rather than just expectations45:

$$Z_{\phi } \left( {s,a} \right) = \{ z_{i} ,p_{i} \}_{i = 1}^{N}$$

where \(Z_{\phi } \left( {s,a} \right)\) represents the return distribution with atoms \(z_{i}\) and corresponding probabilities \(p_{i}\). This distributional approach enhances robustness to uncertainty and improves training stability in the volatile context of global logistics operations46.

The model parameters are configured according to extensive experimental validation across diverse logistics scenarios, as summarized in Table 2. These parameter settings balance learning efficiency with model expressiveness, enabling effective adaptation to the complex dynamics of global logistics networks.

Scheduling policy generation for global logistics networks

The trained multimodal deep reinforcement learning model provides the foundation for generating adaptive scheduling policies that dynamically respond to evolving logistics conditions. This section presents the algorithmic framework for transforming model outputs into executable scheduling decisions across resource allocation, route planning, and task scheduling dimensions. The policy generation process employs a hierarchical decision-making approach that decomposes complex global logistics decisions into a sequence of interconnected sub-decisions to maintain computational tractability while preserving solution quality47.

At each decision epoch \(t\), the policy extraction algorithm samples actions from the learned stochastic policy distribution with temperature-controlled exploration:

$$a_{t} = {\text{arg}}\mathop {{\text{max}}}\limits_{a \in A} \mathop \sum \limits_{i = 1}^{K} \pi_{\theta } \left( {a_{i} |s_{t} } \right) \cdot Q_{\phi } \left( {s_{t} ,a_{i} } \right) \cdot \tau^{ – 1}$$

where \(\pi_{\theta }\) represents the trained policy network, \(Q_{\phi }\) is the critic value function, \(K\) is the number of candidate actions sampled from the policy distribution, and \(\tau\) is the temperature parameter that controls the trade-off between exploration and exploitation. During operational deployment, \(\tau\) is dynamically adjusted based on uncertainty estimates derived from the distributional critic, enabling more exploratory behavior in novel situations while favoring exploitation in familiar contexts.

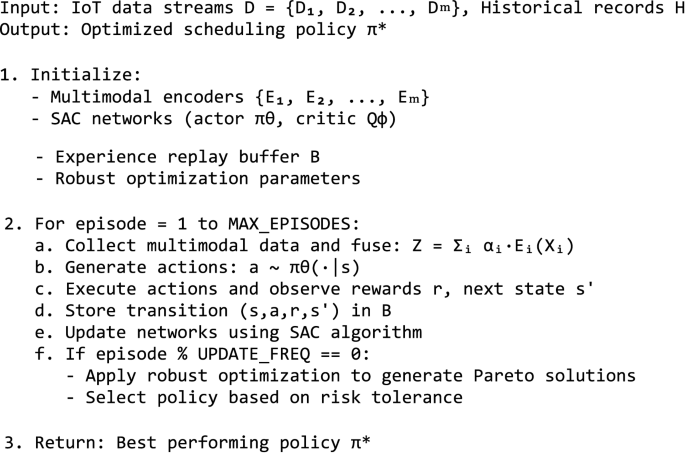

Algorithm 1 summarizes the complete training and deployment procedure for the proposed multimodal deep reinforcement learning approach.

Multimodal DRL Training and Deployment.

The resource allocation component of the scheduling policy maps transportation assets (vehicles, containers, storage facilities) to logistics tasks based on spatiotemporal demand patterns. For a set of \(n\) tasks requiring completion and \(m\) available resources, the allocation matrix \(X\) is derived from the model output using a deterministic rounding procedure:

$$X_{ij} = {\mathcal{R}}\left( {\frac{{{\text{exp}}\left( {\pi_{\theta } \left( {a_{ij} |s_{t} } \right)/\tau } \right)}}{{\mathop \sum \nolimits_{{j{^{\prime}} = 1}}^{m} {\text{exp}}\left( {\pi_{\theta } \left( {a_{{ij{^{\prime}}}} |s_{t} } \right)/\tau } \right)}}} \right)$$

where \(X_{ij}\) represents the allocation of resource \(j\) to task \(i\), and \({\mathcal{R}}\) is a rounding operator that ensures feasibility constraints are satisfied while minimizing deviation from the optimal continuous solution. This approach preserves the stochastic nature of the policy during training while providing deterministic execution plans during deployment.

Route planning decisions are generated using a hierarchical process that first determines high-level corridors between major logistics hubs based on model outputs, then refines specific paths using constrained shortest-path algorithms augmented with learned heuristics. This multi-level approach bridges the gap between the abstract action representations used during training and the detailed execution plans required for operational implementation. The dynamic routing adjustments respond to real-time conditions including traffic congestion, weather events, and port delays, leveraging the model’s ability to integrate multimodal sensory inputs48.

Task scheduling policies determine the sequencing and timing of logistics operations, balancing throughput maximization with due date adherence and resource utilization. The scheduling algorithm transforms model outputs into priority values for each pending task:

$$p_{i} = \beta_{1} \cdot \hat{v}_{i} + \beta_{2} \cdot \hat{u}_{i} + \beta_{3} \cdot \hat{d}_{i}$$

where \(p_{i}\) is the priority of task \(i\), \(\hat{v}_{i}\) is the normalized value (importance) of the task, \(\hat{u}_{i}\) is the normalized urgency factor (deadline proximity), \(\hat{d}_{i}\) is the normalized disruption potential, and \(\beta_{1} ,\beta_{2} ,\beta_{3}\) are contextually adaptive weights derived from the policy network. Tasks are then scheduled according to these priorities while respecting precedence constraints and resource availability.

The integrated policy generation framework incorporates feedback mechanisms that evaluate execution outcomes and trigger policy refinements when performance deviates from expectations. This closed-loop approach enables continuous adaptation to changing logistics conditions without requiring complete retraining of the underlying model49. Implementation in production environments leverages distributed computing architectures, with strategic decisions computed on central servers while tactical adjustments are delegated to edge devices positioned throughout the logistics network. This hierarchical execution strategy balances computational requirements with the need for responsive decision-making in time-sensitive logistics operations.

link