LLMs augmented hierarchical reinforcement learning with action primitives for long-horizon manipulation tasks

Yun, W. J., Mohaisen, D., Jung, S., Kim, J.-K. & Kim, J. Hierarchical reinforcement learning using Gaussian random trajectory generation in autonomous furniture assembly. In Proceedings of the 31st ACM International Conference on Information & Knowledge Management, 3624–3633 (2022).

Levine, S., Finn, C., Darrell, T. & Abbeel, P. End-to-end training of deep visuomotor policies. J. Mach. Learn. Res. 17, 1334–1373 (2016).

Schulman, J., Moritz, P., Levine, S., Jordan, M. & Abbeel, P. High-dimensional continuous control using generalized advantage estimation. arXiv preprint arXiv:1506.02438 (2015).

Jiang, Y., Gu, S. S., Murphy, K. P. & Finn, C. Language as an abstraction for hierarchical deep reinforcement learning. Adv. Neural Inf. Process. Syst. 32 (2019).

Nasiriany, S., Liu, H. & Zhu, Y. Augmenting reinforcement learning with behavior primitives for diverse manipulation tasks. In 2022 International Conference on Robotics and Automation (ICRA), 7477–7484 (IEEE, 2022).

Bacon, P.-L., Harb, J. & Precup, D. The option-critic architecture. In Proceedings of the AAAI Conference on Artificial Intelligence, vol. 31 (2017).

Eysenbach, B., Salakhutdinov, R. R. & Levine, S. Search on the replay buffer: Bridging planning and reinforcement learning. Adv. Neural Inf. Process. Syst. 32 (2019).

Nachum, O., Gu, S. S., Lee, H. & Levine, S. Data-efficient hierarchical reinforcement learning. Adv. Neural Inf. Process. Syst. 31 (2018).

Co-Reyes, J. et al. Self-consistent trajectory autoencoder: Hierarchical reinforcement learning with trajectory embeddings. In International Conference on Machine Learning, 1009–1018 (PMLR, 2018).

Andrychowicz, O. M. et al. Learning dexterous in-hand manipulation. Int. J. Robotics Res. 39, 3–20 (2020).

Kalashnikov, D. et al. QT-Opt: Scalable deep reinforcement learning for vision-based robotic manipulation. arXiv preprint arXiv:1806.10293 (2018).

Dalal, M., Pathak, D. & Salakhutdinov, R. R. Accelerating robotic reinforcement learning via parameterized action primitives. Adv. Neural Inf. Process. Syst. 34, 21847–21859 (2021).

Wang, H., Zhang, H., Li, L., Kan, Z. & Song, Y. Task-driven reinforcement learning with action primitives for long-horizon manipulation skills. IEEE Trans. Cybern. (2023).

Huang, W. et al. Inner monologue: Embodied reasoning through planning with language models. arXiv preprint arXiv:2207.05608 (2022).

Frans, K., Ho, J., Chen, X., Abbeel, P. & Schulman, J. Meta learning shared hierarchies. arXiv preprint arXiv:1710.09767 (2017).

Li, A. C., Florensa, C., Clavera, I. & Abbeel, P. Sub-policy adaptation for hierarchical reinforcement learning. arXiv preprint arXiv:1906.05862 (2019).

Allshire, A. et al. LASER: Learning a latent action space for efficient reinforcement learning. In 2021 IEEE International Conference on Robotics and Automation (ICRA), 6650–6656 (IEEE, 2021).

Hausman, K., Springenberg, J. T., Wang, Z., Heess, N. & Riedmiller, M. Learning an embedding space for transferable robot skills. In International Conference on Learning Representations (2018).

Sharma, A., Gu, S., Levine, S., Kumar, V. & Hausman, K. Dynamics-aware unsupervised discovery of skills. arXiv preprint arXiv:1907.01657 (2019).

Xie, K., Bharadhwaj, H., Hafner, D., Garg, A. & Shkurti, F. Latent skill planning for exploration and transfer. arXiv preprint arXiv:2011.13897 (2020).

Lynch, C. et al. Learning latent plans from play. In Conference on Robot Learning, 1113–1132 (PMLR, 2020).

Shankar, T., Tulsiani, S., Pinto, L. & Gupta, A. Discovering motor programs by recomposing demonstrations. In International Conference on Learning Representations (2019).

Brown, T. et al. Language models are few-shot learners. Adv. Neural Inf. Process. Syst. 33, 1877–1901 (2020).

Huang, W., Abbeel, P., Pathak, D. & Mordatch, I. Language models as zero-shot planners: Extracting actionable knowledge for embodied agents. In International Conference on Machine Learning, 9118–9147 (PMLR, 2022).

Ahn, M. et al. Do as I can, not as I say: Grounding language in robotic affordances. arXiv preprint arXiv:2204.01691 (2022).

Suglia, A., Gao, Q., Thomason, J., Thattai, G. & Sukhatme, G. Embodied BERT: A transformer model for embodied, language-guided visual task completion. arXiv preprint arXiv:2108.04927 (2021).

Pashevich, A., Schmid, C. & Sun, C. Episodic transformer for vision-and-language navigation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 15942–15952 (2021).

Sharma, P., Torralba, A. & Andreas, J. Skill induction and planning with latent language. arXiv preprint arXiv:2110.01517 (2021).

Li, S. et al. Pre-trained language models for interactive decision-making. Adv. Neural Inf. Process. Syst. 35, 31199–31212 (2022).

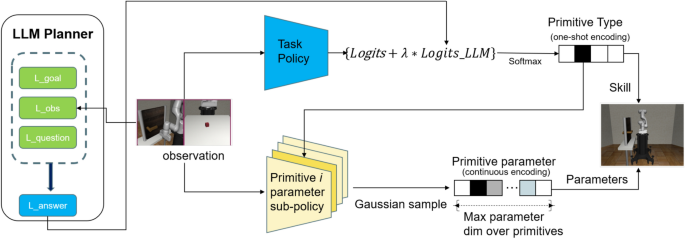

Prakash, B., Oates, T. & Mohsenin, T. LLM augmented hierarchical agents. arXiv preprint arXiv:2311.05596 (2023).

Bohg, J., Morales, A., Asfour, T. & Kragic, D. Data-driven grasp synthesis: a survey. IEEE Trans. Robot. 30, 289–309 (2013).

Ijspeert, A. J., Nakanishi, J., Hoffmann, H., Pastor, P. & Schaal, S. Dynamical movement primitives: learning attractor models for motor behaviors. Neural Comput. 25, 328–373 (2013).

Karaman, S. & Frazzoli, E. Sampling-based algorithms for optimal motion planning. Int. J. Rob. Res. 30, 846–894 (2011).

Fu, H., Yu, S., Tiwari, S., Littman, M. & Konidaris, G. Meta-learning parameterized skills. arXiv preprint arXiv:2206.03597 (2022).

Bousmalis, K. et al. RoboCat: A self-improving generalist agent for robotic manipulation. Trans. Mach. Learn. Res. arXiv:2306.11706 (2023).

Vemprala, S., Bonatti, R., Bucker, A. & Kapoor, A. ChatGPT for robotics: Design principles and model abilities. Microsoft Auton. Syst. Robot. Res. 2, 20 (2023).

Mu, Y. et al. EmbodiedGPT: Vision-language pre-training via embodied chain of thought. Adv. Neural Inf. Process. Syst. 36 (2024).

Liu, H. et al. Enhancing the LLM-based robot manipulation through human-robot collaboration. IEEE Robotics Autom. Lett. (2024).

Kim, Y. et al. A survey on integration of large language models with intelligent robots. Intell. Serv. Robotics 17, 1091–1107 (2024).

Brohan, A. et al. RT-1: Robotics transformer for real-world control at scale. arXiv preprint arXiv:2212.06817 (2022).

Brohan, A. et al. RT-2: Vision-language-action models transfer web knowledge to robotic control. arXiv preprint arXiv:2307.15818 (2023).

Driess, D. et al. PaLM-E: An embodied multimodal language model. arXiv preprint arXiv:2303.03378 (2023).

Mees, O. et al. Octo: An open-source generalist robot policy. In First Workshop on Vision-Language Models for Navigation and Manipulation at ICRA 2024 (2024).

Du, Y. et al. Guiding pretraining in reinforcement learning with large language models. arXiv preprint arXiv:2302.06692 (2023).

Dalal, M., Chiruvolu, T., Chaplot, D. & Salakhutdinov, R. Plan-seq-learn: Language model guided RL for solving long horizon robotics tasks. arXiv preprint arXiv:2405.01534 (2024).

Song, S. et al. Playing FPS games with environment-aware hierarchical reinforcement learning. In IJCAI, 3475–3482 (2019).

Wang, R., Zhao, D., Gupte, A. & Min, B.-C. Initial task allocation in multi-human multi-robot teams: An attention-enhanced hierarchical reinforcement learning approach. IEEE Robotics Autom. Lett. (2024).

Gao, X., Liu, J., Wan, B. & An, L. Hierarchical reinforcement learning from demonstration via reachability-based reward shaping. Neural Process. Lett. 56, 184 (2024).

Yang, X. et al. Hierarchical reinforcement learning with universal policies for multistep robotic manipulation. IEEE Trans. Neural Netw. Learn. Syst. 33, 4727–4741 (2021).

Zhang, D. et al. Explainable hierarchical imitation learning for robotic drink pouring. IEEE Trans. Autom. Sci. Eng. 19, 3871–3887 (2021).

Zhang, H., Wang, H., Qian, T. & Kan, Z. Temporal logic guided affordance learning for generalizable dexterous manipulation. In 2024 7th International Symposium on Autonomous Systems (ISAS), 1–7 (IEEE, 2024).

Zhu, Y. et al. robosuite: A modular simulation framework and benchmark for robot learning. arXiv preprint arXiv:2009.12293 (2020).

Haarnoja, T., Zhou, A., Abbeel, P. & Levine, S. Soft actor-critic: Off-policy maximum entropy deep reinforcement learning with a stochastic actor. In International Conference on Machine Learning, 1861–1870 (PMLR, 2018).

Masson, W., Ranchod, P. & Konidaris, G. Reinforcement learning with parameterized actions. In Proceedings of the AAAI Conference on Artificial Intelligence, vol. 30 (2016).

Achiam, J. et al. GPT-4 technical report. arXiv preprint arXiv:2303.08774 (2023).

Duan, J. et al. Distributional soft actor-critic: Off-policy reinforcement learning for addressing value estimation errors. IEEE Trans. Neural Netw. Learn. Syst. 33, 6584–6598 (2021).

Mehta, S. A., Habibian, S. & Losey, D. P. Waypoint-based reinforcement learning for robot manipulation tasks. In 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 541–548 (IEEE, 2024).

Levenshtein, V. I. Binary codes capable of correcting deletions, insertions, and reversals. Soviet Physics Doklady 10, 707–710 (1966).
