Optimized machine learning based comparative analysis of predictive models for classification of kidney tumors

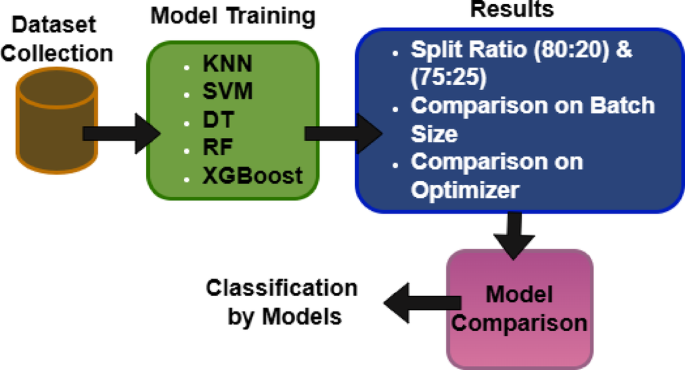

This section presents an ablation study that analyzes the impact of different splitting ratios and compares model performance across different optimizers and batch sizes.

Ablation study based on splitting ratio

This subsection examines the effect of varying dataset splitting ratios, specifically 80:20 and 75:25, on model performance.
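The two splitting ratios can be illustrated with a minimal sketch. It assumes a simple shuffled hold-out split; the sample count, the seed, and the helper name `split_indices` are hypothetical and are not taken from the study, which does not state how the split was implemented.

```python
import random


def split_indices(n_samples, test_fraction, seed=0):
    """Shuffle sample indices and split them into (train, test) lists.

    Hypothetical helper for illustration only.
    """
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be strictly between 0 and 1")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # seeded, reproducible shuffle
    n_test = round(n_samples * test_fraction)
    return indices[n_test:], indices[:n_test]


N_SAMPLES = 1000  # assumed dataset size, not the size used in the paper

for label, fraction in (("80:20", 0.20), ("75:25", 0.25)):
    train_idx, test_idx = split_indices(N_SAMPLES, fraction)
    print(label, "train:", len(train_idx), "test:", len(test_idx))
```

With the assumed 1,000 samples, the 80:20 split leaves 200 samples for testing and the 75:25 split leaves 250, so the second ratio trades training data for a larger evaluation set.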

80–20

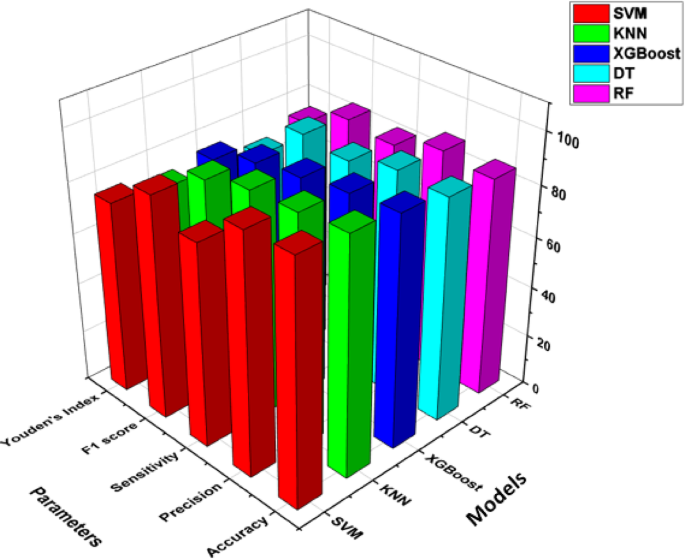

Fig. 5 compares five machine learning classifiers (KNN, XGBoost, SVM, DT, and RF) on key performance metrics. SVM achieves the highest accuracy at 95%, indicating strong generalization capability. KNN follows closely at 93%, and XGBoost also performs well at 91%. DT and RF show slightly lower accuracy at 88% and 86%, respectively. Precision is highest for SVM at 94%, suggesting that it makes fewer false-positive predictions than the other models. Finally, Youden's Index, which combines sensitivity and specificity, is highest for SVM at 76%. In contrast, DT has the lowest value at 70%, suggesting room for improvement.

Overall, SVM demonstrates the best classification performance, while KNN and XGBoost also provide strong results.
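For reference, Youden's Index is conventionally defined as sensitivity + specificity − 1. The sketch below computes it from binary confusion-matrix counts; the counts are hypothetical and are not the study's results, and the function name is chosen for illustration.

```python
def youden_index(tp, fn, tn, fp):
    """Youden's J statistic: sensitivity + specificity - 1."""
    sensitivity = tp / (tp + fn)  # true positive rate
    specificity = tn / (tn + fp)  # true negative rate
    return sensitivity + specificity - 1.0


# Hypothetical counts for one classifier on a held-out test set.
j = youden_index(tp=90, fn=10, tn=80, fp=20)
print(f"Youden's Index: {j:.2f}")
```

A value of 0 corresponds to a classifier that is no better than chance, and a value of 1 corresponds to perfect sensitivity and specificity.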

75–25

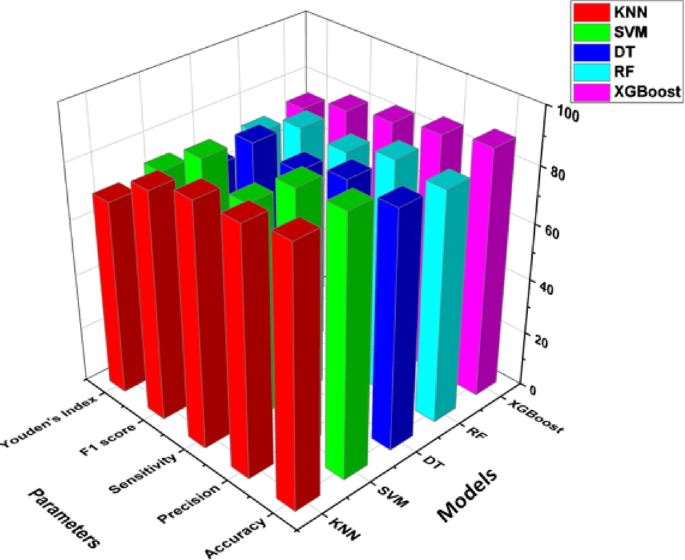

Fig. 6 compares the same five classifiers (KNN, XGBoost, SVM, DT, and RF). SVM again achieves the highest accuracy at 92%, indicating strong generalization and predictive capability. KNN (91%) and XGBoost (89%) also perform well, while DT (85%) and RF (83%) have slightly lower accuracy. Precision, which measures how many of the predicted positive cases are actually positive, is highest for SVM at 91%, suggesting fewer false positives, while the other models range between 85% and 88%.

Overall, SVM, KNN, and XGBoost demonstrate strong classification performance. The choice of the best model depends on the importance of precision versus sensitivity in the specific application.
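The trade-off between precision and sensitivity can be made concrete with a small sketch that derives both, together with the F1-score and accuracy, from binary confusion-matrix counts. The counts and the function name are hypothetical; the source does not say whether its reported metrics are per-class or averaged.

```python
def classification_metrics(tp, fp, fn, tn):
    """Return precision, sensitivity (recall), F1-score, and accuracy."""
    precision = tp / (tp + fp)    # share of predicted positives that are correct
    sensitivity = tp / (tp + fn)  # share of actual positives that are found
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {
        "precision": precision,
        "sensitivity": sensitivity,
        "f1": f1,
        "accuracy": accuracy,
    }


# Hypothetical counts: few false positives, but many missed positives.
metrics = classification_metrics(tp=90, fp=10, fn=30, tn=70)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

In this made-up case precision is high while sensitivity is lower, which is the kind of situation where the preferred model depends on whether false positives or missed tumors are more costly.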

Performance comparison with different optimizers and batch sizes

The previous section shows that the models perform best with the 80:20 split. Of the five models, SVM, KNN, and XGBoost are selected as the best performers. In this section, these three models are trained with different batch sizes and different optimizers.

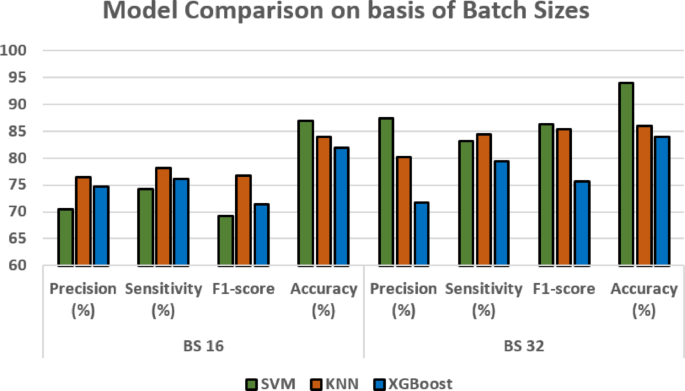

Performance on different batch sizes

In this sub-section, the performance of the three models is compared across different batch sizes.

Table 3 and Fig. 7 present the performance of the three machine learning models (SVM, KNN, and XGBoost) across two batch sizes, 16 and 32 [39,40]. For SVM, performance improves significantly when the batch size increases from 16 to 32. Precision rises from 70.42% to 87.47% and sensitivity improves from 74.28% to 83.20%, raising the F1-score from 69.2 to 86.35. Accuracy also improves from 87% to 94%, making SVM the best-performing model at batch size 32. KNN also improves with the larger batch size. At batch size 16, it has a precision of 76.42%, a sensitivity of 78.1%, and an F1-score of 76.7, leading to 84% accuracy. This suggests that KNN benefits from a larger batch size, though not as much as SVM. XGBoost, however, shows a mixed trend, with only a slight increase in accuracy to 84%. Overall, SVM at batch size 32 performs best, KNN shows steady improvement, and XGBoost remains relatively stable across batch sizes.

Model comparison based on batch sizes.
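To show what changing the batch size from 16 to 32 means in practice, the sketch below cuts a set of sample indices into mini-batches and counts how many batches make up one pass over the data. The sample count and the helper name `iter_batches` are hypothetical; the source does not describe how batching was applied to each model.

```python
def iter_batches(n_samples, batch_size):
    """Yield consecutive index ranges of at most batch_size samples."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, n_samples, batch_size):
        yield range(start, min(start + batch_size, n_samples))


N_SAMPLES = 1000  # assumed training-set size, not the size used in the paper

for batch_size in (16, 32):
    batches = list(iter_batches(N_SAMPLES, batch_size))
    print(
        f"batch size {batch_size}: {len(batches)} batches per epoch, "
        f"last batch holds {len(batches[-1])} samples"
    )
```

Doubling the batch size roughly halves the number of parameter updates per epoch while averaging each update over more samples, which is one common reason results differ between batch sizes.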

Performance on different optimizers

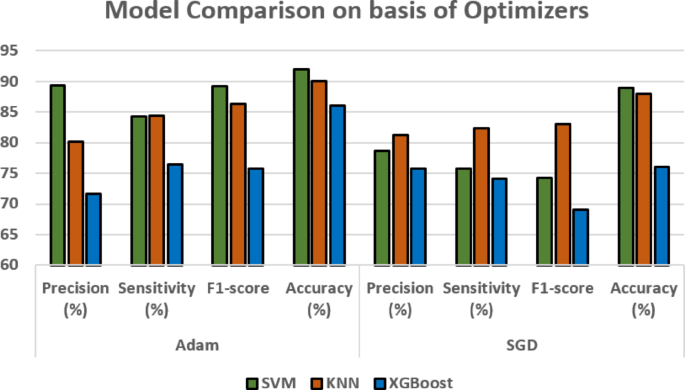

In this sub-section, Table 4 and Fig. 8 illustrate the performance of the three models under different optimizers, using the best-performing batch size of 32.

Model comparison based on optimizers.

Table 4 contrasts the performance of the three machine learning models (SVM, KNN, and XGBoost) under two optimizers, Adam and SGD. The models are evaluated on precision, sensitivity, F1-score, and accuracy, all of which are standard measures of classification performance. For SVM, the Adam optimizer significantly outperforms SGD. With Adam, precision is 89.42%, sensitivity is 84.28%, and the F1-score reaches 89.2, leading to 92% accuracy. In contrast, SGD yields lower values across all metrics. This suggests that Adam allows SVM to generalize better and make more precise predictions.

KNN also performs better with Adam, achieving 80.14% precision and 84.42% sensitivity. With SGD, the reported metrics shift only slightly, with precision at 81.28%. This indicates that KNN is relatively stable across both optimizers, with Adam providing a slight edge overall. XGBoost, however, performs better with SGD in precision (75.71%) but worse in the other metrics. Overall, Adam improves performance for the models considered here, particularly SVM and KNN, which suggests that Adam is generally the better choice for these models in classification tasks.
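The difference between the two optimizers comes down to their update rules. The sketch below applies plain SGD and Adam to a toy one-dimensional objective, f(x) = (x − 3)², chosen purely for illustration. The objective, learning rates, and step count are assumptions, and the source does not describe how the optimizers were attached to SVM, KNN, or XGBoost, so this is not a reproduction of the study's training procedure.

```python
import math


def grad(x):
    """Gradient of the toy objective f(x) = (x - 3)^2."""
    return 2.0 * (x - 3.0)


def run_sgd(x0, lr=0.1, steps=500):
    """Plain gradient descent: step against the raw gradient."""
    x = x0
    for _ in range(steps):
        x -= lr * grad(x)
    return x


def run_adam(x0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    """Adam: step scaled by running averages of the gradient and its square."""
    x, m, v = x0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad(x)
        m = beta1 * m + (1.0 - beta1) * g      # first-moment estimate
        v = beta2 * v + (1.0 - beta2) * g * g  # second-moment estimate
        m_hat = m / (1.0 - beta1 ** t)         # bias correction
        v_hat = v / (1.0 - beta2 ** t)
        x -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x


print("SGD  ends at x =", round(run_sgd(0.0), 4))
print("Adam ends at x =", round(run_adam(0.0), 4))
```

Both rules approach the minimum at x = 3 on this simple problem. On real models, the per-parameter step scaling in Adam often makes it less sensitive to the choice of learning rate, which may contribute to the differences reported in Table 4.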

Performance on different datasets

Table 5 compares two image sets used in recent studies to test diagnostic models for chest diseases. The first is the Lungs Disease Dataset (2022), which has five categories: Bacterial Pneumonia, Coronavirus Disease, Normal, Tuberculosis, and Viral Pneumonia. It contains 10,095 images in total, with roughly 2,000 images in each category. The images are of moderate resolution, around 400 × 300 pixels. The second is the Tuberculosis Chest X-rays (Shenzhen) dataset (2020), which only includes images for tuberculosis. It contains 662 high-resolution images measuring 3000 × 2900 pixels.

The Lungs Disease Dataset gave better results on all the main evaluation measures, with a precision of 87.32%, sensitivity of 93.46%, F1-score of 92.23%, and accuracy of 96.30%. The Shenzhen dataset had slightly lower scores: precision of 86.45%, sensitivity of 92.36%, F1-score of 91.18%, and accuracy of 94.20%. The results on the Lungs Disease Dataset indicate that the model handles several types of lung disease well, making this dataset a good choice for broad studies that cover many different lung conditions.

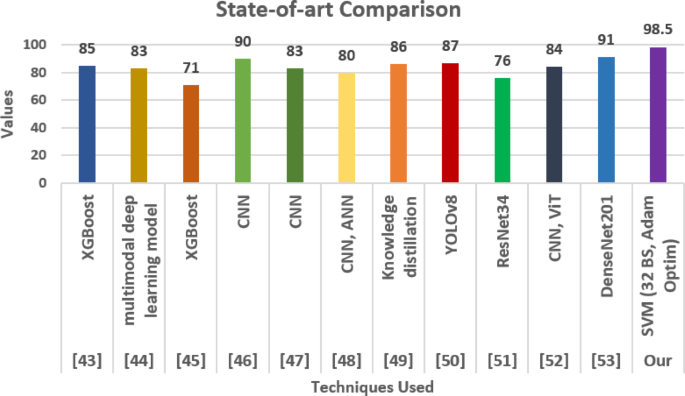

State-of-the-art comparison

The comparison with other researchers is shown in Table 6 and Fig. 9. The authors of [43] used SVM with the same number of images as the proposed work and obtained an accuracy of 93.0%, while the authors of [17] used XGBoost and obtained an accuracy of 85.66%. In contrast, the proposed SVM model with a batch size of 32 and the Adam optimizer achieves an accuracy of 98.5%.

State-of-the-art Comparison.

To demonstrate the effectiveness of our proposed approach, we compared it with several existing state-of-the-art methods for kidney tumor detection and classification reported in the literature. As shown in Table [X], earlier studies have utilized various machine learning and deep learning techniques, including XGBoost [43,45], convolutional neural networks (CNNs) [46,47,48], multimodal deep learning models [44], knowledge distillation [49], YOLOv8 [50], ResNet34 [51], vision transformers (ViT) [52], and DenseNet201 [53]. Reported accuracies among these methods range from 71% to 91%, with the highest accuracy achieved by DenseNet201 at 91%. In contrast, our approach employing an SVM model with a batch size of 32 and the Adam optimizer significantly outperforms these methods, achieving an accuracy of 98.5%. This remarkable improvement highlights the robustness and reliability of our proposed method, which integrates model tuning and comprehensive evaluation through an ablation study. The superior performance indicates that our model has strong potential for real-world clinical deployment to assist in the early and precise detection of kidney tumors.
