Optimizing electric vehicle energy consumption prediction through machine learning and ensemble approaches

Data collection and description

The statistics about EV charging stations in Colorado come from automated systems that track different aspects of charging sessions. These systems are located at city-owned charging stations and record key details about each charging session. The collected information includes:

- Station and location information: The dataset includes detailed information regarding the location of every charging station, featuring the exact address, the municipality where it is located, and the relevant postal code.

- Session details: Starting and ending times, as well as the duration of each charging session.

- Energy metrics: The energy consumed throughout the session (in kWh).

- Environmental impact: Evaluations of the decreases in greenhouse gas (GHG) emissions and gasoline consumption highlight the ecological benefits of using EVs rather than traditional gasoline-powered cars.

Charging station management systems gather the data automatically for real-time monitoring. The aggregated information, accessible on public platforms such as Boulder’s open data portal, covers energy usage, charging duration, cost savings, and greenhouse gas emissions. The dataset was last updated on September 7, 2023. A statistical summary, likely generated by the describe() function in pandas, provides an overview of central tendency, dispersion, and shape for each column in the dataset. The meaning of each row of that summary is explained below.

count: This is the total number of non-empty entries in the column.

mean: This represents the average value of the column.

std: This indicates the degree of variation or spread within a set of values. A low standard deviation suggests that the values are clustered close to the average, whereas a high standard deviation signifies that the values are more widely distributed.

min: This denotes the lowest value found within the entire column.

25%: The first quartile (Q1) is the value below which 25% of the data falls.

50%: The second quartile (Q2), often referred to as the median, is the value below which half of the data falls.

75%: The third quartile (Q3) reflects the value beneath which 75% of the data falls.

max: This indicates the highest value found in the entire column.
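The rows described above can be reproduced with a few lines of standard-library Python. This is a minimal sketch rather than the authors' code: the function name `describe_column` and the sample readings are hypothetical, and it assumes the conventions pandas uses by default (sample standard deviation and linearly interpolated quartiles).

```python
import statistics

def describe_column(values):
    """Summarise one numeric column using the rows listed above."""
    # 'count' covers non-empty entries only, so missing values are dropped first.
    data = sorted(v for v in values if v is not None)
    # method="inclusive" interpolates linearly between observed data points.
    q1, q2, q3 = statistics.quantiles(data, n=4, method="inclusive")
    return {
        "count": len(data),
        "mean": statistics.fmean(data),
        "std": statistics.stdev(data),  # sample standard deviation (n - 1)
        "min": data[0],
        "25%": q1,
        "50%": q2,
        "75%": q3,
        "max": data[-1],
    }

# Hypothetical energy readings in kWh; None marks an empty entry.
energy_kwh = [2.0, 4.0, None, 6.0, 8.0, 10.0]
summary = describe_column(energy_kwh)
```

A large gap between the mean and the 50% row in such a summary would hint at a skewed column, which is one reason to read the rows together rather than one at a time.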

Table 2 provides a statistical overview of a dataset containing information about energy consumption, greenhouse gas savings, gasoline savings, and object identifiers, which may be used to evaluate energy efficiency or environmental impact across various postal codes or regions. After preprocessing (including handling of 7,820 null values), the final dataset contained 140,316 complete records, which were subsequently split into training (80%: 112,253 records) and testing (20%: 28,063 records) sets.
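The reported split sizes are consistent with rounding 80% of the 140,316 records to the nearest whole record. The sketch below illustrates that arithmetic with a shuffled index split; the function name `split_indices`, the shuffling step, and the seed are assumptions, since the text does not state how the split was drawn.

```python
import random

def split_indices(n_records, train_fraction=0.8, seed=42):
    """Shuffle record indices and cut them into train and test parts."""
    indices = list(range(n_records))
    random.Random(seed).shuffle(indices)  # the seed is an arbitrary choice
    n_train = round(n_records * train_fraction)
    return indices[:n_train], indices[n_train:]

train_idx, test_idx = split_indices(140_316)
print(len(train_idx), len(test_idx))
```

With these inputs the two parts contain 112,253 and 28,063 indices, matching the figures quoted above.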

Three core assumptions guide this case study: (1) Colorado’s charging patterns generalize to similar climates, (2) temporal features sufficiently model consumption periodicity (r ≥ 0.82), and (3) hyperparameter ranges cover practical optima (k = 3–20).

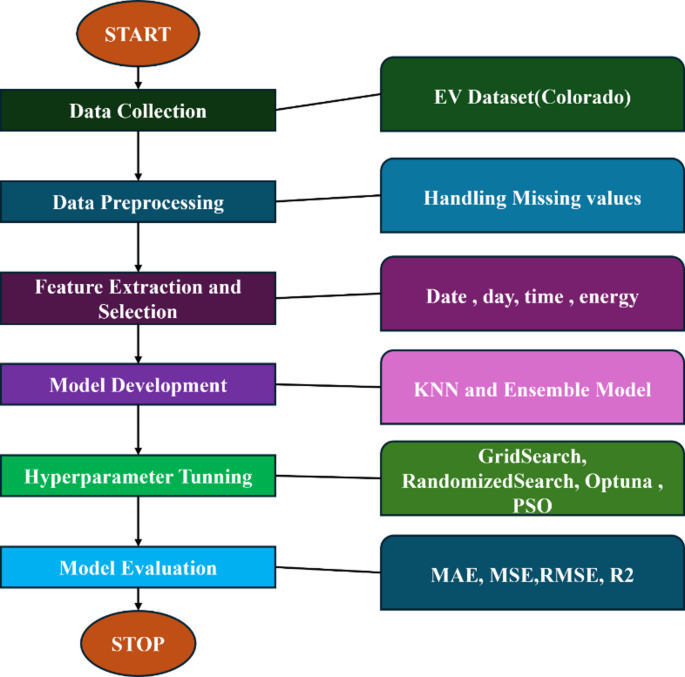

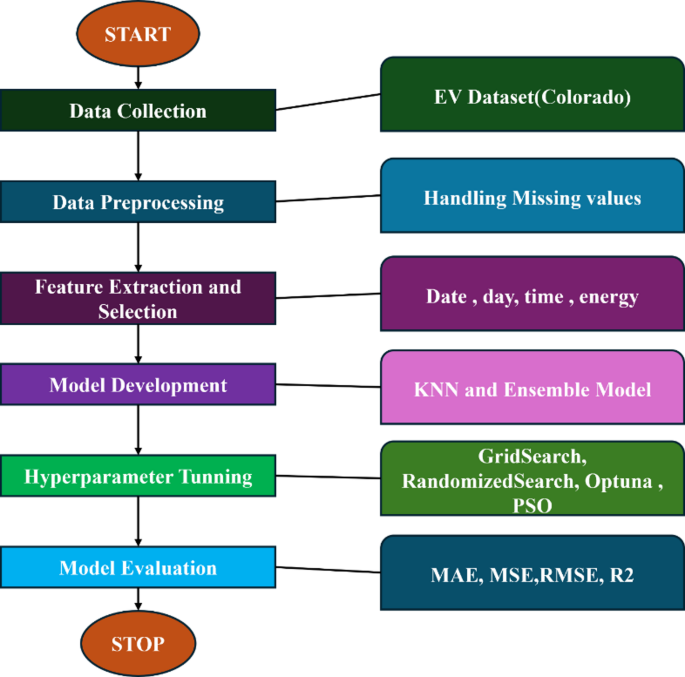

Data preprocessing

Before training the models, the dataset was preprocessed extensively to make it fit for ML tasks. Python is commonly used in such cleaning workflows to confirm that date and time columns are suitable for evaluation or modeling. Converting these columns to DateTime format enables date- and time-related operations such as calculating time differences, grouping data by date, and identifying null values.

In the first preprocessing step, the dataset’s start and end date columns were converted to DateTime format. The conversion produced 7,820 null values because of incorrect date and time information in these columns. Rows containing null values were removed, which improved the dataset’s integrity. Once the missing data had been handled, the total duration and charging time were calculated, both expressed in seconds. The hour, day of the week, and month were then extracted from the start date and time of each session, adding temporal dimensions to the data.

The resulting feature set comprises the total duration, the charging time, the start hour of the charging session, and the day of the week and month in which charging began. These features were selected on the premise that they play a vital role in determining the energy delivered in kilowatt-hours. Total Duration and Charging Time capture session-specific details that directly influence energy usage. Temporal attributes such as Start Hour, Start Day of Week, and Start Month reflect fluctuations in energy demand related to the time of day, weekly trends, and seasonal variations.

The dataset was then divided into training (80%) and testing (20%) sets to evaluate the model’s performance on unseen data. The research flow is shown in Fig. 1. The engineered temporal features (Start Hour, Day of Week, Month) capture known patterns in EV usage:

- Start hour: Reflects peak/off-peak energy demand variations (e.g., evening charging surges).

- Day of week: Accounts for weekday commuting vs. weekend driving pattern differences.

- Month: Captures seasonal effects (temperature impacts on battery efficiency, vacation travel).

These features proved critical, collectively explaining 18.7% of energy consumption variance in our preliminary analysis in Sect. 3.
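The date handling described in this section can be sketched with the standard library alone. Everything named here is an assumption made for illustration: the timestamp layout in `TIME_FORMAT`, the dictionary keys, and the two sample sessions. Charging time is omitted for brevity and would be derived in the same way as the total duration.

```python
from datetime import datetime

# Assumed timestamp layout; the portal's actual format may differ.
TIME_FORMAT = "%Y-%m-%d %H:%M:%S"

def parse_timestamp(text):
    """Return a datetime, or None when the text is not a valid timestamp."""
    try:
        return datetime.strptime(text, TIME_FORMAT)
    except (TypeError, ValueError):
        return None

def engineer_features(sessions):
    """Drop sessions with unparseable dates and derive temporal features."""
    rows = []
    for session in sessions:
        start = parse_timestamp(session.get("start"))
        end = parse_timestamp(session.get("end"))
        if start is None or end is None:
            continue  # mirrors removing rows that became null after conversion
        rows.append({
            "total_duration_s": (end - start).total_seconds(),
            "start_hour": start.hour,
            "start_day_of_week": start.weekday(),  # Monday = 0 ... Sunday = 6
            "start_month": start.month,
            "energy_kwh": session["energy_kwh"],
        })
    return rows

# Hypothetical sessions; the second one has an invalid start time.
sessions = [
    {"start": "2023-01-02 18:30:00", "end": "2023-01-02 20:00:00", "energy_kwh": 9.4},
    {"start": "2023-13-45 25:61:00", "end": "2023-01-03 09:00:00", "energy_kwh": 3.1},
]
features = engineer_features(sessions)
```

Only the first session survives: its start time parses cleanly, giving a 5,400-second duration that began at hour 18 on a Monday in January.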

Fig. 1. Schematic overview of the research workflow.

Model selection and baseline training

KNN is a straightforward, non-parametric algorithm that can be applied to both regression and classification tasks. For classification it returns the predominant class among the k nearest data points to a query point; for regression it returns the average of their labels. The variable k is an integer, and uniform weights are typically applied, giving equal significance to all neighbors. The weights can be changed, however, with options such as ‘uniform’ for equal weights and ‘distance’ for weights that are inversely related to distance [32]. Custom weight functions can also be defined. The mathematical form is given in Eq. 1.

$$\widehat{y}=\frac{1}{k}\sum_{i\in N_{k}}y_{i}$$

(1)

where

\(\widehat{y}\): The predicted value.

\(k\): The number of nearest neighbors.

\(N_{k}\): The set of the k nearest neighbors of the query point.

\(y_{i}\): The actual target value (label) of the i-th nearest neighbor.
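Eq. 1 and the two weighting options can be illustrated with a small, dependency-free sketch. The function name `knn_predict`, the Euclidean distance, the default k = 5, and the toy data are assumptions chosen for illustration, not details taken from the study.

```python
import math

def knn_predict(train_X, train_y, query, k=5, weights="uniform"):
    """Predict a target for `query` from its k nearest training points."""
    # Euclidean distance from the query to every training point.
    distances = [(math.dist(x, query), y) for x, y in zip(train_X, train_y)]
    neighbours = sorted(distances, key=lambda pair: pair[0])[:k]
    if weights == "uniform":
        # Eq. 1: plain average of the neighbours' targets.
        return sum(y for _, y in neighbours) / len(neighbours)
    # 'distance': weights inversely proportional to distance.
    exact = [y for d, y in neighbours if d == 0.0]
    if exact:
        return sum(exact) / len(exact)  # exact matches take all the weight
    total_weight = sum(1.0 / d for d, _ in neighbours)
    return sum(y / d for d, y in neighbours) / total_weight

# Hypothetical data: one feature (charging time in hours), target in kWh.
train_X = [(1.0,), (2.0,), (3.0,), (4.0,), (10.0,)]
train_y = [6.0, 12.0, 18.0, 24.0, 60.0]
uniform_pred = knn_predict(train_X, train_y, (2.5,), k=2)
weighted_pred = knn_predict(train_X, train_y, (2.2,), k=2, weights="distance")
```

With uniform weights the query at 2.5 h averages its two neighbors to 15.0 kWh. With distance weights the query at 2.2 h is pulled toward the closer neighbor and lands near 13.2 kWh.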

A baseline KNN model was trained using default hyperparameters as shown in Table 3.

The model was evaluated using metrics such as Mean Absolute Error (MAE), Mean Squared Error (MSE), Root Mean Squared Error (RMSE), and R² Score.
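For reference, the four metrics can be computed as follows. This is a minimal sketch using the standard definitions; the function name `regression_metrics` and the sample values are hypothetical.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return MAE, MSE, RMSE and R² for paired actual and predicted values."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e * e for e in errors) / n
    rmse = math.sqrt(mse)
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)                 # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    r2 = 1.0 - ss_res / ss_tot
    return {"MAE": mae, "MSE": mse, "RMSE": rmse, "R2": r2}

# Hypothetical actual and predicted energy values in kWh.
y_true = [10.0, 12.0, 14.0, 16.0]
y_pred = [11.0, 12.0, 13.0, 18.0]
metrics = regression_metrics(y_true, y_pred)
```

MAE and RMSE are in the same unit as the target (kWh), whereas R² is unitless, which makes it the easier figure to compare across datasets.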

Hyperparameter tuning

To enhance the performance of the KNN model, comprehensive hyperparameter tuning was carried out using several optimization techniques. GridSearchCV was used to search systematically through a predefined grid of hyperparameters, which included the number of neighbors (k), distance metrics (Euclidean, Manhattan, or Minkowski), and weight options (uniform or distance), and the optimal combination was identified. Additionally, RandomizedSearchCV was employed to sample the hyperparameter space at random, which is more efficient for larger parameter spaces. All methods used 5-fold cross-validation to evaluate hyperparameter combinations, supporting generalizability.
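The idea behind an exhaustive search scored by 5-fold cross-validation can be sketched without any library. The block below is an illustrative stand-in, not the scikit-learn implementation: the helper names, the synthetic data, and the interleaved fold assignment are all assumptions, and 'minkowski' is treated as Euclidean (its p = 2 case).

```python
import itertools
import math
import random

def distance(a, b, metric):
    if metric == "manhattan":
        return sum(abs(u - v) for u, v in zip(a, b))
    return math.dist(a, b)  # 'euclidean'; 'minkowski' with p = 2 is the same

def knn_predict(train, query, n_neighbors, weights, metric):
    """KNN regression; `train` is a list of (features, target) pairs."""
    nearest = sorted((distance(x, query, metric), y) for x, y in train)[:n_neighbors]
    if weights == "uniform":
        return sum(y for _, y in nearest) / len(nearest)
    w = [1.0 / (d + 1e-9) for d, _ in nearest]  # small constant avoids division by zero
    return sum(wi * y for wi, (_, y) in zip(w, nearest)) / sum(w)

def cv_rmse(data, params, n_folds=5):
    """Mean RMSE over n_folds held-out folds for one parameter setting."""
    scores = []
    for fold in range(n_folds):
        test = data[fold::n_folds]
        train = [row for i, row in enumerate(data) if i % n_folds != fold]
        sq = [(knn_predict(train, x, **params) - y) ** 2 for x, y in test]
        scores.append(math.sqrt(sum(sq) / len(sq)))
    return sum(scores) / n_folds

grid = {"n_neighbors": [3, 5, 7, 9, 11],
        "weights": ["uniform", "distance"],
        "metric": ["euclidean", "manhattan", "minkowski"]}

# Synthetic data: (charging hours, start hour) -> energy in kWh, with noise.
rng = random.Random(0)
data = []
for _ in range(100):
    hours = rng.uniform(0.5, 8.0)
    data.append(((hours, float(rng.randrange(24))), 6.0 * hours + rng.gauss(0.0, 1.0)))

# Exhaustive search: score every combination and keep the lowest RMSE.
candidates = [dict(zip(grid, combo)) for combo in itertools.product(*grid.values())]
best = min(candidates, key=lambda p: cv_rmse(data, p))
```

Scoring only a random subset, for example `random.sample(candidates, 10)`, instead of every candidate gives the randomized variant of the same procedure.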

- GridSearchCV tested all combinations of a predefined grid: 'n_neighbors': [3, 5, 7, 9, 11], 'weights': ['uniform', 'distance'], 'metric': ['euclidean', 'manhattan', 'minkowski'].

- RandomizedSearchCV sampled 100 random combinations from an extended grid: 'n_neighbors': [3, 5, 7, 9, 11, 13, 15, 17], 'weights': ['uniform', 'distance'], 'metric': ['euclidean', 'manhattan', 'minkowski', 'chebyshev'], 'leaf_size': [10, 20, 30, 40, 50], 'p': [1, 2].

- Optuna employed Bayesian optimization over 50 trials, dynamically adjusting hyperparameters to minimize RMSE.

- PSO used a fixed swarm size and number of iterations but yielded suboptimal results, which is attributed to the high-dimensional search space. Each particle position param was decoded as n_neighbors = int(param[0]) (first parameter, converted to an integer), weight_index = int(param[1]) (index for weights; 0: uniform, 1: distance), and metric_index = int(param[2]) (index for metric; 0: euclidean, 1: manhattan, 2: minkowski).

The PSO hyperparameter space was set up as follows.

n_neighbors: [3, 5, 7, 9, 11, 13, 15, 17]; weights: {0: 'uniform', 1: 'distance'}; metric: {0: 'euclidean', 1: 'manhattan', 2: 'minkowski'}.

Bounds for each parameter.

lb = [3, 0, 0] gives the lower bounds for n_neighbors, weight (0 = uniform), and metric (0 = euclidean); ub = [17, 1, 2] gives the upper bounds for n_neighbors, weight (1 = distance), and metric (2 = minkowski).

Number of particles and optimizer options.

options = {'c1': 0.5, 'c2': 0.3, 'w': 0.9}; the PSO optimizer was initialized as optimizer = ps.single.GlobalBestPSO(n_particles=10, dimensions=3, options=options, bounds=(np.array(lb), np.array(ub))).

Furthermore, Optuna, a framework based on Bayesian optimization, was used to intelligently explore the hyperparameter landscape. Lastly, Particle Swarm Optimization (PSO), a population-based optimization algorithm, was implemented to further investigate the hyperparameter space.
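To make the PSO setup concrete, the following dependency-free sketch implements a global-best swarm with the bounds, swarm size, and coefficients quoted above. It is not the pyswarms implementation: the iteration count of 30, the seed, the clipping at the bounds, and in particular `toy_objective` (a stand-in for cross-validated RMSE) are assumptions made for illustration.

```python
import random

WEIGHTS = ["uniform", "distance"]
METRICS = ["euclidean", "manhattan", "minkowski"]
LB, UB = [3.0, 0.0, 0.0], [17.0, 1.0, 2.0]

def decode(position):
    """Map a continuous particle position to KNN hyperparameters."""
    return {"n_neighbors": int(position[0]),
            "weights": WEIGHTS[int(position[1])],
            "metric": METRICS[int(position[2])]}

def toy_objective(position):
    """Hypothetical stand-in for cross-validated RMSE (lower is better)."""
    params = decode(position)
    penalty = 0.0 if params["weights"] == "distance" else 0.5
    return abs(params["n_neighbors"] - 9) + penalty

def global_best_pso(objective, n_particles=10, iters=30,
                    c1=0.5, c2=0.3, w=0.9, seed=0):
    rng = random.Random(seed)
    dims = len(LB)
    pos = [[rng.uniform(LB[d], UB[d]) for d in range(dims)]
           for _ in range(n_particles)]
    vel = [[0.0] * dims for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_cost = [objective(p) for p in pos]
    g = min(range(n_particles), key=pbest_cost.__getitem__)
    gbest, gbest_cost = pbest[g][:], pbest_cost[g]
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dims):
                r1, r2 = rng.random(), rng.random()
                # Inertia + pull toward personal best + pull toward global best.
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                # Clip to the bounds so decoding always yields valid indices.
                pos[i][d] = min(max(pos[i][d] + vel[i][d], LB[d]), UB[d])
            cost = objective(pos[i])
            if cost < pbest_cost[i]:
                pbest[i], pbest_cost[i] = pos[i][:], cost
                if cost < gbest_cost:
                    gbest, gbest_cost = pos[i][:], cost
    return decode(gbest), gbest_cost

best_params, best_cost = global_best_pso(toy_objective)
```

One property of this encoding is worth noting: int() truncates, so every weight position in [0, 1) decodes to 'uniform' and only a particle sitting exactly on the upper bound decodes to 'distance'. Truncation also lets n_neighbors take even values that are absent from the listed grid. Rounding to the nearest index would spread the options more evenly; this is an observation about the sketch, not a claim about the original implementation.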

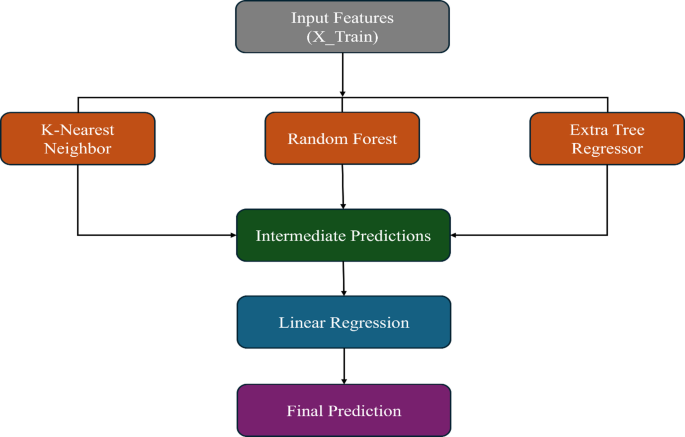

Ensemble model development

To improve predictive performance, a stacking hybrid ensemble model was developed. This ensemble approach integrates the predictions from several base models, specifically K-Nearest Neighbors (KNN), Random Forest, and Extra Trees Regressor, using a linear regression meta-model to generate the final predictions, as illustrated in Fig. 2. The selection of KNN, Random Forest (RF), and Extra Trees (ET) as base models was driven by their complementary strengths in addressing EV energy prediction challenges. KNN captures local consumption patterns critical for short-term fluctuations, while RF handles complex nonlinear interactions between vehicle and environmental factors. ET introduces additional diversity through extreme randomization, improving ensemble robustness. This combination provides balanced coverage of (1) instance-based learning (KNN), (2) global feature interactions (RF), and (3) variance reduction (ET), addressing the limitations of any single-model approach.

Fig. 2. Schematic of the stacking ensemble: Base models (KNN, Random Forest, Extra Trees) feed predictions into a meta-learner (Linear Regression).

The proposed stacking-based regression model is mathematically formulated to integrate multiple base models, specifically K-Nearest Neighbors (KNN), Random Forest (RF), and Extra Trees (ET), with Linear Regression (LR) serving as the meta-model. This hybrid approach leverages the strengths of each individual learning algorithm, aiming to enhance predictive performance through a layered ensemble framework.

Base model predictions

Let the input feature space be represented as in Eq. 2.

$$X=\left\{x_{1},x_{2},\dots,x_{n}\right\}\in\mathbb{R}^{n\times d}$$

(2)

where \(\mathbb{R}\) represents the set of real numbers, \(n\) is the number of samples (rows) in the dataset, and \(d\) is the number of features (columns) in the dataset. The target variable, representing the EV charging consumption in kWh, is denoted as in Eq. 3.

$$y=\left\{y_{1},y_{2},\dots,y_{n}\right\}\in\mathbb{R}^{n}$$

(3)

Three base models were used: K-Nearest Neighbors Regressor (KNN), Random Forest Regressor (RF), and Extra Trees Regressor (ET). Each base model \(f_{i}\) is trained using the dataset \((X_{train},y_{train})\) and generates predictions for a given test sample \(X_{test}\), as shown in Eq. 4.

$$\widehat{y}_{i}=f_{i}\left(X_{test}\right)\quad\text{for } i\in\{KNN,RF,ET\}$$

(4)

Thus, the output from all base models forms a matrix \(\mathbf{Z}\), as shown in Eq. 5.

$$\mathbf{Z}=\left[\begin{array}{ccc}\widehat{y}_{KNN}^{(1)} & \widehat{y}_{RF}^{(1)} & \widehat{y}_{ET}^{(1)}\\ \widehat{y}_{KNN}^{(2)} & \widehat{y}_{RF}^{(2)} & \widehat{y}_{ET}^{(2)}\\ \vdots & \vdots & \vdots\\ \widehat{y}_{KNN}^{(N)} & \widehat{y}_{RF}^{(N)} & \widehat{y}_{ET}^{(N)}\end{array}\right]$$

(5)

where \(\mathbf{Z}\) is the meta-feature matrix, and each row corresponds to an instance in the test dataset.

Meta-model (Linear regression)

The meta-model takes the outputs of the base models (\(\mathbf{Z}\)) as inputs and learns weights \(w_{i}\) to form a final prediction, as shown in Eq. 6.

$$\widehat{y}=w_{0}+w_{1}\widehat{y}_{KNN}+w_{2}\widehat{y}_{RF}+w_{3}\widehat{y}_{ET}$$

(6)

where \(w_{0}\) is the intercept (bias) and \(w_{1}\), \(w_{2}\), \(w_{3}\) are the learned weights for each base model.
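How the weights in Eq. 6 can be learned is sketched below as an ordinary least-squares fit solved through the normal equations. The helper names and the numbers in `Z` are hypothetical, and the targets are constructed from known weights purely so that the fit can be checked; how the meta-features are produced is outside the scope of this sketch.

```python
def solve_linear_system(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):  # back substitution
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def fit_meta_model(Z, y):
    """Least-squares weights [w0, w1, w2, w3] for Eq. 6."""
    design = [[1.0] + list(row) for row in Z]  # leading 1 carries the intercept w0
    p = len(design[0])
    # Normal equations: (D^T D) w = D^T y
    gram = [[sum(r[i] * r[j] for r in design) for j in range(p)] for i in range(p)]
    rhs = [sum(r[i] * t for r, t in zip(design, y)) for i in range(p)]
    return solve_linear_system(gram, rhs)

def meta_predict(weights, z_row):
    """Eq. 6: intercept plus weighted base-model predictions."""
    return weights[0] + sum(w * z for w, z in zip(weights[1:], z_row))

# Hypothetical base-model predictions in kWh (columns: KNN, RF, ET).
Z = [[10.0, 12.0, 9.0], [20.0, 18.0, 23.0], [5.0, 8.0, 4.0],
     [15.0, 13.0, 17.0], [8.0, 7.0, 11.0], [12.0, 15.0, 10.0]]
# Targets built from known weights so the recovered fit can be verified.
true_w = [1.0, 0.2, 0.5, 0.3]
y = [meta_predict(true_w, row) for row in Z]
weights = fit_meta_model(Z, y)
```

Because base-model predictions tend to be strongly correlated, the normal equations can become ill-conditioned on real data, which is one reason library implementations typically rely on more stable solvers.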

Base models predictions

Each base model generates predictions. The KNN regressor predicts the output by averaging the target values of the k-nearest neighbors, as shown in Eq. 7.

$$\widehat{y}_{KNN}=f_{KNN}\left(X\right)=\frac{1}{k}\sum_{j\in N_{k}\left(X\right)}y_{j}$$

(7)

where \(N_{k}\left(X\right)\) denotes the set of the k-nearest neighbors of \(X\). RF and ET are ensemble learning models based on decision trees. Their predictions are obtained by averaging outputs from multiple trees, as shown in Eq. 8.

$$\widehat{y}_{i}=f_{i}\left(X\right)=\frac{1}{T}\sum_{t=1}^{T}h_{t}\left(X,\Theta_{i}\right),\quad i\in\{RF,ET\}$$

(8)

where \(T\) is the number of decision trees in the ensemble and \(h_{t}\left(X,\Theta_{i}\right)\) is the prediction of the \(t\)th tree with parameters \(\Theta_{i}\).

Final unified model equation

By substituting the base model equations (Eqs. 7 and 8) into the meta-model Eq. 6, the final stacking hybrid ensemble model prediction is obtained as in Eq. 9.

$$\widehat{y}=w_{0}+w_{1}\left(\frac{1}{k}\sum_{j\in N_{k}\left(X\right)}y_{j}\right)+w_{2}\left(\frac{1}{T}\sum_{t=1}^{T}h_{t}\left(X,\Theta_{RF}\right)\right)+w_{3}\left(\frac{1}{T}\sum_{t=1}^{T}h_{t}\left(X,\Theta_{ET}\right)\right)$$

(9)

This equation represents the final EV charging consumption prediction, where the meta-model optimally combines the predictions from KNN, RF, and ET models.
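As a worked illustration of the unified equation, consider purely hypothetical values: k = 3 neighbors with targets 10, 12, and 14 kWh, T = 2 trees per forest with outputs 11 and 13 kWh (RF) and 12 and 14 kWh (ET), and assumed weights \(w_{0}=0.5\), \(w_{1}=0.2\), \(w_{2}=0.5\), \(w_{3}=0.3\). None of these numbers come from the study.

$$\widehat{y}_{KNN}=\frac{10+12+14}{3}=12,\qquad\widehat{y}_{RF}=\frac{11+13}{2}=12,\qquad\widehat{y}_{ET}=\frac{12+14}{2}=13$$

$$\widehat{y}=0.5+0.2\cdot 12+0.5\cdot 12+0.3\cdot 13=0.5+2.4+6.0+3.9=12.8\ \text{kWh}$$

Each base model thus contributes in proportion to its learned weight, and the intercept absorbs any constant offset shared by the base predictions.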

Model evaluation and validation

The performance of all models was assessed through visualizations, including Actual vs. Predicted Plots, Scatter Plots, and Residual Plots, alongside various evaluation metrics. A range of widely used metrics for regression models was employed to evaluate each model, as detailed in Table 4.

With the methodology for model development and optimization clearly outlined, the subsequent section presents the results obtained from applying these techniques. The performance of the baseline KNN model, tuned variants, and the stacking hybrid ensemble model is evaluated using key metrics, demonstrating the effectiveness of the proposed approach in improving prediction accuracy. Inference time, measured as the average prediction latency per test sample, ensures suitability for real-time applications. Training time accounts for hyperparameter tuning and model stacking.
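Average prediction latency per test sample can be measured along the following lines. This is a minimal sketch under stated assumptions: `toy_model` is a hypothetical stand-in for a trained model, and taking the fastest of several passes is one common way to reduce timing noise, not necessarily the procedure used in the study.

```python
import time

def average_latency_per_sample(predict, samples, repeats=3):
    """Seconds per prediction, averaged over samples, from the fastest pass."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        for sample in samples:
            predict(sample)
        best = min(best, time.perf_counter() - start)
    return best / len(samples)

def toy_model(sample):
    """Hypothetical stand-in for a trained model's predict call."""
    return 6.0 * sample[0]

samples = [(h / 10.0,) for h in range(1, 1001)]
latency_s = average_latency_per_sample(toy_model, samples)
```

Timing one sample at a time reflects a real-time setting; batch prediction would usually report a lower per-sample figure, so the two should not be compared directly.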
